In the beginning stages of a web crawling project or when you have to scale to only a few hundred requests, you might want a simple proxy rotator that uses the free proxy pools available on the internet to populate itself periodically.

We can use a website like https://sslproxies.org/ to fetch public proxies every few minutes and use them in our Go projects.
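Fetching "every few minutes" means a refresh loop has to run alongside the code that uses the proxies. Below is a minimal sketch built on the standard library's `time.Ticker`. `startRefresher` is a hypothetical helper. It takes the scraping step as a `fetch` function value, so you can plug in a real scraper or, as here, a fake one. It fetches once before returning, because a ticker's first tick only arrives after a full interval.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

// startRefresher is a hypothetical helper (not part of any library). It calls
// fetch once before returning, then again on every tick of a time.Ticker,
// passing each successful result to store. The returned stop function ends
// the loop and waits for the background goroutine to exit.
func startRefresher(interval time.Duration, fetch func() ([]string, error), store func([]string)) (stop func()) {
	refresh := func() {
		list, err := fetch()
		if err != nil {
			fmt.Println("refresh failed, keeping the old list:", err)
			return
		}
		store(list)
	}

	// A ticker's first tick only arrives after one full interval,
	// so populate the list synchronously first.
	refresh()

	done := make(chan struct{})
	var wg sync.WaitGroup
	wg.Add(1)
	go func() {
		defer wg.Done()
		ticker := time.NewTicker(interval)
		defer ticker.Stop()
		for {
			select {
			case <-ticker.C:
				refresh()
			case <-done:
				return
			}
		}
	}()

	return func() {
		close(done)
		wg.Wait()
	}
}

func main() {
	var mu sync.Mutex
	var current []string
	round := 0

	stop := startRefresher(50*time.Millisecond,
		func() ([]string, error) { // stand-in for scraping the proxy site
			round++
			return []string{fmt.Sprintf("203.0.113.%d:8080", round)}, nil
		},
		func(list []string) {
			mu.Lock()
			current = list
			mu.Unlock()
		})

	time.Sleep(120 * time.Millisecond)
	stop()

	mu.Lock()
	fmt.Println("latest list:", current)
	mu.Unlock()
}
```

In a real setup, `interval` would be a few minutes. `fetch` would wrap the Goquery scraping, and `store` would swap the new list, under a mutex, into whatever structure the request code reads from.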

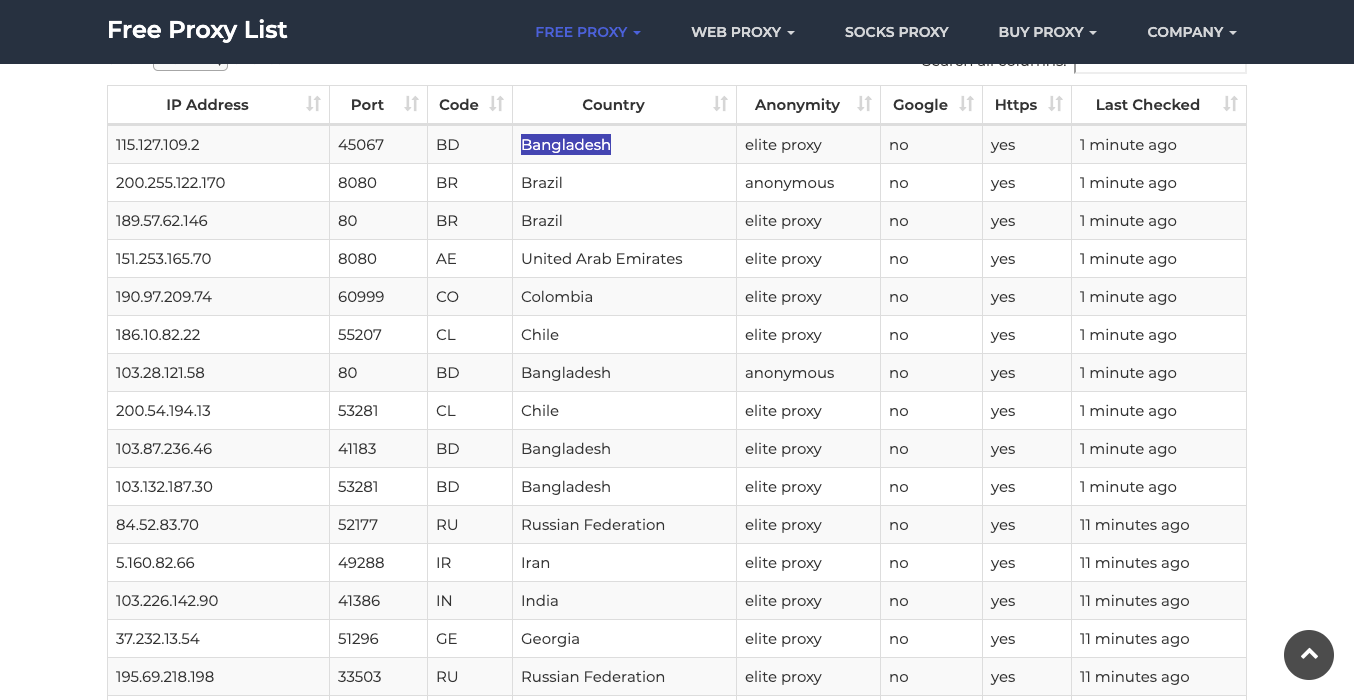

This is what the site looks like:

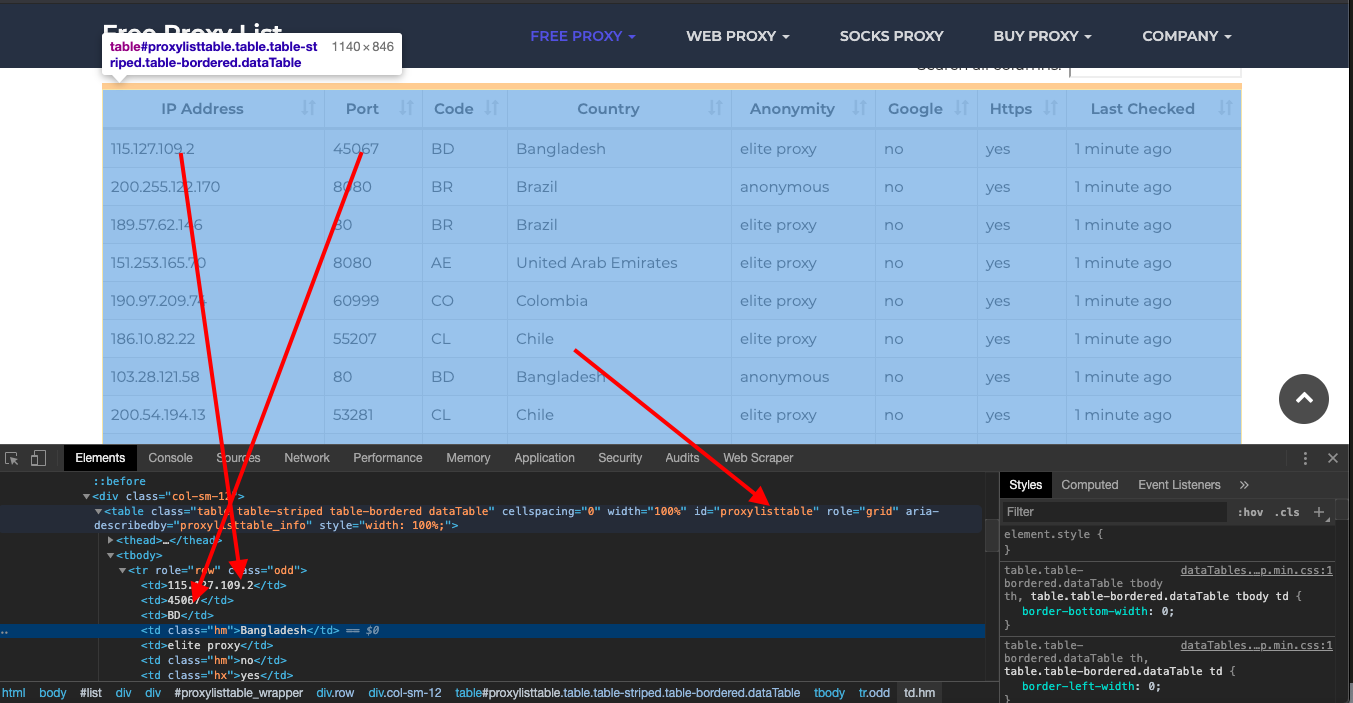

If you inspect the HTML with your browser's developer tools, you will see that the list is contained in a table with the id `proxylisttable`.

The IP and port are the first and second cells (`td` elements) in each row.

So in Go we need to select the table, iterate over its rows, and pull out the text of the first and second cells of each row.
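The cells are plain text, so rows from a free list are worth validating before use. A blank row, stray whitespace, or a malformed port would otherwise end up as a broken proxy URL. Here is a small standard-library sketch of that check. `parseProxy` is a hypothetical helper, not part of Goquery. It returns the `host:port` string that a proxy URL needs.

```go
package main

import (
	"fmt"
	"net"
	"strconv"
	"strings"
)

// parseProxy is a hypothetical helper: it validates the text of the IP and
// port cells and returns a "host:port" string, or ok=false for bad rows.
func parseProxy(ipCell, portCell string) (hostPort string, ok bool) {
	ip := net.ParseIP(strings.TrimSpace(ipCell))
	if ip == nil {
		return "", false
	}
	port, err := strconv.Atoi(strings.TrimSpace(portCell))
	if err != nil || port < 1 || port > 65535 {
		return "", false
	}
	// JoinHostPort adds brackets for IPv6 addresses, e.g. "[2001:db8::1]:3128".
	return net.JoinHostPort(ip.String(), strconv.Itoa(port)), true
}

func main() {
	rows := [][2]string{
		{" 203.0.113.10 ", "8080"},
		{"2001:db8::1", "3128"},
		{"not-an-ip", "8080"},
		{"203.0.113.11", "99999"},
	}
	for _, r := range rows {
		if hp, ok := parseProxy(r[0], r[1]); ok {
			fmt.Println("accepted", hp)
		} else {
			fmt.Println("skipped", r)
		}
	}
}
```

Inside a Goquery `Each` callback, the two `Text()` values would go through `parseProxy`, and any row that comes back with `ok == false` would be skipped.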

Fetching Proxies with Goquery

To scrape the proxy list, we'll use the Goquery library, which provides jQuery-style DOM manipulation.

First, from inside your Go module (create one with `go mod init` if needed), install Goquery:

```bash
go get github.com/PuerkitoBio/goquery
```

Then we can make a simple GET request to fetch the proxy list HTML:

```go
package main

import (
	"net/http"

	"github.com/PuerkitoBio/goquery"
)

func main() {
	url := "https://sslproxies.org/"

	res, err := http.Get(url)
	if err != nil {
		panic(err)
	}
	defer res.Body.Close()

	// Load HTML into Goquery
	doc, err := goquery.NewDocumentFromReader(res.Body)
	if err != nil {
		panic(err)
	}

	// Query DOM... (see the next section)
	_ = doc // keeps this snippet compiling until doc is queried
}
```

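Parsing the Proxy List

On the proxy list page, the IP and port are contained in the first and second `td` elements of each table row. We can select these elements and extract their text. The `Proxy` struct is defined in the complete code at the end.

```go
proxies := []Proxy{}

doc.Find("#proxylisttable tbody tr").Each(func(i int, s *goquery.Selection) {
	// Trim in case the cells contain surrounding whitespace.
	ip := strings.TrimSpace(s.Find("td:nth-child(1)").Text())
	port := strings.TrimSpace(s.Find("td:nth-child(2)").Text())

	proxies = append(proxies, Proxy{IP: ip, Port: port})
})
```

Here we iterate through each table row, find the relevant `td` elements, extract their text, and append a `Proxy` value to the `proxies` slice.

Fetching Proxies on a Schedule

To refresh the proxies periodically, we can use Go's `time.Ticker`:

```go
fetchProxies() // populate the list right away; the first tick only arrives after 2 minutes

ticker := time.NewTicker(2 * time.Minute)
defer ticker.Stop()

for range ticker.C {
	fetchProxies()
}
```

This calls `fetchProxies` every 2 minutes. The loop never exits, so it should run in its own goroutine to let the rest of the program keep making requests.

Using a Random Proxy

To use a random proxy from the list for each request, we can select a random index. On Go versions before 1.20, call `rand.Seed` once at startup. Otherwise the global `math/rand` source produces the same sequence on every run.

```go
// rand.Intn panics if len(proxies) is 0, so make sure the list is populated first.
r := rand.Intn(len(proxies))
proxy := proxies[r]
```

Then we can create an HTTP client using the chosen proxy:

```go
client := &http.Client{
	Timeout: 15 * time.Second, // free proxies are often slow or unresponsive
	Transport: &http.Transport{
		Proxy: http.ProxyURL(&url.URL{
			Scheme: "http",
			Host:   proxy.IP + ":" + proxy.Port, // the struct fields are exported: IP and Port
		}),
	},
}
```

And make requests using this client:

```go
req, err := http.NewRequest(http.MethodGet, "https://example.com", nil)
if err != nil {
	panic(err)
}

resp, err := client.Do(req)
if err != nil {
	panic(err) // often a dead proxy; a crawler would retry with another one
}
defer resp.Body.Close()
```

Complete Code

Here is the full code to fetch proxies on a schedule and make requests using random proxies. A background goroutine writes the list while requests read it, so access is guarded by a `sync.RWMutex`:

```go
package main

import (
	"errors"
	"fmt"
	"math/rand"
	"net/http"
	"net/url"
	"strings"
	"sync"
	"time"

	"github.com/PuerkitoBio/goquery"
)

type Proxy struct {
	IP   string
	Port string
}

var (
	mu      sync.RWMutex // guards proxies: written by the refresher, read by requests
	proxies []Proxy
)

func main() {
	fetchProxies() // populate the list before the first tick

	// Refresh every 2 minutes in the background so main can keep working.
	go func() {
		ticker := time.NewTicker(2 * time.Minute)
		defer ticker.Stop()
		for range ticker.C {
			fetchProxies()
		}
	}()

	// Make requests...
	if err := makeRequest("https://example.com"); err != nil {
		fmt.Println("request failed:", err)
	}
}

func fetchProxies() {
	// Fetch proxy list HTML
	res, err := http.Get("https://sslproxies.org/")
	if err != nil {
		fmt.Println("fetching proxy list:", err)
		return // keep the previous list
	}
	defer res.Body.Close()

	// Parse with Goquery
	doc, err := goquery.NewDocumentFromReader(res.Body)
	if err != nil {
		fmt.Println("parsing proxy list:", err)
		return
	}

	// Populate a new slice, then swap it in under the lock
	var fresh []Proxy
	doc.Find("#proxylisttable tbody tr").Each(func(i int, s *goquery.Selection) {
		ip := strings.TrimSpace(s.Find("td:nth-child(1)").Text())
		port := strings.TrimSpace(s.Find("td:nth-child(2)").Text())
		if ip != "" && port != "" {
			fresh = append(fresh, Proxy{IP: ip, Port: port})
		}
	})
	if len(fresh) > 0 {
		mu.Lock()
		proxies = fresh
		mu.Unlock()
	}
}

func makeRequest(target string) error {
	mu.RLock()
	if len(proxies) == 0 { // rand.Intn(0) would panic
		mu.RUnlock()
		return errors.New("no proxies available")
	}
	proxy := proxies[rand.Intn(len(proxies))]
	mu.RUnlock()

	// Create HTTP client with proxy
	client := &http.Client{
		Timeout: 15 * time.Second,
		Transport: &http.Transport{
			Proxy: http.ProxyURL(&url.URL{Scheme: "http", Host: proxy.IP + ":" + proxy.Port}),
		},
	}

	// Make request
	resp, err := client.Get(target)
	if err != nil {
		return fmt.Errorf("via %s:%s: %w", proxy.IP, proxy.Port, err)
	}
	defer resp.Body.Close()

	fmt.Println("via", proxy.IP+":"+proxy.Port, "->", resp.Status)
	return nil
}
```

This provides a simple proxy rotator in Go that fetches new proxies periodically and selects one at random for each request. With some additional error handling, it can be integrated into a web scraping or crawling project.

If you want to use this in production and scale to thousands of links, you will find that many free proxies won't hold up under the speed and reliability requirements. In this scenario, using a rotating proxy service to rotate IPs is almost a must. Otherwise, you tend to get IP-blocked a lot by automatic location, usage, and bot detection algorithms.

Our rotating proxy server, Proxies API, provides a simple API that can solve IP blocking problems instantly. Hundreds of our customers have successfully solved the headache of IP blocks with it. The whole thing can be accessed with a simple API call like the one below, from any programming language:

```bash
curl "http://api.proxiesapi.com/?key=API_KEY&url=https://example.com"
```

We have a running offer of 1000 API calls completely free. Register and get your free API key on the Proxies API website.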
