When starting a web crawling project or needing to scale to a few hundred requests, having a simple proxy rotator that populates itself from free proxy pools can be useful.

We can use a site like https://sslproxies.org/ to fetch public proxies periodically and use them in Scala projects.

Let's walk through a simple tutorial to do this in Scala. We'll be using ScalaJS to handle web scraping.

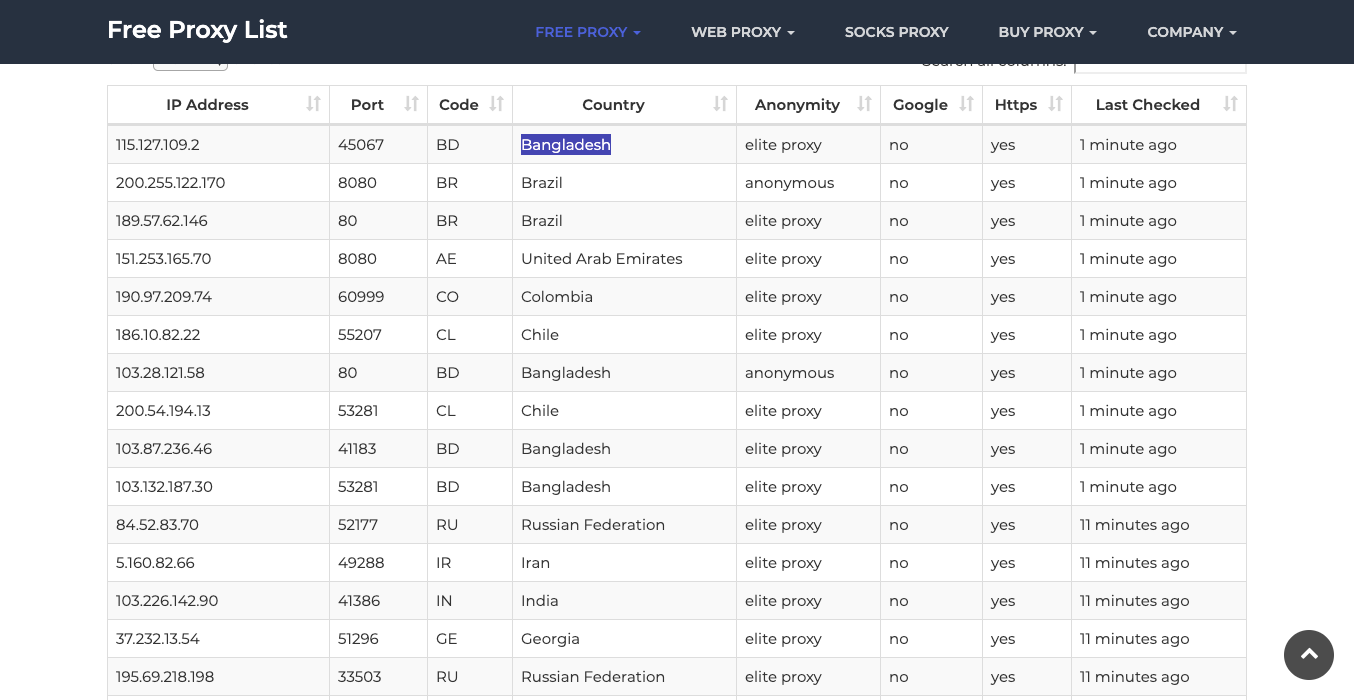

This is what the site looks like:

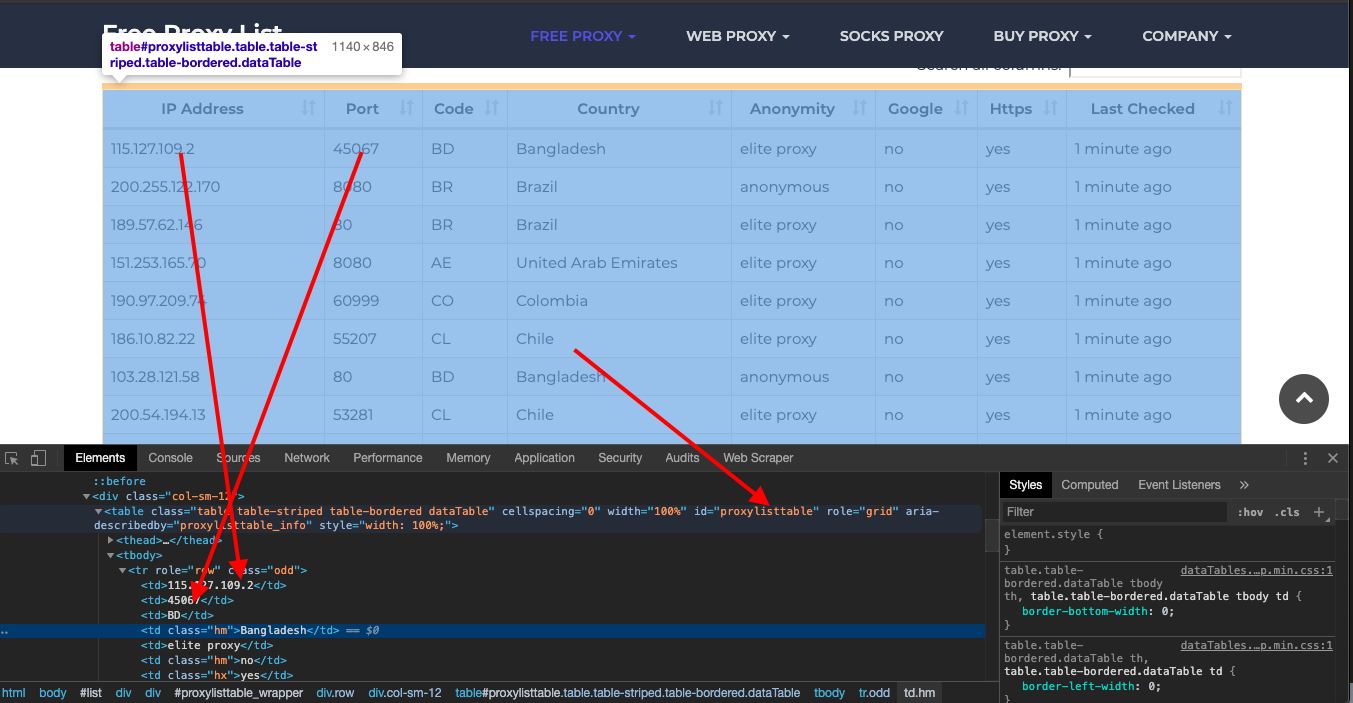

If you check the HTML using the inspect tool, you will see that the full content is encapsulated in a table with the id proxylisttable.

The IP and port are the first and second elements in each row.

The code in the sections below selects the table, iterates over its rows, and pulls the first and second cells out of each row.

Setup

First, make sure you have SBT installed and create a new Scala project:

sbt new scala/scala-seed.g8

Add the ScalaJS dependencies:

libraryDependencies += "org.scala-js" %%% "scalajs-dom" % "1.1.0"

Also add the upickle dependency for JSON handling:

libraryDependencies += "com.lihaoyi" %%% "upickle" % "1.3.11"
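The `%%%` operator in the two lines above is provided by the sbt-scalajs plugin, so the build needs that plugin enabled before the dependencies resolve. A minimal sketch of the two build files follows; the plugin version is an assumption and should be replaced with whichever 1.x release you actually use.

```scala
// project/plugins.sbt
// Assumption: any recent sbt-scalajs 1.x release should work here.
addSbtPlugin("org.scala-js" % "sbt-scalajs" % "1.5.1")

// build.sbt
enablePlugins(ScalaJSPlugin)

// Emit a runnable main module instead of a library
scalaJSUseMainModuleInitializer := true

libraryDependencies ++= Seq(
  "org.scala-js" %%% "scalajs-dom" % "1.1.0",
  "com.lihaoyi"  %%% "upickle"     % "1.3.11"
)
```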

Fetching Proxies

Let's define a simple function to fetch the HTML from the proxy site:

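import org.scalajs.dom

import org.scalajs.dom.ext._

import upickle.default._

import scala.concurrent.Future

import scala.concurrent.ExecutionContext.Implicits.global

// Ajax.get is asynchronous and yields a Future[XMLHttpRequest],

// so the response body is exposed as a Future[String].

def fetchProxies(): Future[String] = {

val url = "https://sslproxies.org/"

dom.ext.Ajax.get(url).map(_.responseText)

}

This uses the ScalaJS Ajax helper to send a GET request and returns a Future that completes with the HTML response. When the code runs in a browser, the request is also subject to the browser's cross-origin rules.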

Parsing the Proxies

Next we can parse the HTML to extract the proxies. The proxies are in a table, so we can use ScalaJS DOM APIs to find the rows:

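import org.scalajs.dom

import org.scalajs.dom.ext._

def parseProxies(html: String): List[Map[String, String]] = {

// Parse the HTML string into a detached document

val doc = new dom.DOMParser().parseFromString(html, "text/html")

val rows = doc.getElementById("proxylisttable").getElementsByTagName("tr")

rows.toList.flatMap { row =>

// Header rows contain only th cells, so they yield no td cells and are skipped

val cells = row.getElementsByTagName("td")

if (cells.length >= 2) Some(Map("ip" -> cells(0).textContent.trim, "port" -> cells(1).textContent.trim)) else None

}

}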

This finds the table, gets the rows, and extracts the IP and port for each row.
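Because the DOM APIs are only available in a JavaScript runtime, it can help to check the row-extraction logic against a plain string first. The following is a minimal sketch, not the approach used in this tutorial: it swaps DOM traversal for a regular expression and assumes each data row starts with two `td` cells holding an IPv4 address and a numeric port. `ProxyRowParser` is a hypothetical name.

```scala
object ProxyRowParser {
  // Matches a row whose first two cells are an IPv4 address and a numeric port.
  // Header rows use th cells, so they never match.
  private val RowPattern =
    """<tr[^>]*>\s*<td[^>]*>\s*(\d{1,3}(?:\.\d{1,3}){3})\s*</td>\s*<td[^>]*>\s*(\d{1,5})\s*</td>""".r

  def parse(html: String): List[Map[String, String]] =
    RowPattern.findAllMatchIn(html).map { m =>
      Map("ip" -> m.group(1), "port" -> m.group(2))
    }.toList
}
```

A regular expression is brittle against markup changes, so this is best kept as a quick check rather than a replacement for the DOM-based parser.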

Putting It Together

We can wrap this in a function to fetch and parse the proxies:

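import scala.util.Random

import scala.concurrent.Future

import scala.concurrent.ExecutionContext.Implicits.global

def getProxies(): Future[List[Map[String, String]]] = {

// fetchProxies() is asynchronous, so parse inside the Future

fetchProxies().map(parseProxies)

}

def randomProxy(): Future[Option[Map[String, String]]] = {

getProxies().map { proxies =>

// Random.nextInt(0) throws, so an empty list yields None

if (proxies.isEmpty) None else Some(proxies(Random.nextInt(proxies.length)))

}

}

To use a proxy:

randomProxy().foreach {

case Some(proxy) =>

// proxy hostname and proxy port

println(s"${proxy("ip")}:${proxy("port")}")

case None =>

println("No proxies available")

}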
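Note that `randomProxy()` calls `getProxies()`, so every pick refetches the page, and an empty list needs handling because `Random.nextInt(0)` throws. One way to address both is to keep the last fetched list in memory and pick from that. The sketch below assumes the same `Map[String, String]` shape for a proxy; `ProxyPool` and its methods are hypothetical names, not part of the code above.

```scala
import scala.util.Random

// Holds the most recently fetched proxies so that picking one does not trigger a fetch.
final class ProxyPool(random: Random = new Random()) {
  @volatile private var proxies: Vector[Map[String, String]] = Vector.empty

  // Replace the pool contents, for example after each scheduled fetch.
  def update(fresh: Seq[Map[String, String]]): Unit =
    proxies = fresh.toVector

  def size: Int = proxies.size

  // Returns None instead of throwing when no proxies are loaded.
  def pick(): Option[Map[String, String]] = {
    val current = proxies
    if (current.isEmpty) None
    else Some(current(random.nextInt(current.length)))
  }
}
```

With this in place, the periodic fetch would call `update` and request code would call `pick()`, which keeps the number of hits on the proxy list site independent of the number of outgoing requests.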

Scheduling Fetches

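To populate proxies periodically, we can use a scheduling library such as Akka Streams, which runs on the JVM:

import akka.actor.ActorSystem

import akka.stream.scaladsl.Source

import scala.concurrent.duration._

implicit val system: ActorSystem = ActorSystem()

// Emit a tick immediately and then every 5 minutes

val fetchProxySource = Source.tick(0.seconds, 5.minutes, "Fetch")

// getProxies() returns a Future, which is what mapAsync expects

fetchProxySource.mapAsync(1)(_ => getProxies()).runForeach { proxies =>

// save proxies

println(s"Fetched ${proxies.length} proxies")

}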

This fetches fresh proxies every 5 minutes.
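If pulling in Akka only for a timer feels heavy, the JDK scheduler can provide the same periodic trigger on the JVM. The following is a sketch of that alternative, not the Akka approach shown above; `PeriodicRefresh` is a hypothetical helper, and the task passed in stands for whatever fetches and stores the proxies.

```scala
import java.util.concurrent.{Executors, ScheduledExecutorService, TimeUnit}
import scala.concurrent.duration._

object PeriodicRefresh {
  // Runs `task` immediately and then once per `interval` on a single background thread.
  def start(interval: FiniteDuration)(task: () => Unit): ScheduledExecutorService = {
    val scheduler = Executors.newSingleThreadScheduledExecutor()
    val runnable = new Runnable {
      // Catch failures here: an uncaught exception would cancel all later runs.
      def run(): Unit =
        try task()
        catch { case e: Exception => println(s"Refresh failed: ${e.getMessage}") }
    }
    scheduler.scheduleAtFixedRate(runnable, 0L, interval.toMillis, TimeUnit.MILLISECONDS)
    scheduler
  }
}
```

A call such as `PeriodicRefresh.start(5.minutes)(() => refresh())`, where `refresh` is your own function, would mirror the 5 minute interval used above. Calling `shutdown()` on the returned scheduler stops the refresh.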

Summary

This gives a simple Scala proxy rotator using ScalaJS for web scraping. For production use cases, a dedicated proxy service may be better for reliability and scale. But for small projects, this provides a handy way to avoid blocks with free proxy rotations.

The full code is:

import org.scalajs.dom

import org.scalajs.dom.ext._

import upickle.default._

import scala.util.Random

import scala.concurrent.Future

import scala.concurrent.ExecutionContext.Implicits.global

import scala.concurrent.duration._

import akka.actor.ActorSystem

import akka.stream.scaladsl.Source

object ProxyRotator {

implicit val system: ActorSystem = ActorSystem()

// Ajax.get is asynchronous, so the HTML is exposed as a Future[String]

def fetchProxies(): Future[String] = {

val url = "https://sslproxies.org/"

dom.ext.Ajax.get(url).map(_.responseText)

}

def parseProxies(html: String): List[Map[String, String]] = {

// Parse the HTML string into a detached document

val doc = new dom.DOMParser().parseFromString(html, "text/html")

val rows = doc.getElementById("proxylisttable").getElementsByTagName("tr")

rows.toList.flatMap { row =>

// Header rows contain only th cells, so they yield no td cells and are skipped

val cells = row.getElementsByTagName("td")

if (cells.length >= 2) Some(Map("ip" -> cells(0).textContent.trim, "port" -> cells(1).textContent.trim)) else None

}

}

def getProxies(): Future[List[Map[String, String]]] = {

fetchProxies().map(parseProxies)

}

def randomProxy(): Future[Option[Map[String, String]]] = {

getProxies().map { proxies =>

// Random.nextInt(0) throws, so an empty list yields None

if (proxies.isEmpty) None else Some(proxies(Random.nextInt(proxies.length)))

}

}

// Emit a tick immediately and then every 5 minutes

val fetchProxySource = Source.tick(0.seconds, 5.minutes, "Fetch")

fetchProxySource.mapAsync(1)(_ => getProxies()).runForeach { proxies =>

// save proxies

println(s"Fetched ${proxies.length} proxies")

}

}

If you want to use this in production and want to scale to thousands of links, then you will find that many free proxies won't hold up under the speed and reliability requirements. In this scenario, using a rotating proxy service to rotate IPs is almost a must.

Otherwise, you tend to get IP blocked a lot by automatic location, usage, and bot detection algorithms.

Our rotating proxy server Proxies API provides a simple API that can solve all IP Blocking problems instantly.

Hundreds of our customers have successfully solved the headache of IP blocks with a simple API.

The whole service can be accessed from any programming language through a single API call like the one below.

curl "http://api.proxiesapi.com/?key=API_KEY&url=https://example.com"
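From Scala on the JVM, the same request URL can be assembled with a small helper. This is only a sketch: the endpoint and the `key` and `url` parameters come from the curl example, while `ProxiesApiUrl` is a hypothetical name. Percent-encoding the target is an assumption made here so that targets carrying their own query strings survive intact; the curl example passes the target unencoded.

```scala
import java.net.URLEncoder
import java.nio.charset.StandardCharsets

object ProxiesApiUrl {
  private val Endpoint = "http://api.proxiesapi.com/"

  // Builds the request URL with both query parameters percent-encoded.
  def build(apiKey: String, target: String): String = {
    def enc(s: String): String = URLEncoder.encode(s, StandardCharsets.UTF_8.name())
    s"$Endpoint?key=${enc(apiKey)}&url=${enc(target)}"
  }
}
```

The resulting string can then be passed to whichever HTTP client the project already uses.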

We have a running offer of 1000 API calls completely free. Register and get your free API Key here.