In this beginner-friendly guide, we will go through how to use Scala to scrape all images from a website. We won't go into web scraping theory -- instead we'll focus on understanding and running real-world code that accomplishes this specific task of extracting images.

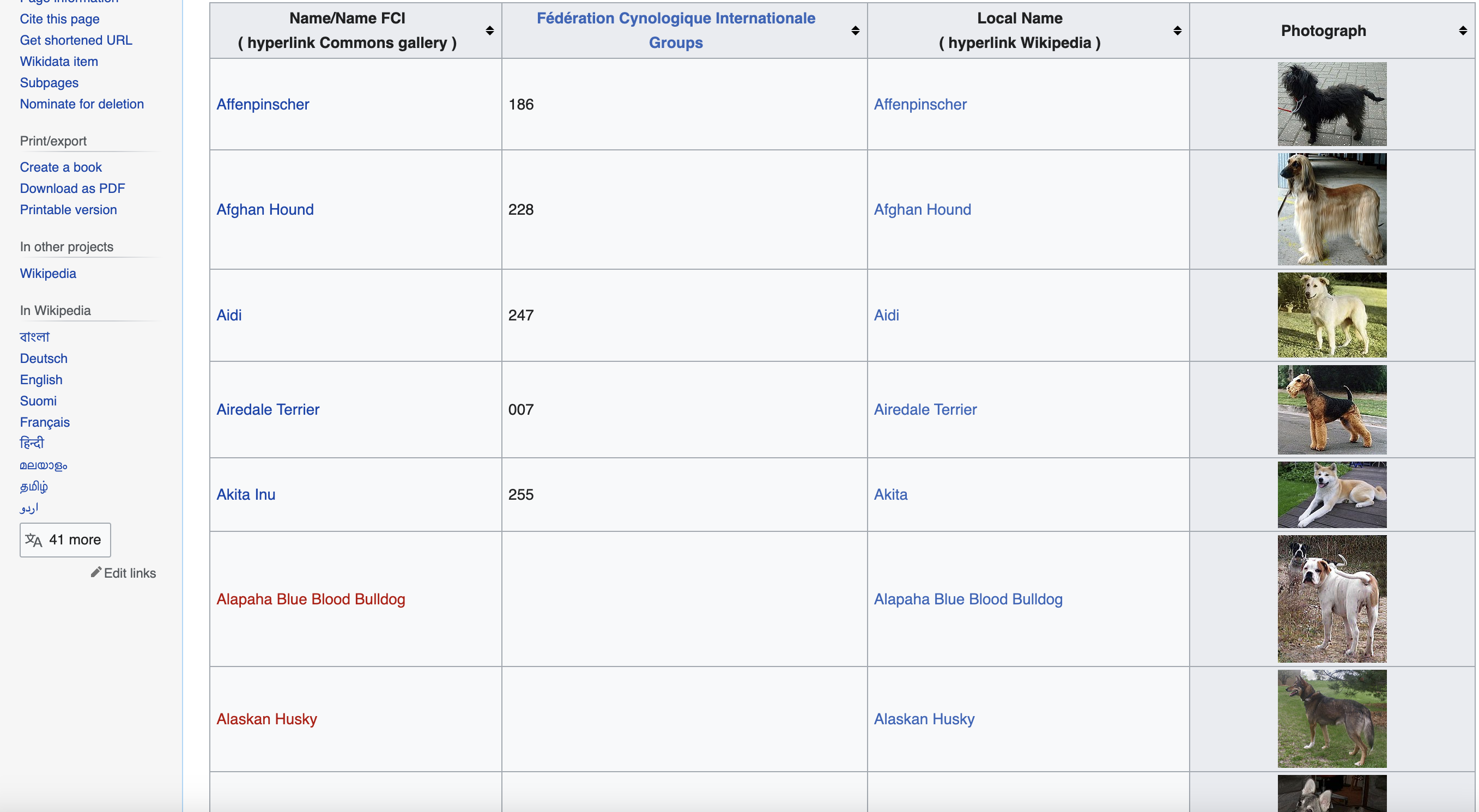

This is the page we are talking about. We will be scraping images of dog breeds from Wikipedia.

Prerequisites

To follow along, you'll need Java and SBT installed on your system. Then add the Jsoup dependency to your SBT build file:

libraryDependencies += "org.jsoup" % "jsoup" % "1.13.1"

SBT fetches the library the next time you compile or run the project.

Now let's dive into the code!

Setup

We start by importing the required Scala and Java libraries:

import java.io.{File, FileOutputStream}

import org.jsoup.Jsoup

import scala.collection.JavaConverters._

The key one is Jsoup which we'll use for scraping the web page.

Next, we define the URL of the Wikipedia page we want to scrape:

val url = "https://commons.wikimedia.org/wiki/List_of_dog_breeds"

We also set a user agent header to simulate a real browser request:

val userAgent =

"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36"

Making the HTTP Request

With the imports and variables defined, we can now use Jsoup to connect to the URL and get the HTML document:

val doc = Jsoup.connect(url).userAgent(userAgent).get()

The userAgent() method sets the custom header we defined earlier.
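The request above throws an exception if the URL is malformed, the connection times out, or the server answers with an error status. As a minimal sketch, assuming we would rather get a value back than an uncaught exception, the call can be wrapped in scala.util.Try. The PageFetcher object, the fetch name, and the 10-second timeout are illustrative choices and not part of the original code.

```scala
import org.jsoup.Jsoup
import org.jsoup.nodes.Document
import scala.util.Try

object PageFetcher {
  // Hypothetical helper: a bad URL, a timeout, or an HTTP error status
  // becomes a Failure instead of an uncaught exception.
  def fetch(url: String, userAgent: String, timeoutMs: Int = 10000): Try[Document] =
    Try {
      Jsoup.connect(url)
        .userAgent(userAgent)
        .timeout(timeoutMs) // timeout in milliseconds
        .get()
    }
}
```

The caller can then pattern match on Success(doc) and Failure(error) and decide whether to retry, log, or stop.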

Selecting the Table Element

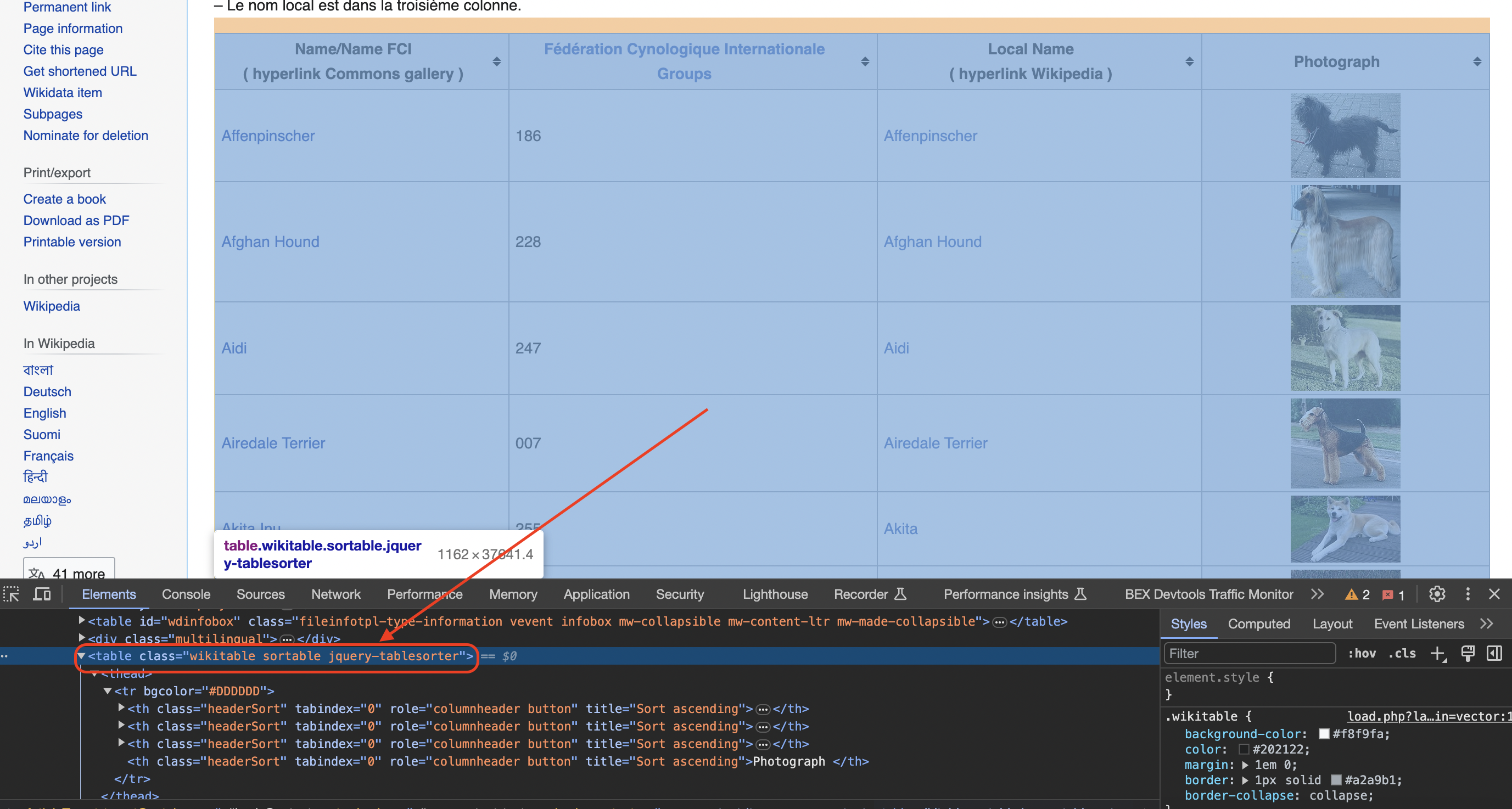

Inspecting the page

If you inspect the page with Chrome's developer tools, you can see that the data sits in a table element with the classes wikitable and sortable.

We use a CSS selector to select the table with class names "wikitable" and "sortable":

val table = doc.select("table.wikitable.sortable").first()

The select() method finds all matching elements, and we take the first one using .first().
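One thing to keep in mind is that first() returns null when nothing matches, which would crash the scraper later on. Here is a small sketch, assuming we prefer an Option over a null check. TableFinder and findTable are hypothetical names, and the HTML is passed in as a string so the selector can be tried without a network call.

```scala
import org.jsoup.Jsoup
import org.jsoup.nodes.Element

object TableFinder {
  // Returns the first table carrying both classes, or None when nothing matches.
  def findTable(html: String): Option[Element] =
    Option(Jsoup.parse(html).select("table.wikitable.sortable").first())
}
```

A table that only has the wikitable class is not matched, because the selector requires both class names on the same element.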

Initializing Storage Lists

With the target table element selected, we initialize some mutable Scala lists to store the extracted data:

val names = scala.collection.mutable.ListBuffer.empty[String]

val groups = scala.collection.mutable.ListBuffer.empty[String]

val localNames = scala.collection.mutable.ListBuffer.empty[String]

val photographs = scala.collection.mutable.ListBuffer.empty[String]

One list per data field we will scrape.
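Four parallel lists work, but they only stay aligned if every row appends to all of them. As an alternative sketch (not what the code in this guide does), each row could become one record. The Breed case class and the BreedRecords helper are hypothetical names introduced here for illustration.

```scala
// One record per table row instead of four parallel lists.
final case class Breed(name: String, group: String, localName: String, photograph: String)

object BreedRecords {
  // Keeps only rows with exactly four fields and trims each value.
  def fromRows(rows: Seq[Seq[String]]): List[Breed] =
    rows.collect {
      case Seq(n, g, l, p) => Breed(n.trim, g.trim, l.trim, p.trim)
    }.toList
}
```

With this shape, a row that is missing a field is dropped as a whole rather than leaving the lists with different lengths.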

Creating Image Folder

Since we want to download all images from the page, we need a folder to save them:

val folder = new File("dog_images")

if (!folder.exists()) folder.mkdirs()

This creates a "dog_images" folder if it doesn't already exist.
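The same step can also be written with java.nio.file, which folds the existence check and the creation into one call. This is a sketch of an alternative, not a required change. ImageFolder and ensure are hypothetical names.

```scala
import java.nio.file.{Files, Path, Paths}

object ImageFolder {
  // Creates the directory and any missing parents. If the directory already
  // exists the call does nothing; it throws if the path exists as a regular file.
  def ensure(dir: String): Path = Files.createDirectories(Paths.get(dir))
}
```

Calling ImageFolder.ensure("dog_images") twice in a row is safe, which is handy when the scraper is run repeatedly.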

Extracting Data from Table Rows

Now for the most complex part - extracting data within each row of the selected table element.

We loop through the rows, skipping the header:

for (row <- table.select("tr").asScala.drop(1)) {

// extract data from each row

}

Inside the loop, we select all "td" (normal cells) and "th" (header cells) within each row:

val columns = row.select("td, th").asScala.toSeq

And check that 4 columns were found - otherwise skip the row:

if (columns.size == 4) {

// extract data from each column

}
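To see the row loop and the four-column check in isolation, here is a small sketch that runs against an HTML string instead of the live page. RowReader and cellTexts are hypothetical names, and the sample markup in the usage note is made up for illustration.

```scala
import org.jsoup.Jsoup
import scala.collection.JavaConverters._

object RowReader {
  // Returns the cell texts of every non-header row that has exactly four cells.
  def cellTexts(tableHtml: String): List[List[String]] = {
    // drop(1) skips the header row, as in the loop above
    val rows = Jsoup.parse(tableHtml).select("tr").asScala.drop(1)
    rows
      .map(row => row.select("td, th").asScala.map(_.text().trim).toList)
      .filter(_.size == 4)
      .toList
  }
}
```

Given a table with a header row, one complete row, and one row holding a single merged cell, only the complete row should come back. Rows with merged or missing cells are skipped by the size check.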

Extracting the Name

To extract the breed name, we select the anchor tag inside the first column:

val name = columns(0).select("a").text().trim

The select("a") call finds the anchor tag inside the cell, and text() returns its link text.

We add the scraped name string to the respective list:

names += name

Extracting the Group

The group name exists directly as text in the second column. We extract it through:

val group = columns(1).text().trim

groups += group

Extracting the Local Name

Next, we check if there is a span tag in the third column:

val spanTag = columns(2).select("span").first()

val localName = if (spanTag != null) spanTag.text().trim else ""

If found, we get the text inside the span. Otherwise we fall back to an empty string.

And add to the localNames list:

localNames += localName
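The null check can also be expressed with Option, which some Scala developers find easier to read. The sketch below assumes the cell's HTML is available as a string. LocalNames and localName are hypothetical names used only for this example.

```scala
import org.jsoup.Jsoup

object LocalNames {
  // Text of the first span in the fragment, or "" when there is no span.
  def localName(cellHtml: String): String =
    Option(Jsoup.parseBodyFragment(cellHtml).select("span").first())
      .map(_.text().trim)
      .getOrElse("")
}
```

The behavior matches the if/else version: a missing span yields an empty string rather than a NullPointerException.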

Extracting the Image

Finally, we check if there is an img tag in the fourth column:

val imgTag = columns(3).select("img").first()

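val photograph = if (imgTag != null) imgTag.absUrl("src") else ""

If an image exists, we read its src attribute to get the image URL. Wikipedia pages often give image sources as protocol-relative URLs (starting with //), so we use absUrl("src") to resolve the value against the page address into a full URL that Jsoup.connect() can fetch.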
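A src value is not always a complete URL. It can be relative or start with //, and Jsoup.connect() rejects both. Jsoup's absUrl resolves the attribute against the document's base URI. The sketch below shows this on a made-up fragment. ImageUrls, firstImageUrl, and the example.org addresses are assumptions for illustration.

```scala
import org.jsoup.Jsoup

object ImageUrls {
  // Absolute URL of the first image in the HTML, or "" when there is none.
  def firstImageUrl(html: String, baseUri: String): String = {
    val img = Jsoup.parse(html, baseUri).select("img").first()
    if (img != null) img.absUrl("src") else ""
  }
}
```

When the page is fetched with Jsoup.connect(url).get(), the base URI is set to the page address automatically, so absUrl works without passing it by hand.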

Downloading and Saving Images

For each found image, we download and save it to our folder:

if (!photograph.isEmpty) {

val imageStream = Jsoup.connect(photograph)

.ignoreContentType(true)

.execute()

.bodyAsBytes()

val imageFile = new File(folder, s"${name}.jpg")

val fos = new FileOutputStream(imageFile)

fos.write(imageStream)

fos.close()

}

We use the breed name in each image filename.

And add the photograph URL to its list:

photographs += photograph

This whole process repeats for every row, extracting and storing data from each column.
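Two details of the download step are worth a closer look: breed names can contain characters that are not valid in file names, and not every image is a JPEG. The sketch below shows one possible way to handle both, using only the standard library. ImageFiles and its three methods are hypothetical helpers, and the character whitelist is an assumption you may want to loosen.

```scala
import java.nio.file.{Files, Path}

object ImageFiles {
  // Collapse every run of characters outside letters, digits, dot,
  // underscore, and hyphen into a single underscore.
  def safeFileName(name: String): String =
    name.trim.replaceAll("[^A-Za-z0-9._-]+", "_")

  // Take the extension from the last path segment of the URL,
  // ignoring any query string or fragment; fall back to the default.
  def extensionOf(url: String, default: String = "jpg"): String = {
    val path = url.takeWhile(c => c != '?' && c != '#')
    val lastSegment = path.substring(path.lastIndexOf('/') + 1)
    val dot = lastSegment.lastIndexOf('.')
    if (dot >= 0 && dot < lastSegment.length - 1)
      lastSegment.substring(dot + 1).toLowerCase
    else default
  }

  // Files.write opens, writes, and closes the file in one call.
  def save(folder: Path, name: String, url: String, bytes: Array[Byte]): Path =
    Files.write(folder.resolve(s"${safeFileName(name)}.${extensionOf(url)}"), bytes)
}
```

In the scraper, the bytes returned by bodyAsBytes() could be passed straight to ImageFiles.save together with the breed name and the image URL.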

Printing the Extracted Data

Finally, we can print out or process the scraped data as needed:

for (i <- names.indices) {

println(s"Name: ${names(i)}")

println(s"FCI Group: ${groups(i)}")

println(s"Local Name: ${localNames(i)}")

println(s"Photograph: ${photographs(i)}")

println()

}
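Indexing four lists by position works as long as they have the same length. As a sketch of an alternative, the lists can be zipped together so each breed is handled as one tuple. BreedPrinter, lines, and the single-line output format are hypothetical choices for this example.

```scala
object BreedPrinter {
  // Builds one summary line per breed by zipping the four lists together.
  // zip stops at the shortest list, so a length mismatch cannot cause an
  // index-out-of-bounds error.
  def lines(
      names: Seq[String],
      groups: Seq[String],
      localNames: Seq[String],
      photographs: Seq[String]
  ): Seq[String] =
    names.zip(groups).zip(localNames).zip(photographs).map {
      case (((name, group), localName), photo) =>
        s"Name: $name | FCI Group: $group | Local Name: $localName | Photograph: $photo"
    }
}
```

Printing is then a single call: BreedPrinter.lines(names.toList, groups.toList, localNames.toList, photographs.toList).foreach(println).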

So in summary, we:

- Made an HTTP request for the web page HTML

- Selected the data table element

- Initialized lists to store extracted data

- Looped through rows, extracting information

- Downloaded and saved images

- Printed/processed scraped data

Full Code

import java.io.{File, FileOutputStream}

import org.jsoup.Jsoup

import scala.collection.JavaConverters._

object DogBreedsScraper {

def main(args: Array[String]): Unit = {

// URL of the Wikipedia page

val url = "https://commons.wikimedia.org/wiki/List_of_dog_breeds"

// Define a user-agent header to simulate a browser request

val userAgent =

"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36"

// Send an HTTP GET request to the URL with the headers

val doc = Jsoup.connect(url).userAgent(userAgent).get()

// Find the table with class 'wikitable sortable'

val table = doc.select("table.wikitable.sortable").first()

// Initialize lists to store the data

val names = scala.collection.mutable.ListBuffer.empty[String]

val groups = scala.collection.mutable.ListBuffer.empty[String]

val localNames = scala.collection.mutable.ListBuffer.empty[String]

val photographs = scala.collection.mutable.ListBuffer.empty[String]

// Create a folder to save the images

val folder = new File("dog_images")

if (!folder.exists()) folder.mkdirs()

// Iterate through rows in the table (skip the header row)

for (row <- table.select("tr").asScala.drop(1)) {

val columns = row.select("td, th").asScala.toSeq

if (columns.size == 4) {

// Extract data from each column

val name = columns(0).select("a").text().trim

val group = columns(1).text().trim

// Check if the third column contains a span element

val spanTag = columns(2).select("span").first()

val localName = if (spanTag != null) spanTag.text().trim else ""

// Check for the existence of an image tag within the fourth column

val imgTag = columns(3).select("img").first()

// absUrl resolves relative and protocol-relative src values into a full URL
val photograph = if (imgTag != null) imgTag.absUrl("src") else ""

// Download the image and save it to the folder

if (!photograph.isEmpty) {

val imageStream = Jsoup.connect(photograph).ignoreContentType(true).execute().bodyAsBytes()

val imageFile = new File(folder, s"${name}.jpg")

val fos = new FileOutputStream(imageFile)

fos.write(imageStream)

fos.close()

}

// Append data to respective lists

names += name

groups += group

localNames += localName

photographs += photograph

}

}

// Print or process the extracted data as needed

for (i <- names.indices) {

println(s"Name: ${names(i)}")

println(s"FCI Group: ${groups(i)}")

println(s"Local Name: ${localNames(i)}")

println(s"Photograph: ${photographs(i)}")

println()

}

}

}

In more advanced implementations, you may even need to rotate the User-Agent string so the website can't tell it's the same browser.
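A minimal sketch of User-Agent rotation, assuming a small hand-maintained pool is enough for your use case. The UserAgents object, the pick method, and the extra strings in the pool are illustrative. Real projects usually keep the pool up to date with current browser versions.

```scala
import scala.util.Random

object UserAgents {
  // Example strings only; swap in current browser versions for real use.
  val pool: Vector[String] = Vector(
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/14.0 Safari/605.1.15",
    "Mozilla/5.0 (X11; Linux x86_64; rv:109.0) Gecko/20100101 Firefox/115.0"
  )

  // Picks one entry at random for each request.
  def pick(random: Random = new Random()): String =
    pool(random.nextInt(pool.length))
}
```

Each request would then call Jsoup.connect(url).userAgent(UserAgents.pick()).get() instead of reusing a single fixed string.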

As you get a little more advanced, you will realize that the server can simply block your IP, ignoring all your other tricks. This is a bummer, and it is where most web crawling projects fail.

Overcoming IP Blocks

Investing in a private rotating proxy service like Proxies API can often make the difference between a headache-free web scraping project that gets the job done consistently and one that never really works.

Plus, with our running offer of 1000 free API calls, you have almost nothing to lose by trying our rotating proxy and comparing notes. It only takes one line of integration, so it's hardly disruptive.

Our rotating proxy server Proxies API provides a simple API that can solve all IP Blocking problems instantly.

Hundreds of our customers have successfully solved the headache of IP blocks with a simple API.

The whole thing can be accessed by a simple API like below in any programming language.

curl "http://api.proxiesapi.com/?key=API_KEY&url=https://example.com"

We have a running offer of 1000 API calls completely free. Register and get your free API Key here.
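For reference, the curl call shown above can be mirrored in Scala by building the same URL and handing it to Jsoup. This is only a sketch: ProxiedUrl and build are hypothetical names, and URL-encoding the target address is an assumption about what the endpoint accepts, since the curl example passes it unencoded.

```scala
import java.net.URLEncoder

object ProxiedUrl {
  // Builds the request URL in the shape shown in the curl example.
  // Assumption: the endpoint accepts a percent-encoded target URL.
  def build(apiKey: String, target: String): String =
    s"http://api.proxiesapi.com/?key=$apiKey&url=${URLEncoder.encode(target, "UTF-8")}"
}
```

The scraper would then fetch ProxiedUrl.build(apiKey, url) with Jsoup.connect(...).get() in place of the direct page URL.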