Web scraping is the process of extracting data from websites automatically. This is useful when the site you want data from doesn't have an official API or database export option. For learning purposes, websites like Hacker News make great scraping targets since they have well-structured data.

In this beginner tutorial, we'll walk through a C# program that scrapes news articles from the Hacker News homepage, from sending the HTTP request to parsing the HTML and printing the results.

By the end, you'll have a solid grasp of how web scrapers work!

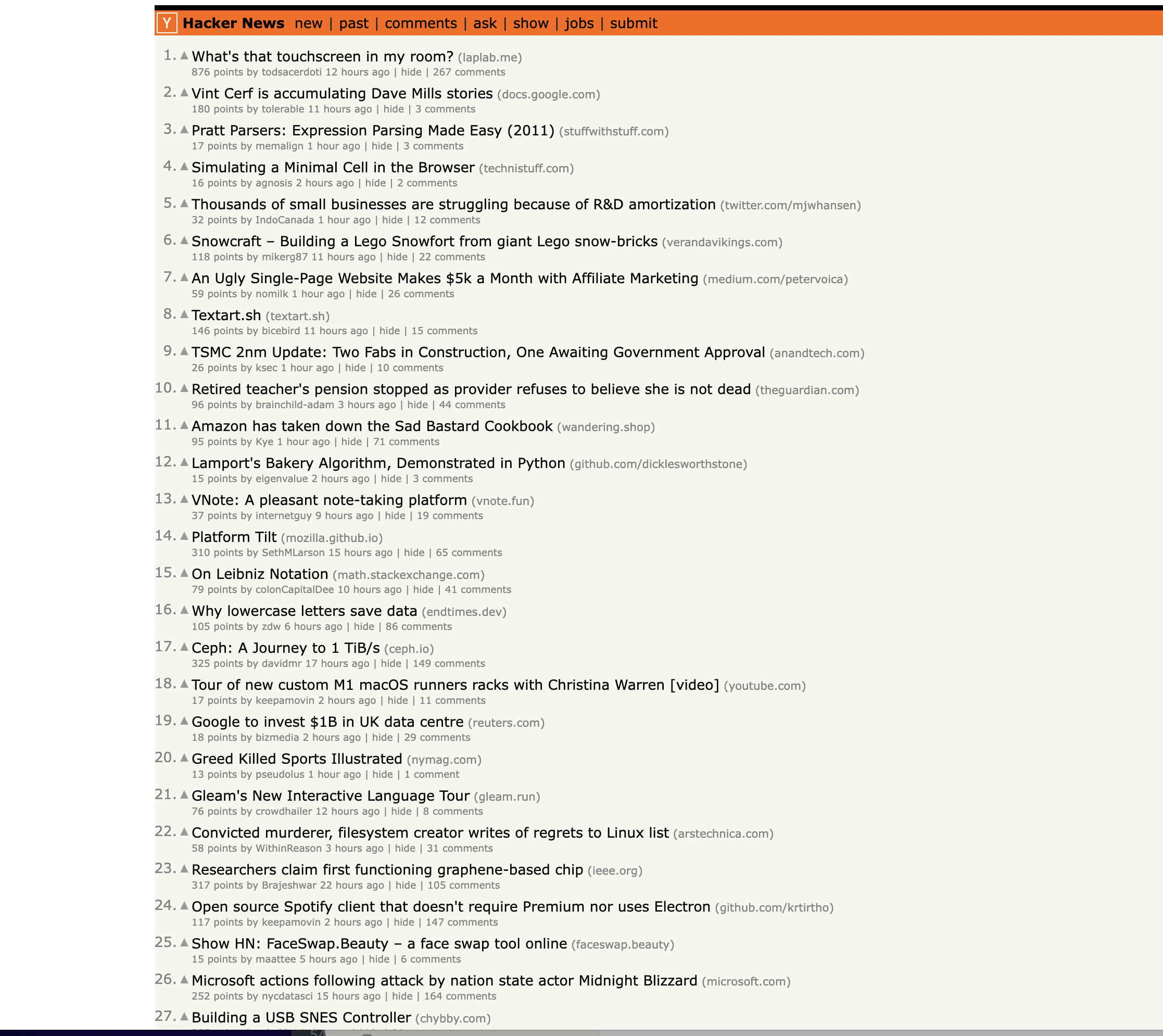

This is the page we are talking about…

Introduction to Hacker News

Hacker News is a popular social news site focused on computer science and entrepreneurship. Users can submit links to articles, upvote submissions they like, and comment.

Our program will scrape the front page, extracting details such as article titles, scores, and authors. The goal is to demonstrate web scraping concepts, so we simply print the data rather than process it further.

With some small tweaks, you could adapt this scraper to any site that uses tables/list layouts like Hacker News.

Let's get started!

Namespaces for HTTP Requests and HTML Parsing

C# using directives let our code use classes defined in other namespaces without fully qualifying their names. This scraper relies on two namespaces:

using System.Net.Http;

using HtmlAgilityPack;

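The System.Net.Http namespace provides HttpClient for sending HTTP requests. HtmlAgilityPack is a third-party library, installed as a NuGet package, that provides the HtmlDocument and HtmlNode classes for parsing HTML.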

The Program Class

Our code goes inside a class named Program:

class Program

{

// Code here

}

And specifically in the Main method:

static async Task Main(string[] args)

{

// Code here

}

This is a common pattern for C# command line programs. Code goes inside Main, which runs when the program starts.

Defining the URL

First we set the target URL to scrape:

string url = "https://news.ycombinator.com/";

This is the homepage URL for Hacker News. We could scrape any webpage by changing this URL.

Initializing the HttpClient

using (HttpClient client = new HttpClient())

{

// Send request

}

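This initializes an HttpClient instance, which we use to send HTTP requests.

Using a using block ensures the client is disposed once we are done with it.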

Sending the GET Request

To fetch the web page HTML, we use GetAsync:

HttpResponseMessage response = await client.GetAsync(url);

We await the call because GetAsync is asynchronous; it completes with an HttpResponseMessage.

Checking for Success

It's good practice to verify requests succeeded before processing further:

if (response.IsSuccessStatusCode)

{

// Process response

}

else

{

Console.WriteLine("Request failed!");

}

The IsSuccessStatusCode property is true when the HTTP status code is in the 200-299 range. For a normal page load we expect 200 OK.

This avoids errors from trying to parse failed responses.
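As an alternative to branching on IsSuccessStatusCode, a response also offers EnsureSuccessStatusCode, which throws an HttpRequestException for non-success status codes. The sketch below assumes you prefer exception handling over an if/else; the FetchDemo class and FetchAsync helper are names made up for this illustration and are not part of the tutorial's program.

```csharp
using System;
using System.Net.Http;
using System.Threading.Tasks;

static class FetchDemo
{
    // Hypothetical helper: returns the page HTML, or null if the request fails.
    public static async Task<string> FetchAsync(HttpClient client, string url)
    {
        try
        {
            using (HttpResponseMessage response = await client.GetAsync(url))
            {
                // Throws HttpRequestException when the status code is outside 200-299.
                response.EnsureSuccessStatusCode();
                return await response.Content.ReadAsStringAsync();
            }
        }
        catch (HttpRequestException ex)
        {
            // Also reached for connection-level failures such as a refused connection.
            Console.WriteLine("Request failed: " + ex.Message);
            return null;
        }
    }
}
```

From Main you could call it as string html = await FetchDemo.FetchAsync(client, url); and skip parsing when the result is null.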

Reading the Response HTML

To access the HTML body, we extract the content as a string:

string html = await response.Content.ReadAsStringAsync();

The ReadAsStringAsync method reads the response body asynchronously and returns it as a string.

Loading the HTML into HtmlDocument

Before we can query elements, the HTML needs parsing into a DOM document object:

HtmlDocument doc = new HtmlDocument();

doc.LoadHtml(html);
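To see the parsing step in isolation, it can help to load a small inline HTML string instead of a live page. The sketch below is a minimal illustration under that assumption: the ParseDemo class, its method names, and the sample markup are hypothetical. It also shows a detail worth knowing about HtmlAgilityPack: SelectNodes and SelectSingleNode return null when nothing matches, so results should be checked before use.

```csharp
using System;
using HtmlAgilityPack;

static class ParseDemo
{
    // Returns the text of the first link in the fragment, or "" if there is none.
    public static string FirstLinkText(string html)
    {
        var doc = new HtmlDocument();
        doc.LoadHtml(html);

        // SelectSingleNode returns null when no node matches the XPath query.
        HtmlNode link = doc.DocumentNode.SelectSingleNode("//a");
        return link != null ? link.InnerText : "";
    }

    // Counts the tr elements in the fragment.
    public static int CountRows(string html)
    {
        var doc = new HtmlDocument();
        doc.LoadHtml(html);

        // SelectNodes returns null (not an empty collection) when nothing matches.
        HtmlNodeCollection rows = doc.DocumentNode.SelectNodes("//tr");
        return rows != null ? rows.Count : 0;
    }
}
```

For example, ParseDemo.CountRows("<p>no table here</p>") returns 0 rather than throwing, because the null result is handled explicitly.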

Finding Row Nodes

Inspecting the page

You can see that each item is housed inside a tr tag with the class athing.

With the DOM loaded, we can use XPath queries to select elements:

var rows = doc.DocumentNode

.SelectNodes("//tr");

This selects all tr nodes within the document.

Tracking State as We Iterate Rows

Because upcoming logic depends on which row is current, we define flags to track state:

HtmlNode currentArticle = null;

string currentRowType = null;

These will be updated on each loop iteration.

Looping Through Rows

We can now iterate through the rows, identifying what each one contains:

foreach (var row in rows)

{

// Check row type

// If article row:

// Extract article data from row & details row

// Update current states

}

The approach is to check each row's type, extract data once an article row and its details row have both been seen, and then update the state variables. This takes advantage of the well-structured table layout.

Identifying Article Rows

We first check if a row contains an article using a class attribute:

if (row.GetAttributeValue("class", "") == "athing")

{

// This is an article row

currentArticle = row;

currentRowType = "article";

}

Article rows have the CSS class athing.

Identifying Details Rows

The details about an article are in the next row:

else if (currentRowType == "article")

{

// This is a details row

// Extract details

currentArticle = null;

currentRowType = null;

}

If the state shows that the previous row was an article, the current row holds the additional details.

We null the state variables once handled to prepare for the next potential article.

Extracting Article Data

Inside the article block, we can pluck data using more specific XPath queries.

Title

var titleElem = currentArticle.SelectSingleNode(".//span[@class='title']");

if (titleElem != null)

{

string title = titleElem.Element("a").InnerText;

}

Here we select the span with the class title and read the inner text of its anchor child. This will have the article title.

URL

Similarly for URL:

string articleUrl = titleElem.Element("a").GetAttributeValue("href", "");

Instead of text, we get the anchor's href attribute. The variable is named articleUrl because url already holds the page address.

Points, Author, Other Data

The general pattern is to select the element with an XPath query and then read its inner text or one of its attributes. For example:

// Select span containing score as inner text (row is the details row)

var pointsSpan = row.SelectSingleNode(".//span[@class='score']");

string points = pointsSpan.InnerText;

// Select anchor containing the author name, then get its text

var authorAnchor = row.SelectSingleNode(".//a[@class='hnuser']");

string author = authorAnchor.InnerText;

// Other examples (commentsElem and elem are nodes selected the same way)

string comments = commentsElem.InnerText;

string timestamp = elem.GetAttributeValue("title", "");

The key is uniquely identifying elements using classes, attribute filters, nested selection, and so on.

Printing Extracted Data

With data parsed from each article row and details row, we can print the results:

Title: {title}

URL: {articleUrl}

Points: {points}

Author: {author}

...

This outputs the scraped data to the console, and the loop then moves on to the next article.

Next Steps

With this foundation, you could adapt the scraper to other sites with similar layouts. The concepts learned here apply to almost any web scraping project!

This is great as a learning exercise, but a scraper like this is prone to getting blocked because it uses a single IP. If you need to handle thousands of fetches every day, using a professional rotating proxy service to rotate IPs is almost a must. Otherwise, you tend to get IP blocked a lot by automatic location, usage, and bot detection algorithms.

Our rotating proxy server, Proxies API, provides a simple API that can solve IP blocking problems instantly. Hundreds of our customers have successfully solved the headache of IP blocks with it. The whole thing can be accessed by a simple API call like the one below from any programming language:

curl "http://api.proxiesapi.com/?key=API_KEY&url=https://example.com"

In fact, you don't even have to take the pain of loading Puppeteer, as we render JavaScript behind the scenes; you can just get the data and parse it in any language like Node or PHP, or with any framework like Scrapy or Nutch. In all these cases you can just call the URL with render support like so:

curl "http://api.proxiesapi.com/?key=API_KEY&render=true&url=https://example.com"

We have a running offer of 1000 API calls completely free. Register and get your free API Key.

Full Hacker News Scraper Code

Here is the complete code for reference:

using System;

using System.Linq;

using System.Net.Http;

using HtmlAgilityPack;

class Program

{

static async System.Threading.Tasks.Task Main(string[] args)

{

// Define the URL of the Hacker News homepage

string url = "https://news.ycombinator.com/";

// Initialize HttpClient

using (HttpClient client = new HttpClient())

{

// Send a GET request to the URL

HttpResponseMessage response = await client.GetAsync(url);

// Check if the request was successful (status code in the 200-299 range)

if (response.IsSuccessStatusCode)

{

// Read the HTML content of the page

string htmlContent = await response.Content.ReadAsStringAsync();

// Load the HTML content into an HtmlDocument

HtmlDocument doc = new HtmlDocument();

doc.LoadHtml(htmlContent);

// Find all rows in the table (SelectNodes returns null when nothing matches)

var rows = doc.DocumentNode.SelectNodes("//tr") ?? Enumerable.Empty<HtmlNode>();

// Initialize variables to keep track of the current article and row type

HtmlNode currentArticle = null;

string currentRowType = null;

// Iterate through the rows to scrape articles

foreach (var row in rows)

{

if (row.GetAttributeValue("class", "") == "athing")

{

// This is an article row

currentArticle = row;

currentRowType = "article";

}

else if (currentRowType == "article")

{

// This is the details row

if (currentArticle != null)

{

// Extract information from the current article and details row

var titleElem = currentArticle.SelectSingleNode(".//span[@class='title']");

if (titleElem != null)

{

var titleLink = titleElem.Element("a");

string articleTitle = titleLink != null ? titleLink.InnerText : "";

string articleUrl = titleLink != null ? titleLink.GetAttributeValue("href", "") : "";

var subtext = row.SelectSingleNode(".//td[@class='subtext']");

// Some rows may lack a score or author, so guard against null nodes

string points = subtext?.SelectSingleNode(".//span[@class='score']")?.InnerText ?? "";

string author = subtext?.SelectSingleNode(".//a[@class='hnuser']")?.InnerText ?? "";

string timestamp = subtext?.SelectSingleNode(".//span[@class='age']")?.GetAttributeValue("title", "") ?? "";

var commentsElem = subtext?.SelectSingleNode(".//a[contains(text(),'comments')]");

string comments = commentsElem != null ? commentsElem.InnerText : "0";

// Print the extracted information

Console.WriteLine("Title: " + articleTitle);

Console.WriteLine("URL: " + articleUrl);

Console.WriteLine("Points: " + points);

Console.WriteLine("Author: " + author);

Console.WriteLine("Timestamp: " + timestamp);

Console.WriteLine("Comments: " + comments);

Console.WriteLine(new string('-', 50)); // Separating articles

}

}

// Reset the current article and row type

currentArticle = null;

currentRowType = null;

}

else if (row.GetAttributeValue("style", "") == "height:5px")

{

// This is the spacer row, skip it

continue;

}

}

}

else

{

Console.WriteLine("Failed to retrieve the page. Status code: " + (int)response.StatusCode);

}

}

}

}
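The program above keeps the points and comments values as display strings. If you want numbers instead, a small helper can read the leading digits. This is only a sketch: it assumes the text starts with the number, as in "123 points", which is an assumption about the page's format rather than something the site guarantees, and the ScoreParser name is hypothetical.

```csharp
using System;
using System.Linq;

static class ScoreParser
{
    // Reads the leading run of ASCII digits from text such as "123 points".
    // Returns 0 when the text is empty or does not start with a digit.
    public static int ParseLeadingInt(string text)
    {
        if (string.IsNullOrEmpty(text))
        {
            return 0;
        }

        // Keep characters only while they are digits, then stop at the first non-digit.
        string digits = new string(text.Trim().TakeWhile(c => c >= '0' && c <= '9').ToArray());

        int value;
        return int.TryParse(digits, out value) ? value : 0;
    }
}
```

For instance, ScoreParser.ParseLeadingInt(points) could replace the raw points string when you want to sort or filter articles by score.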
