Let's take a practical look at how to scrape articles from the Hacker News homepage using PHP and the Simple HTML DOM library. We'll walk through the code step-by-step to understand how it works under the hood.

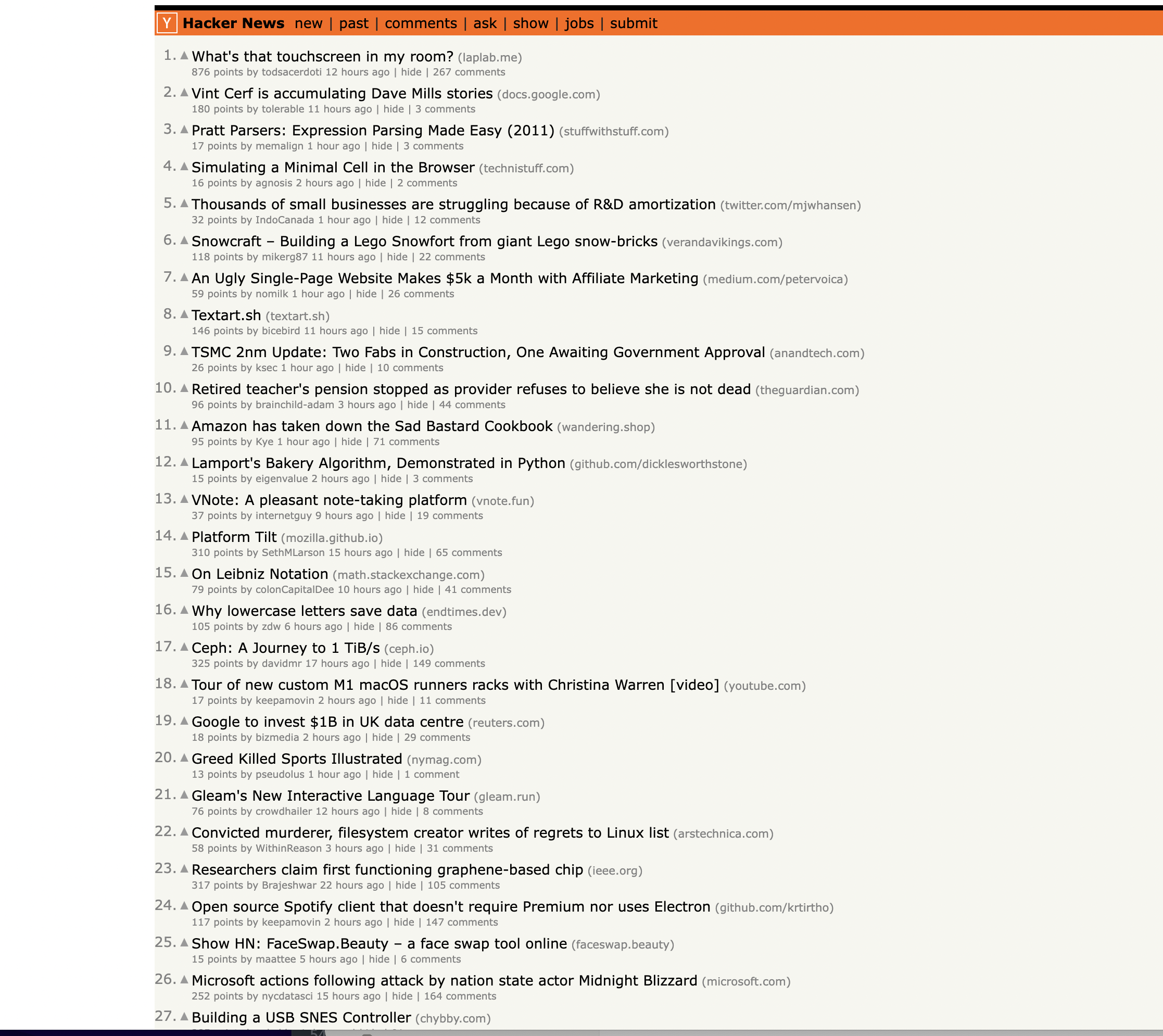

This is the page we are talking about…

Overview

The goal here is to extract key data from Hacker News article listings, like the title, URL, points, author, etc. To achieve this, we:

- Import SimpleHTMLDom for parsing

- Define the Hacker News homepage URL

- Send a GET request and check if it succeeded

- Find all table rows on the page

- Iterate through rows, identifying article rows

- For each article row, use selectors to extract data into variables

- Print the scraped data

Now let's dive into the details...

Importing the HTML Parser

We'll use SimpleHTMLDom to parse and traverse the HN homepage:

    require_once('simple_html_dom.php');

This imports the library so we can instantiate HTML DOM objects.

Defining the URL

We'll scrape the default Hacker News homepage at:

    $url = "https://news.ycombinator.com/";

This URL can be configured to any page you want to scrape.
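As a small illustration of configuring the URL, the sketch below builds the address for a given listing page. The helper name hn_page_url is hypothetical, and the p query parameter is an assumption based on the "More" link at the bottom of the Hacker News homepage.

```php
<?php
// Hypothetical helper: build the URL for a given page of the HN front page.
// Assumes later pages are addressed with a "p" query parameter.
function hn_page_url(int $page = 1): string
{
    $base = "https://news.ycombinator.com/";

    // The first page is simply the homepage itself.
    if ($page <= 1) {
        return $base;
    }

    return $base . "?" . http_build_query(["p" => $page]);
}

echo hn_page_url(2), "\n";
```

The returned string can be assigned to $url in place of the hard-coded homepage address.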

Sending the GET Request

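To download the page content, we send a GET request and store the result in $response:

    $response = file_get_html($url);

The file_get_html() function downloads the page and returns a parsed DOM object, or false if the page could not be loaded.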

Checking for Success

We verify that the request succeeded before trying to parse the page content:

    if ($response) {
        // scraping code here
    } else {
        echo "Failed to retrieve the page.";
    }

This is good practice to avoid errors when network issues or other problems occur.
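One way to make this check more explicit is to fetch the raw HTML yourself and treat any failure as null. The sketch below is an illustration under stated assumptions rather than the method used in this article: it relies on PHP's built-in file_get_contents() with a stream context instead of file_get_html(), and the helper name fetch_page and the User-Agent string are hypothetical.

```php
<?php
// Hypothetical helper (not part of Simple HTML DOM): fetch a URL as a raw
// string and return null on any failure instead of emitting warnings.
function fetch_page(string $url, int $timeoutSeconds = 10): ?string
{
    $context = stream_context_create([
        "http" => [
            "method"  => "GET",
            "timeout" => $timeoutSeconds,
            // Assumed User-Agent value; substitute your own.
            "header"  => "User-Agent: hn-scraper-example/0.1\r\n",
        ],
    ]);

    // @ suppresses the warning PHP raises when the request fails;
    // the false return value is checked instead.
    $body = @file_get_contents($url, false, $context);

    if ($body === false || $body === "") {
        return null;
    }

    return $body;
}
```

A call such as fetch_page($url) returns null when the download fails, so the same if/else check applies. The resulting string could then be handed to Simple HTML DOM's str_get_html() for parsing.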

Finding All Table Rows

Hacker News uses a table structure, so we first find all tr elements:

    $rows = $response->find("tr");

Inspecting the page, you can see that each item is housed inside a tr tag with the class athing. The call above locates the table rows so we can loop through them to isolate article rows.

Tracking State

As we iterate over rows, we need to track some state:

    $current_article = null;
    $current_row_type = null;

This state is used in the scraping logic to remember whether the previous row was an article row.

Looping Over Rows

We loop through rows to identify article rows and accompanying details:

    foreach ($rows as $row) {
        // scraping logic here
    }

The key parts happen inside this loop...

Identifying Article Rows

We first check if a row is an article using its class:

    if (in_array("athing", explode(" ", (string) $row->class))) {
        $current_article = $row;
        $current_row_type = "article";
    }

Article rows have the CSS class athing. The check looks for that class among the row's classes, so it still matches if the row carries additional classes.

Identifying Details Rows

If the previous row was an article, we know the next row contains article details:

    // continuing the if block above
    elseif ($current_row_type == "article") {
        // extract details here
        $current_article = null;
        $current_row_type = null;
    }

We reset the state afterward so subsequent rows are processed correctly.

Extracting Article Data

Once we reach the details row, we use selectors to extract information. The title and URL come from the article row saved in $current_article:

    $title_elem = $current_article->find("span.titleline", 0);
    $article_title = $title_elem->find("a", 0)->plaintext;
    $article_url = $title_elem->find("a", 0)->href;

Breaking this down:

- find("span.titleline", 0) returns the first span with the class titleline inside the article row

- find("a", 0) returns the first link inside that span

- plaintext gives the link text (the title) and href gives the link target (the URL)

Every data field is extracted the same way: locate the element with a selector, then read its plaintext or one of its attributes.

Preserving the exact selectors used is essential for the code to function.

The key things that might confuse beginners are...

Why Such Specific Selectors?

You may wonder why selectors like span.titleline and td.subtext are so specific. A row contains several links and cells, so a bare tag selector such as a could match the wrong element, while a class-qualified selector targets the intended one.

So detailed selectors make the scraper more robust and accurate.

Selector Order and Indexes

Selectors often stack together like:

    $elem = $row->find("x", 0)->find("y", 0);

The order and indexes matter. Here's what's happening:

- find("x", 0) returns the first x element inside the row

- find("y", 0) then searches only inside that x element and returns its first y element

This lets us drill down gradually in a nested structure to home in on the target element.

Printing Extracted Data

Finally, after extraction, back in the loop, we can print the article data:

    echo "Title: " . $article_title . "\n";
    echo "URL: " . $article_url . "\n";

Outputting each field lets us confirm that the data was scraped successfully.

Separating Articles

To visually separate article data, we add a divider:

    echo str_repeat("-", 50) . "\n";

The scraper will continue looping through and extracting all articles on the page.

And that's the overview of how this Hacker News scraping script works! Let's look at the full code now...

Full Code

Here is the complete code to scrape the Hacker News homepage:

    <?php
    // Include the SimpleHTMLDom library
    require_once('simple_html_dom.php');

    // Define the URL of the Hacker News homepage
    $url = "https://news.ycombinator.com/";

    // Send a GET request to the URL
    $response = file_get_html($url);

    // Check if the request was successful
    if ($response) {
        // Find all rows in the table
        $rows = $response->find("tr");

        // Initialize variables to keep track of the current article and row type
        $current_article = null;
        $current_row_type = null;

        // Iterate through the rows to scrape articles
        foreach ($rows as $row) {
            // Match "athing" among the row's classes so that additional
            // classes on the row do not break the check
            if (in_array("athing", explode(" ", (string) $row->class))) {
                // This is an article row
                $current_article = $row;
                $current_row_type = "article";
            } elseif ($current_row_type == "article") {
                // This is the details row
                if ($current_article) {
                    $title_elem = $current_article->find("span.titleline", 0);
                    $title_link = $title_elem ? $title_elem->find("a", 0) : null;
                    $subtext = $row->find("td.subtext", 0);

                    if ($title_link && $subtext) {
                        $article_title = $title_link->plaintext;
                        $article_url = $title_link->href;

                        // Some rows (for example job postings) have no score
                        // or author, so check each element before reading it
                        $score_elem = $subtext->find("span.score", 0);
                        $user_elem = $subtext->find("a.hnuser", 0);
                        $age_elem = $subtext->find("span.age", 0);

                        $points = $score_elem ? trim($score_elem->plaintext) : "0 points";
                        $author = $user_elem ? trim($user_elem->plaintext) : "";
                        $timestamp = $age_elem ? $age_elem->title : "";

                        // Look through every link in the subtext for the comments link
                        $comments = "0";
                        foreach ($subtext->find("a") as $element) {
                            if (strpos($element->plaintext, 'comment') !== false) {
                                $comments = trim($element->plaintext);
                                break;
                            }
                        }

                        // Print the extracted information
                        echo "Title: " . $article_title . "\n";
                        echo "URL: " . $article_url . "\n";
                        echo "Points: " . $points . "\n";
                        echo "Author: " . $author . "\n";
                        echo "Timestamp: " . $timestamp . "\n";
                        echo "Comments: " . $comments . "\n";
                        echo str_repeat("-", 50) . "\n"; // Separating articles
                    }
                }

                // Reset the current article and row type
                $current_article = null;
                $current_row_type = null;
            } elseif ($row->style == "height:5px") {
                // This is the spacer row, skip it
                continue;
            }
        }
    } else {
        echo "Failed to retrieve the page.";
    }
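The row-pairing logic in the script can be tried in isolation, without any HTML parser. The following is a minimal sketch under stated assumptions: the rows are hypothetical plain arrays standing in for parsed tr elements, and the function name pair_rows is made up for illustration.

```php
<?php
// Hypothetical stand-in for parsed table rows: each row is reduced to its
// class attribute and its text, so the sketch runs without an HTML parser.
$rows = [
    ["class" => "athing", "text" => "First story"],
    ["class" => "",       "text" => "120 points by alice"],
    ["class" => "spacer", "text" => ""],
    ["class" => "athing", "text" => "Second story"],
    ["class" => "",       "text" => "45 points by bob"],
    ["class" => "spacer", "text" => ""],
];

// Pair each article row with the row that immediately follows it.
function pair_rows(array $rows): array
{
    $pairs = [];
    $current_article = null;

    foreach ($rows as $row) {
        if ($row["class"] === "athing") {
            // Remember the article row until its details row arrives.
            $current_article = $row;
        } elseif ($current_article !== null) {
            // The row right after an article row holds its details.
            $pairs[] = [
                "title"   => $current_article["text"],
                "details" => $row["text"],
            ];
            // Reset so spacer rows are not mistaken for details rows.
            $current_article = null;
        }
    }

    return $pairs;
}

foreach (pair_rows($rows) as $pair) {
    echo $pair["title"], " | ", $pair["details"], "\n";
}
```

Because the state is reset after each details row, the spacer rows fall through both branches and are ignored, which mirrors what the full script does.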

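The script prints points and comments as text such as "123 points". If you want numbers instead, for sorting or filtering, a small helper can pull out the leading integer. This is a sketch only; the function name leading_int is hypothetical and not part of the original script.

```php
<?php
// Hypothetical helper: pull the leading integer out of strings such as
// "123 points" or "45 comments". Returns 0 when the text does not start
// with digits (for example a "discuss" link on a story without comments).
function leading_int(string $text): int
{
    // Match the digits only and ignore whatever separator and label follow.
    if (preg_match('/^\s*(\d+)/', $text, $matches) === 1) {
        return (int) $matches[1];
    }

    return 0;
}

echo leading_int("123 points"), "\n";
echo leading_int("45 comments"), "\n";
echo leading_int("discuss"), "\n";
```

Applied to the script's $points and $comments variables, this would give integers that can be compared directly.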