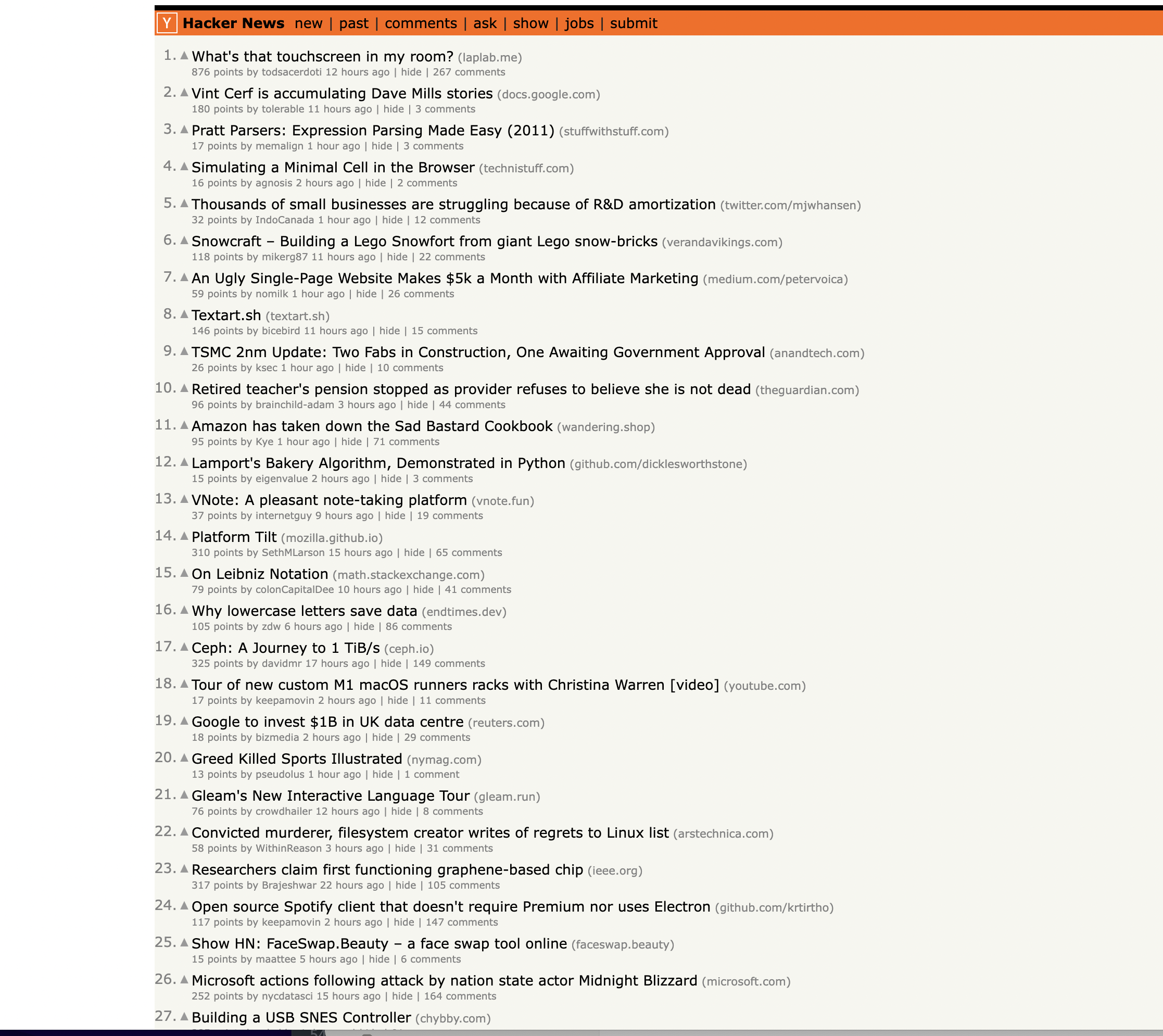

Web scraping is a technique for extracting information from websites. In this beginner Scala tutorial, we'll walk through code that scrapes article data from the Hacker News homepage using the Jsoup Java library.

This is the page we are talking about…

Installation

First, ensure Jsoup is installed by adding the following Maven dependency to your project:

```xml
<dependency>
    <groupId>org.jsoup</groupId>
    <artifactId>jsoup</artifactId>
    <version>1.13.1</version>
</dependency>
```
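This is a Scala project, so you may be building with sbt rather than Maven. Assuming an sbt build, the same dependency (same version as above) would be declared in `build.sbt` like this:

```scala
// build.sbt: Jsoup is a plain Java library, so a single % is used (no Scala version suffix)
libraryDependencies += "org.jsoup" % "jsoup" % "1.13.1"
```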

Overview

Here is the full code we'll be going through:

```scala
import org.jsoup.Jsoup
// Jsoup's Elements is a java.util.ArrayList, so convert it before using a Scala for loop
// (Scala 2.13/3; on Scala 2.12 use scala.collection.JavaConverters._ instead)
import scala.jdk.CollectionConverters._

object HackerNewsScraper {
  def main(args: Array[String]): Unit = {
    // Define the URL of the Hacker News homepage
    val url = "https://news.ycombinator.com/"

    // Send a GET request to the URL and parse the HTML content
    val doc = Jsoup.connect(url).get()

    // Find all rows in the table
    val rows = doc.select("tr")

    // Initialize variables to keep track of the current article and row type
    var currentArticle: org.jsoup.nodes.Element = null
    var currentRowType: String = null

    // Iterate through the rows to scrape articles
    for (row <- rows.asScala) {
      if (row.hasClass("athing")) {
        // This is an article row
        currentArticle = row
        currentRowType = "article"
      } else if (currentRowType == "article") {
        // This is the details row
        if (currentArticle != null) {
          // Extract information from the current article and details row
          val titleElem = currentArticle.select("span.titleline")
          // Take only the first <a>: a second one (the site domain link) may follow it
          val titleLink = titleElem.select("a").first() // null if there is no anchor
          if (titleLink != null) {
            val articleTitle = titleLink.text() // Get the text of the anchor element
            val articleUrl = titleLink.attr("href") // Get the href attribute of the anchor element
            val subtext = row.select("td.subtext")
            val points = subtext.select("span.score").text()
            val author = subtext.select("a.hnuser").text()
            val timestamp = subtext.select("span.age").attr("title")
            val commentsElem = subtext.select("a:contains(comments)")
            val comments = if (!commentsElem.isEmpty) commentsElem.text() else "0"

            // Print the extracted information
            println("Title: " + articleTitle)
            println("URL: " + articleUrl)
            println("Points: " + points)
            println("Author: " + author)
            println("Timestamp: " + timestamp)
            println("Comments: " + comments)
            println("-" * 50) // Separating articles
          }
        }
        // Reset the current article and row type
        currentArticle = null
        currentRowType = null
      } else if (row.attr("style") == "height:5px") {
        // This is the spacer row, skip it (do nothing)
      }
    }
  }
}
```
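Jsoup's `Elements` type extends `java.util.ArrayList<Element>`, so it is a Java collection rather than a Scala one. Iterating it with a Scala `for` loop therefore needs a conversion. The minimal sketch below shows the standard converters on a plain `java.util.ArrayList`, so it runs without Jsoup. It assumes Scala 2.13 or 3, where the converters live in `scala.jdk.CollectionConverters`. `ConvertersDemo` is a hypothetical name.

```scala
import scala.jdk.CollectionConverters._

object ConvertersDemo {
  // Build a Java list, the same kind of collection Jsoup's Elements is
  def javaList(): java.util.ArrayList[String] = {
    val list = new java.util.ArrayList[String]()
    list.add("row 1")
    list.add("row 2")
    list
  }

  // asScala wraps the Java list as a Scala mutable.Buffer view (no copy),
  // which is what makes the for/yield below compile
  def upperCased(list: java.util.ArrayList[String]): List[String] =
    (for (item <- list.asScala) yield item.toUpperCase).toList
}
```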

In the rest of this article, we'll break down what each section is doing.

Importing Jsoup

We first import Jsoup, which is the Java library that allows us to make HTTP requests and parse HTML:

```scala
import org.jsoup.Jsoup
```
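Besides fetching pages, Jsoup can parse an HTML string directly with `Jsoup.parse`, which is handy for trying out selectors offline. In the minimal sketch below, the `titles` helper and the sample HTML are made up for illustration. The child combinator in `span.titleline > a` keeps only anchors that are direct children of the title span.

```scala
import org.jsoup.Jsoup
import scala.jdk.CollectionConverters._

object ParseStringDemo {
  // Hypothetical helper: text of every <a> that is a direct child of span.titleline
  def titles(html: String): List[String] = {
    val doc = Jsoup.parse(html) // no network request; just builds a Document
    doc.select("span.titleline > a").asScala.map(_.text()).toList
  }
}
```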

Defining the Entry Point

Next, we define a Scala object with a `main` method, which serves as the program's entry point:

```scala
object HackerNewsScraper {
  def main(args: Array[String]): Unit = {
    // scraping code goes here
  }
}
```

Getting the Hacker News Page HTML

Inside the main method, we start by defining the URL of the Hacker News homepage:

```scala
val url = "https://news.ycombinator.com/"
```

We then use Jsoup to send a GET request to this URL and parse the returned HTML:

```scala
val doc = Jsoup.connect(url).get()
```

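The `get()` call performs the request and returns a Jsoup `Document`. We store it in `doc` and query it with CSS selectors in the following steps.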
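`Jsoup.connect` returns a `Connection` that can be configured before `get()` sends the request. The sketch below sets a user-agent string and a timeout. The values are arbitrary examples, not requirements of Hacker News, and `ConnectionDemo` is a hypothetical name.

```scala
import org.jsoup.{Connection, Jsoup}

object ConnectionDemo {
  // Build (but do not execute) a configured request; calling .get() on it fetches the page
  def configured(url: String): Connection =
    Jsoup.connect(url)
      .userAgent("Mozilla/5.0 (compatible; HackerNewsScraper/0.1)") // illustrative value
      .timeout(10000) // milliseconds
}
```

A live fetch would then be `ConnectionDemo.configured("https://news.ycombinator.com/").get()`.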

Selecting Rows

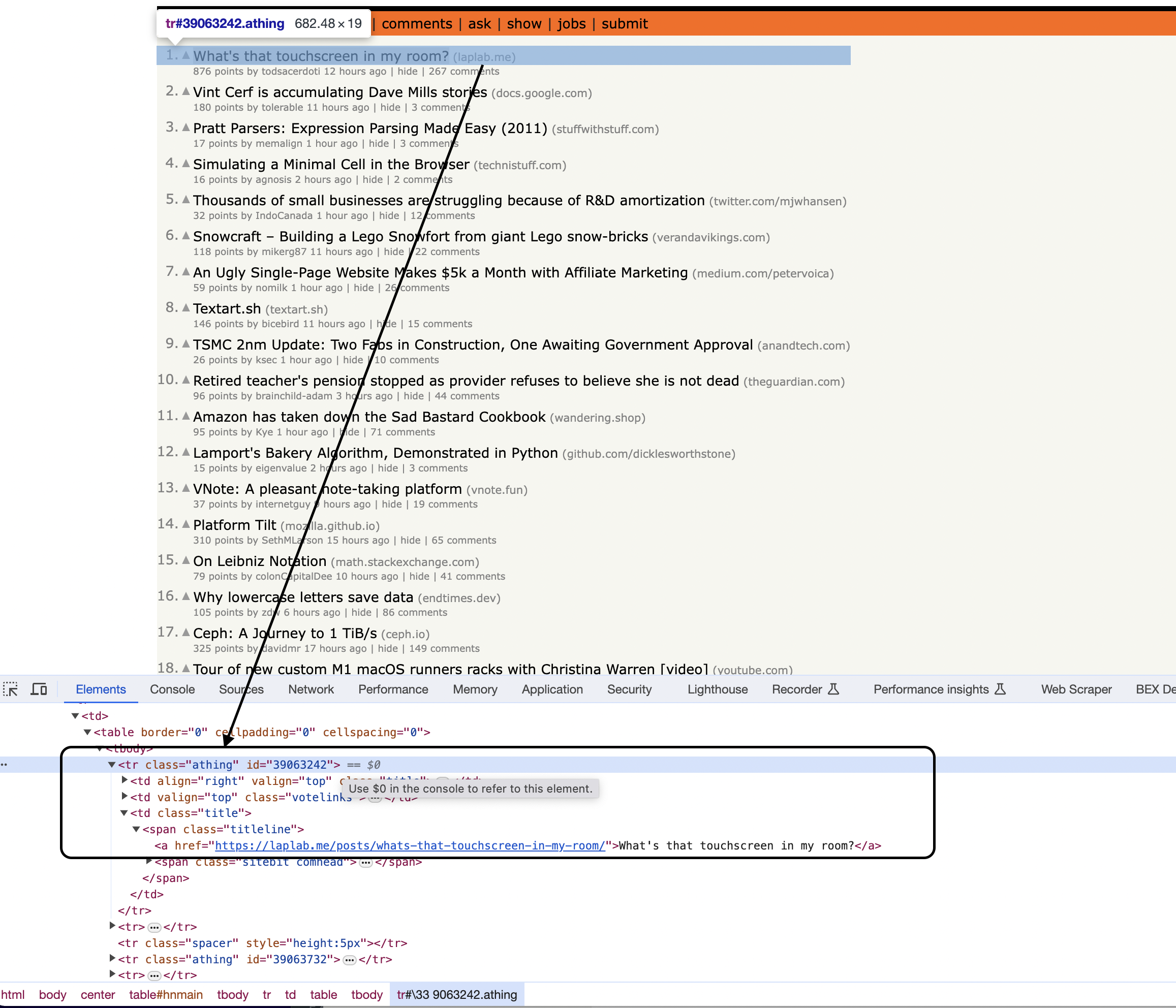

Inspecting the page

You can notice that the items are housed inside a `<tr>` tag with the class `athing`.

With the HTML document loaded, we next find all `<tr>` table rows on the page using a CSS selector:

```scala
val rows = doc.select("tr")
```

This gives us a list of elements, one for each `<tr>`, to iterate through later.

Tracking State

We initialize two variables to keep track of state as we parse each row:

```scala
var currentArticle: org.jsoup.nodes.Element = null
var currentRowType: String = null
```

Iterating Rows

We loop through each row to identify article content. Jsoup's `Elements` is a Java `ArrayList`, so the loop converts it with `asScala` (from `import scala.jdk.CollectionConverters._`) before Scala's `for` syntax can iterate over it:

```scala
for (row <- rows.asScala) {
  // parsing logic
}
```

Inside this loop is the main parsing logic.

Identifying Article Rows

We first check if a row has the `athing` class to determine if it is an article:

```scala
if (row.hasClass("athing")) {
  currentArticle = row
  currentRowType = "article"
}
```

If so, we save the row to `currentArticle` and set `currentRowType` to `"article"`.

Identifying Details Rows

Next, we check whether the previous row type was `"article"`, which indicates that this is a details row:

```scala
} else if (currentRowType == "article") {
  // extract details for current article
}
```

Extracting Article Data

Inside the details row, we can now extract information because both rows are available: the article row in `currentArticle` and the details row in `row`:

```scala
// Extract information from current article and details row
val titleElem = currentArticle.select("span.titleline")
val titleLink = titleElem.select("a").first() // null if there is no anchor
if (titleLink != null) {
  val articleTitle = titleLink.text()
  val articleUrl = titleLink.attr("href")
  // extract other fields like points, author etc.
  println(articleTitle)
  println(articleUrl)
}
```

Let's focus on how the title field is extracted. First, we select the `span.titleline` element from the article row:

```scala
val titleElem = currentArticle.select("span.titleline")
```

Notice here we are using a CSS selector again, targeting the element by class name. If `titleElem` contains a link, we take its first `<a>` and read the text:

```scala
val titleLink = titleElem.select("a").first()
val articleTitle = titleLink.text()
```

This drills into `titleElem` to find the `<a>` tag and extract its text. We take only the first anchor because `span.titleline` can also contain a second link showing the story's domain. Calling `text()` on all matched anchors would join both texts together.

The URL extraction works similarly, reading the `href` attribute of the same anchor instead of its text. Finally, we print the information:

```scala
println("Title: " + articleTitle)
println("URL: " + articleUrl)
// etc.
```

The other fields (points, author, timestamp, and comments) are extracted the same way. Each goes into its own variable, read from the details row's `td.subtext` cell with selectors such as `span.score`, `a.hnuser`, and `span.age`.

Resetting State

After extracting article details, we reset `currentArticle` and `currentRowType` so the next article row starts from a clean state:

```scala
currentArticle = null
currentRowType = null
```

Skipping Spacer Rows

We also include logic to detect and skip spacer rows:

```scala
} else if (row.attr("style") == "height:5px") {
  // skip spacer row
}
```

This checks for the `style` attribute value (`height:5px`) that marks spacer rows and does nothing for them.

And that covers the full logic to scrape article information from Hacker News using Jsoup selectors!

Full Code

Here is the complete runnable code again for reference:

```scala
import org.jsoup.Jsoup
// Jsoup's Elements is a java.util.ArrayList, so convert it before using a Scala for loop
// (Scala 2.13/3; on Scala 2.12 use scala.collection.JavaConverters._ instead)
import scala.jdk.CollectionConverters._

object HackerNewsScraper {
  def main(args: Array[String]): Unit = {
    // Define the URL of the Hacker News homepage
    val url = "https://news.ycombinator.com/"

    // Send a GET request to the URL and parse the HTML content
    val doc = Jsoup.connect(url).get()

    // Find all rows in the table
    val rows = doc.select("tr")

    // Initialize variables to keep track of the current article and row type
    var currentArticle: org.jsoup.nodes.Element = null
    var currentRowType: String = null

    // Iterate through the rows to scrape articles
    for (row <- rows.asScala) {
      if (row.hasClass("athing")) {
        // This is an article row
        currentArticle = row
        currentRowType = "article"
      } else if (currentRowType == "article") {
        // This is the details row
        if (currentArticle != null) {
          // Extract information from the current article and details row
          val titleElem = currentArticle.select("span.titleline")
          // Take only the first <a>: a second one (the site domain link) may follow it
          val titleLink = titleElem.select("a").first() // null if there is no anchor
          if (titleLink != null) {
            val articleTitle = titleLink.text() // Get the text of the anchor element
            val articleUrl = titleLink.attr("href") // Get the href attribute of the anchor element
            val subtext = row.select("td.subtext")
            val points = subtext.select("span.score").text()
            val author = subtext.select("a.hnuser").text()
            val timestamp = subtext.select("span.age").attr("title")
            val commentsElem = subtext.select("a:contains(comments)")
            val comments = if (!commentsElem.isEmpty) commentsElem.text() else "0"

            // Print the extracted information
            println("Title: " + articleTitle)
            println("URL: " + articleUrl)
            println("Points: " + points)
            println("Author: " + author)
            println("Timestamp: " + timestamp)
            println("Comments: " + comments)
            println("-" * 50) // Separating articles
          }
        }
        // Reset the current article and row type
        currentArticle = null
        currentRowType = null
      } else if (row.attr("style") == "height:5px") {
        // This is the spacer row, skip it (do nothing)
      }
    }
  }
}
```
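Printing results works for a first run, but it makes the parsing logic hard to test. One possible refactor is sketched below. The `Story` case class, `StoryParser`, `parseHtml`, and `leadingInt` are hypothetical names, not part of the original code.

- **Returns values:** it keeps the same athing/details-row pairing but returns results instead of printing them. Like the original loop, it assumes each details row immediately follows its `athing` row.
- **Parses integers:** it turns strings such as "42 points" and "7 comments" into integers. Text without digits, such as the "discuss" link shown on stories with no comments, counts as 0.
- **Runs offline:** it can be exercised on a static HTML string through `Jsoup.parse` instead of a live request.

```scala
import org.jsoup.Jsoup
import org.jsoup.nodes.Document
import scala.jdk.CollectionConverters._

// Hypothetical value type for one story (not part of the original tutorial)
final case class Story(title: String, url: String, points: Int, author: String, comments: Int)

object StoryParser {
  // First run of digits in strings like "42 points" or "7 comments";
  // text without digits (e.g. "discuss", or "" when the element is absent) becomes 0
  def leadingInt(s: String): Int =
    "\\d+".r.findFirstIn(s).map(_.toInt).getOrElse(0)

  // Pair each "athing" row with the row right after it, as the tutorial loop does
  def parse(doc: Document): List[Story] = {
    val rows = doc.select("tr").asScala.toList
    rows.zip(rows.drop(1)).flatMap { case (article, details) =>
      if (!article.hasClass("athing")) None
      else
        // "> a" keeps only the story link, not the nested site-domain link
        Option(article.select("span.titleline > a").first()).map { link =>
          val subtext = details.select("td.subtext")
          Story(
            title = link.text(),
            url = link.attr("href"),
            points = leadingInt(subtext.select("span.score").text()),
            author = subtext.select("a.hnuser").text(),
            comments = leadingInt(subtext.select("a:contains(comments)").text())
          )
        }
    }
  }

  // Convenience wrapper for testing against a static HTML string
  def parseHtml(html: String): List[Story] = parse(Jsoup.parse(html))
}
```

For a live run, `StoryParser.parse(Jsoup.connect("https://news.ycombinator.com/").get())` would return the front-page stories, as long as the page markup still matches these selectors.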
