Yelp is a popular platform for discovering and reviewing local businesses. People often want to extract data from Yelp listings, such as review counts, ratings, and price ranges, for further analysis.

This guide will walk you through a full code sample for scraping key data points from Yelp listings using Kotlin. We'll specifically focus on restaurants in San Francisco.

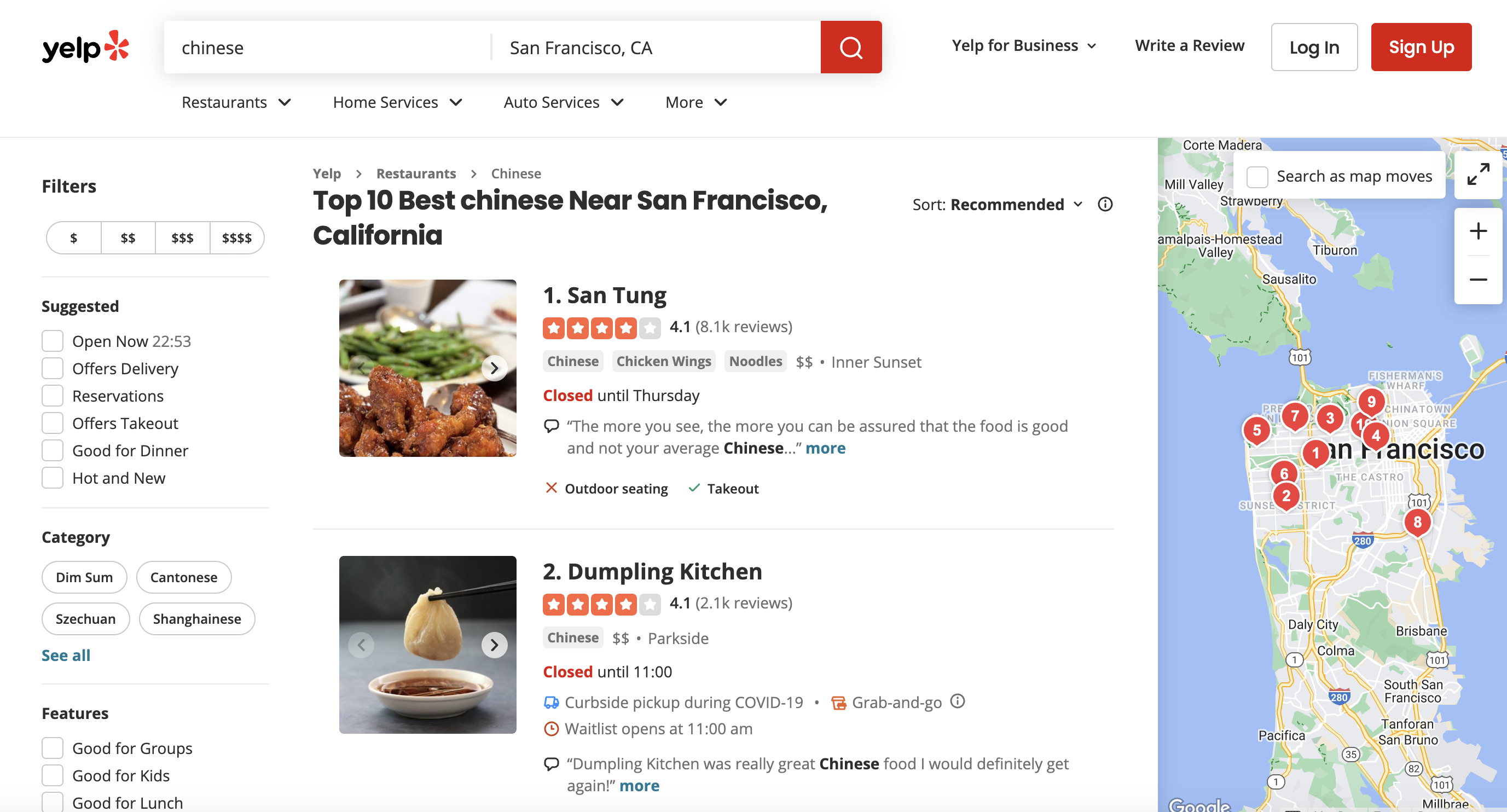

This is the search results page we will be scraping.

Importing Required Libraries

We need a few libraries to send HTTP requests and parse the responses:

import okhttp3.OkHttpClient

import okhttp3.Request

import org.jsoup.Jsoup

OkHttpClient sends requests and receives responses. Request describes the HTTP request to send. Jsoup parses the HTML in the response.
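If you build with Gradle, the two libraries could be declared roughly as follows. This is a sketch of a `build.gradle.kts` fragment; the version numbers are assumptions, so check for the releases you actually want to use.

```kotlin
// build.gradle.kts (fragment); the versions below are assumptions, not requirements
dependencies {
    implementation("com.squareup.okhttp3:okhttp:4.12.0") // HTTP client
    implementation("org.jsoup:jsoup:1.17.2")             // HTML parser
}
```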

Constructing the Yelp URL

Let's search for Chinese restaurants in San Francisco:

val url = "https://www.yelp.com/search?find_desc=chinese&find_loc=San+Francisco%2C+CA"

This URL will display our listings.
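To search for a different cuisine or city, the same URL can be assembled from its two query parameters. The sketch below assumes a hypothetical helper named `buildSearchUrl`; only the `find_desc` and `find_loc` parameter names come from the URL above.

```kotlin
import java.net.URLEncoder

// Hypothetical helper: builds a Yelp search URL from a search term and a location.
fun buildSearchUrl(term: String, location: String): String {
    // URLEncoder applies form encoding: spaces become '+', commas become %2C.
    val desc = URLEncoder.encode(term, "UTF-8")
    val loc = URLEncoder.encode(location, "UTF-8")
    return "https://www.yelp.com/search?find_desc=$desc&find_loc=$loc"
}

fun main() {
    // Prints the same URL used in this guide.
    println(buildSearchUrl("chinese", "San Francisco, CA"))
}
```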

Encoding the URL

We need to encode this URL before sending to the API:

val encodedUrl = java.net.URLEncoder.encode(url, "UTF-8")

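URLEncoder percent-encodes reserved characters such as :, /, ? and & (and turns spaces into +). This is needed because the whole Yelp URL is passed as the value of a single query parameter; left unencoded, its own & and = characters would be read as extra parameters of the API URL.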
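The effect of skipping the encoding step can be seen with a small experiment. This is a sketch only: `queryParamNames` is a hypothetical helper that naively splits on `&`, and `proxy.example` is a placeholder host rather than a real API.

```kotlin
import java.net.URLEncoder

// Hypothetical helper: splits the query string on '&' and returns the parameter names.
fun queryParamNames(endpoint: String): List<String> =
    endpoint.substringAfter('?').split('&').map { it.substringBefore('=') }

fun main() {
    val target = "https://www.yelp.com/search?find_desc=chinese&find_loc=San+Francisco%2C+CA"

    // Unencoded: find_loc leaks out and looks like a parameter of the outer URL.
    val raw = "http://proxy.example/?key=KEY&url=$target"
    println(queryParamNames(raw))

    // Encoded: the whole target URL stays inside the single url parameter.
    val encoded = "http://proxy.example/?key=KEY&url=" + URLEncoder.encode(target, "UTF-8")
    println(queryParamNames(encoded))
}
```

With the raw URL the helper reports three parameter names (`key`, `url`, `find_loc`); with the encoded one it reports only `key` and `url`.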

Setting Request Headers

We need to mimic a browser's headers to bypass bot detection:

val headers = mapOf(

"User-Agent" to "Mozilla/5.0...",

"Accept-Language" to "en-US,en;q=0.5",

// Accept-Encoding is left out on purpose: OkHttp requests gzip and decompresses it transparently only when this header is not set manually

"Referer" to "https://www.google.com/"

)

The headers make it seem like a real browser is sending the request.

Creating the HTTP Client

Let's create the OkHttpClient:

val client = OkHttpClient()

This will handle sending requests and receiving responses for us.
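Requests routed through a proxy can be slow, so it may be worth setting timeouts explicitly instead of relying on the defaults. The sketch below assumes OkHttp 4.x; `buildClient` is a hypothetical helper and the timeout values are illustrative.

```kotlin
import okhttp3.OkHttpClient
import java.util.concurrent.TimeUnit

// Hypothetical helper: a client with explicit timeouts (values are assumptions).
fun buildClient(): OkHttpClient =
    OkHttpClient.Builder()
        .connectTimeout(15, TimeUnit.SECONDS) // time allowed to establish the connection
        .readTimeout(60, TimeUnit.SECONDS)    // maximum wait between bytes of the response
        .build()

fun main() {
    val client = buildClient()
    println(client.connectTimeoutMillis) // 15000
    println(client.readTimeoutMillis)    // 60000
}
```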

Building the API Request

We'll use ProxiesAPI to proxy our requests:

val apiKey = "YOUR_AUTH_KEY"

val apiEndpoint = "http://api.proxiesapi.com/?premium=true&auth_key=$apiKey&url=$encodedUrl"

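val requestBuilder = Request.Builder().url(apiEndpoint)

// Request.Builder.headers() expects an okhttp3.Headers object, not a Map, so add each entry individually
headers.forEach { (name, value) -> requestBuilder.header(name, value) }

val request = requestBuilder.build()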

ProxiesAPI routes the request through residential proxies to get past Yelp's bot protection. The endpoint carries the auth_key and the encoded URL as query parameters, and the headers are attached to the request.
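The endpoint construction can be wrapped in a small function so that the encoding step is never forgotten. This is a minimal sketch: `buildProxyEndpoint` is a hypothetical helper, and the parameter names (`premium`, `auth_key`, `url`) are simply the ones used in this guide.

```kotlin
import java.net.URLEncoder

// Hypothetical helper: wraps a target URL in a ProxiesAPI endpoint.
fun buildProxyEndpoint(apiKey: String, targetUrl: String): String {
    // Encode both values so neither can break the outer query string.
    val encodedKey = URLEncoder.encode(apiKey, "UTF-8")
    val encodedUrl = URLEncoder.encode(targetUrl, "UTF-8")
    return "http://api.proxiesapi.com/?premium=true&auth_key=$encodedKey&url=$encodedUrl"
}

fun main() {
    println(buildProxyEndpoint("YOUR_AUTH_KEY", "https://www.yelp.com/search?find_desc=chinese"))
}
```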

Sending the Request

Let's send an HTTP GET request:

val response = client.newCall(request).execute()

This will send the request and store the API response.
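A response holds an open connection until it is closed, so it is usually wrapped in `use`. The sketch below assumes OkHttp 4.x; `bodyOrNull` and `fakeResponse` are hypothetical helpers, and the hand-built response only stands in for a real network call.

```kotlin
import okhttp3.Protocol
import okhttp3.Request
import okhttp3.Response
import okhttp3.ResponseBody.Companion.toResponseBody

// Hypothetical helper: returns the body text for 2xx responses and null otherwise.
// `use` closes the response in both cases so the connection is released.
fun bodyOrNull(response: Response): String? =
    response.use { if (it.isSuccessful) it.body?.string() else null }

// Builds a canned response so the helper can be tried without network access.
fun fakeResponse(code: Int, body: String): Response =
    Response.Builder()
        .request(Request.Builder().url("http://localhost/").build())
        .protocol(Protocol.HTTP_1_1)
        .code(code)
        .message("stub")
        .body(body.toResponseBody())
        .build()

fun main() {
    println(bodyOrNull(fakeResponse(200, "<html></html>"))) // body text
    println(bodyOrNull(fakeResponse(503, "blocked")))       // null
}
```

In real use you would pass `client.newCall(request).execute()` to `bodyOrNull` instead of a canned response.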

Parsing the Response with Jsoup

We can extract data by parsing the HTML:

val html = response.body?.string().orEmpty()

val document = Jsoup.parse(html)

Jsoup takes the HTML content and creates a document model for traversal.
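To get a feel for the document model before pointing it at Yelp, it can help to parse a tiny inline page. This is a sketch with made-up HTML; `firstText` is a hypothetical helper.

```kotlin
import org.jsoup.Jsoup

// Hypothetical helper: parses HTML and returns the text of the first element matching a CSS query.
fun firstText(html: String, cssQuery: String): String? =
    Jsoup.parse(html).selectFirst(cssQuery)?.text()

fun main() {
    val html = "<html><head><title>Demo</title></head><body><p class=\"note\">Hello</p></body></html>"
    println(Jsoup.parse(html).title()) // Demo
    println(firstText(html, "p.note")) // Hello
    println(firstText(html, "p.nope")) // null
}
```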

Extracting Listing Data

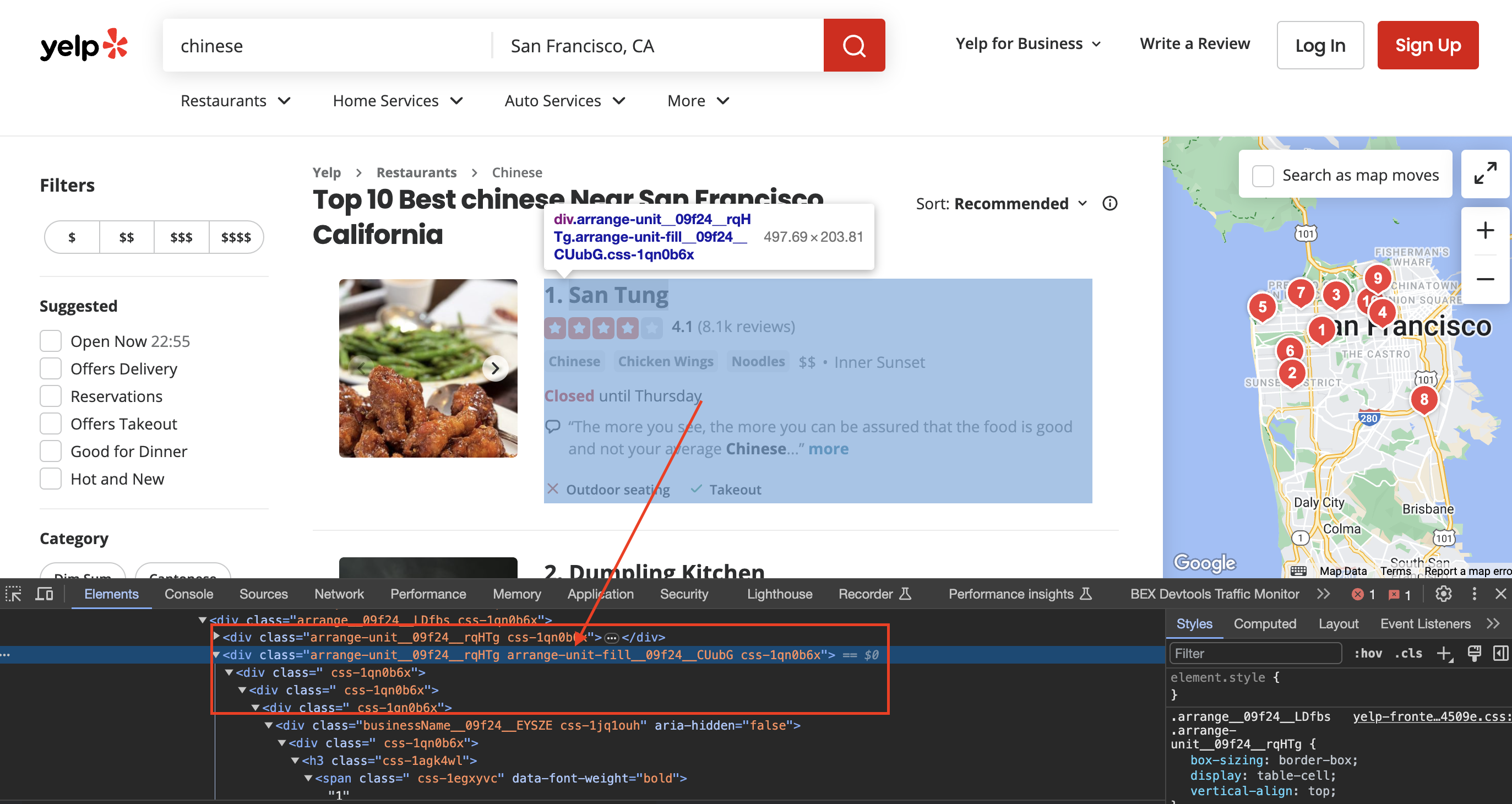

Inspecting the page

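When we inspect the page, we can see that each listing sits in a div with the classes arrange-unit__09f24__rqHTg, arrange-unit-fill__09f24__CUubG and css-1qn0b6x. These class names look auto-generated, so they may have changed by the time you run the code.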

Let's find all listings:

val listings = document.select("div.arrange-unit__09f24__rqHTg.arrange-unit-fill__09f24__CUubG.css-1qn0b6x")

This selector specifically targets the containers of individual listings on the page.
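Because the suffixes in these class names look generated, a selector that matches only the readable prefix may survive small markup changes better. The sketch below is an assumption rather than a tested approach against live Yelp pages: it uses Jsoup's `[attr*=value]` substring match, and the sample HTML is made up.

```kotlin
import org.jsoup.Jsoup
import org.jsoup.select.Elements

// Matches any div whose class attribute contains the readable prefix,
// ignoring the generated suffix. The choice of prefix is an assumption.
fun selectListings(html: String): Elements =
    Jsoup.parse(html).select("div[class*=arrange-unit-fill__]")

fun main() {
    val html = """
        <div class="arrange-unit__09f24__rqHTg arrange-unit-fill__09f24__CUubG css-1qn0b6x">A</div>
        <div class="arrange-unit__abc12__XyZ arrange-unit-fill__abc12__QrS css-zzz">B</div>
        <div class="sidebar">C</div>
    """
    println(selectListings(html).size) // 2
}
```

A looser selector can also match unrelated containers, so it is worth checking the count against what the page visibly shows.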

We loop through each listing container:

for (listing in listings) {

// Extract data from each listing

}

Inside, we can use additional selectors to extract information:

Business Name

val businessNameElem = listing.selectFirst("a.css-19v1rkv")

val businessName = businessNameElem?.text() ?: "N/A"

This selector finds the business name anchor tag. We fall back to "N/A" if it is not found.

Rating

val ratingElem = listing.selectFirst("span.css-gutk1c")

val rating = ratingElem?.text() ?: "N/A"

The rating is inside a specific span. Again, we fall back to "N/A".

The same approach works for price range, number of reviews, location and so on. Each data point is wrapped in a specific tag that we target with a selector.
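The repeated "select, then fall back to N/A" pattern can be folded into one extension function. This is a sketch: `Listing`, `textOrNa` and `parseListing` are hypothetical names, the selectors are the ones used in this guide, and the sample HTML is made up.

```kotlin
import org.jsoup.Jsoup
import org.jsoup.nodes.Element

// Hypothetical container for three of the fields discussed above.
data class Listing(val name: String, val rating: String, val priceRange: String)

// Returns the text of the first match, or "N/A" when the selector finds nothing.
fun Element.textOrNa(cssQuery: String): String = selectFirst(cssQuery)?.text() ?: "N/A"

fun parseListing(listing: Element): Listing = Listing(
    name = listing.textOrNa("a.css-19v1rkv"),
    rating = listing.textOrNa("span.css-gutk1c"),
    priceRange = listing.textOrNa("span.priceRange__09f24__mmOuH")
)

fun main() {
    // Made-up listing markup without a price range element.
    val html = """<div><a class="css-19v1rkv">Golden Wok</a><span class="css-gutk1c">4.5</span></div>"""
    val listing = Jsoup.parse(html).body().child(0)
    println(parseListing(listing)) // Listing(name=Golden Wok, rating=4.5, priceRange=N/A)
}
```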

Finally, we print out the extracted information:

println("Business Name: $businessName")

println("Rating: $rating")

// etc

Full code:

import okhttp3.OkHttpClient

import okhttp3.Request

import org.jsoup.Jsoup

fun main() {

// URL of the Yelp search page

val url = "https://www.yelp.com/search?find_desc=chinese&find_loc=San+Francisco%2C+CA"

// Encode the URL

val encodedUrl = java.net.URLEncoder.encode(url, "UTF-8")

// API URL with the encoded Yelp URL

val apiKey = "YOUR_AUTH_KEY"

val apiEndpoint = "http://api.proxiesapi.com/?premium=true&auth_key=$apiKey&url=$encodedUrl"

// Define user-agent header to simulate a browser request

val headers = mapOf(

"User-Agent" to "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36",

"Accept-Language" to "en-US,en;q=0.5",

// Accept-Encoding is left out on purpose: OkHttp requests gzip and decompresses it transparently only when this header is not set manually

"Referer" to "https://www.google.com/"

)

// Create an OkHttpClient instance

val client = OkHttpClient()

// Build the request with headers

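val requestBuilder = Request.Builder().url(apiEndpoint)

// Request.Builder.headers() expects an okhttp3.Headers object, not a Map, so add each entry individually
headers.forEach { (name, value) -> requestBuilder.header(name, value) }

val request = requestBuilder.build()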

// Send an HTTP GET request

val response = client.newCall(request).execute()

// Check if the request was successful (any 2xx status code)

if (response.isSuccessful) {

// Parse the HTML content of the page using Jsoup

val html = response.body?.string().orEmpty()

val document = Jsoup.parse(html)

// Find all the listings

val listings = document.select("div.arrange-unit__09f24__rqHTg.arrange-unit-fill__09f24__CUubG.css-1qn0b6x")

println(listings.size)

// Loop through each listing and extract information

for (listing in listings) {

// Extract the business name first; containers without one are skipped below

// Check if business name exists

val businessNameElem = listing.selectFirst("a.css-19v1rkv")

val businessName = businessNameElem?.text() ?: "N/A"

// If business name is not "N/A," then print the information

if (businessName != "N/A") {

// Check if rating exists

val ratingElem = listing.selectFirst("span.css-gutk1c")

val rating = ratingElem?.text() ?: "N/A"

// Check if price range exists

val priceRangeElem = listing.selectFirst("span.priceRange__09f24__mmOuH")

val priceRange = priceRangeElem?.text() ?: "N/A"

// Find all <span> elements inside the listing

val spanElements = listing.select("span.css-chan6m")

// Initialize numReviews and location as "N/A"

var numReviews = "N/A"

var location = "N/A"

// Check if there are at least two <span> elements

if (spanElements.size >= 2) {

// The first <span> element is for Number of Reviews

numReviews = spanElements[0].text().trim()

// The second <span> element is for Location

location = spanElements[1].text().trim()

} else if (spanElements.size == 1) {

// If there's only one <span> element, check if it's for Number of Reviews or Location

val text = spanElements[0].text().trim()

if (text.matches("\\d+".toRegex())) {

numReviews = text

} else {

location = text

}

}

// Print the extracted information

println("Business Name: $businessName")

println("Rating: $rating")

println("Number of Reviews: $numReviews")

println("Price Range: $priceRange")

println("Location: $location")

println("=".repeat(30))

}

}

} else {

println("Failed to retrieve data. Status Code: ${response.code}")

}

}
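The review-count and location heuristic in the listing above can also be isolated into a pure function, which makes it easy to try against sample inputs. This is a sketch that mirrors the same rules; `classifySpans` is a hypothetical name and the sample values are made up.

```kotlin
// Mirrors the span heuristic above: with two or more spans, the first is the review
// count and the second the location; with one span, all digits means a review count.
// Returns a pair of (numReviews, location).
fun classifySpans(texts: List<String>): Pair<String, String> {
    val cleaned = texts.map { it.trim() }
    return when {
        cleaned.size >= 2 -> cleaned[0] to cleaned[1]
        cleaned.size == 1 && cleaned[0].matches(Regex("\\d+")) -> cleaned[0] to "N/A"
        cleaned.size == 1 -> "N/A" to cleaned[0]
        else -> "N/A" to "N/A"
    }
}

fun main() {
    println(classifySpans(listOf("128", "Chinatown"))) // (128, Chinatown)
    println(classifySpans(listOf("Mission")))          // (N/A, Mission)
    println(classifySpans(emptyList()))                // (N/A, N/A)
}
```

In the scraper, the input would be `spanElements.map { it.text() }`.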