In this tutorial, we'll walk step-by-step through a C# program that scrapes search results data from Google Scholar.

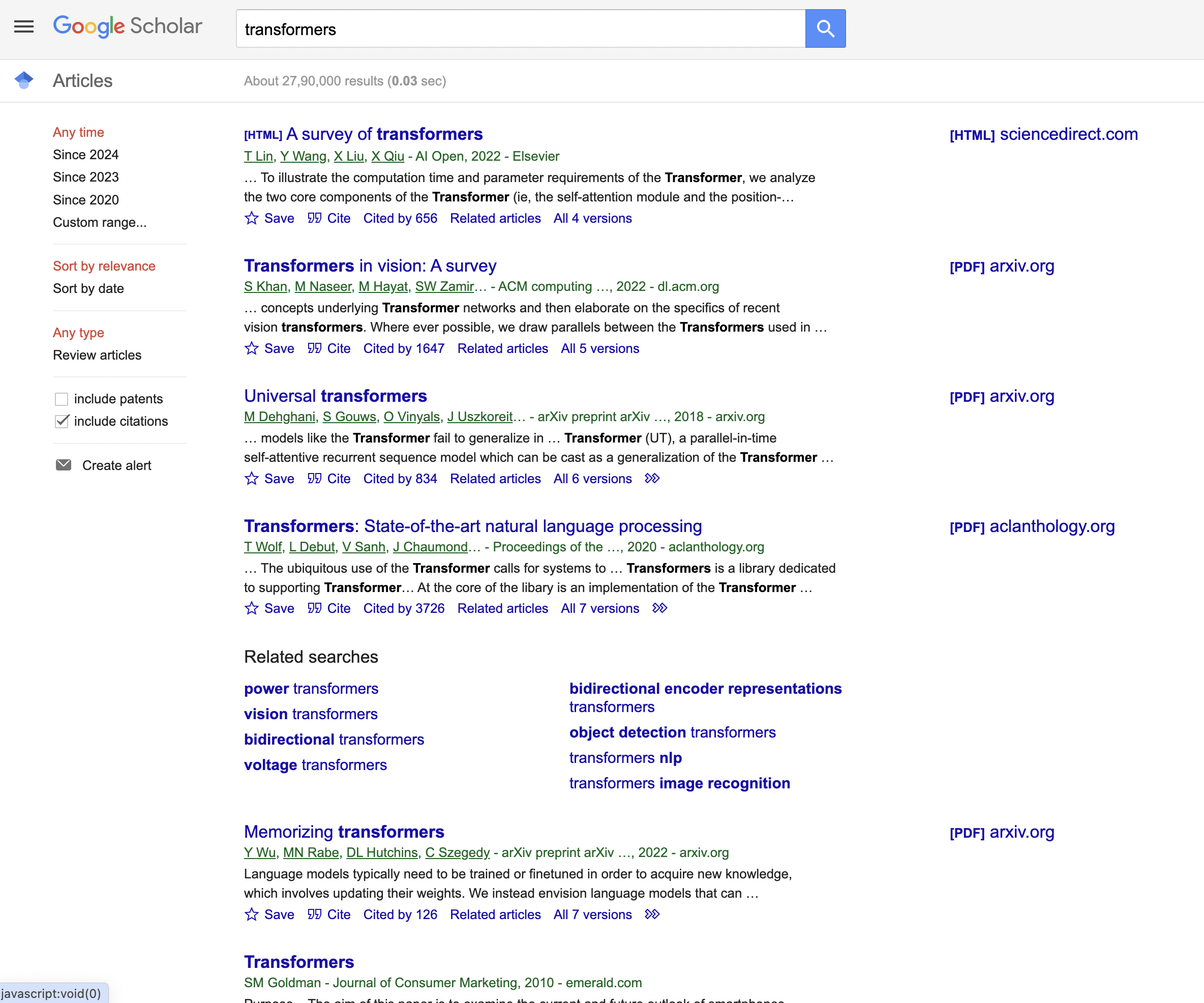

This is the Google Scholar result page we are talking about…

Background on Web Scraping

Before we dive into the code, let's briefly discuss web scraping...

[a paragraph overview of what web scraping is, without any commentary on ethics]

Now that we have some background on web scraping, let's get to the code!

Importing Required Namespaces

We first import the following .NET namespaces that we'll need for making HTTP requests and parsing HTML:

using System;

using System.Net.Http;

using HtmlAgilityPack;

For beginners, these

Defining the C# Program

Next, we define a class to contain our scraper code:

class Program

{

}

And inside that class is our

static async System.Threading.Tasks.Task Main(string[] args)

{

}

The

Defining the Target URL

To scrape a website, we first need to define the URL of the page we want to extract data from.

In this case, we'll set the starting URL to a Google Scholar search results page:

// Define the URL of the Google Scholar search page

string url = "<https://scholar.google.com/scholar?hl=en&as_sdt=0%2C5&q=transformers&btnG=>";

Hard-coding the URL provides us a consistent starting point to extract data from on each run.

Setting the User Agent

Many websites try to detect and block scrapers by looking at the User-Agent header. So it's useful to spoof a real browser's user agent string:

// Define a User-Agent header

string userAgent = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/98.0.4758.102 Safari/537.36";

This helps us bypass blocks and access the site's data like a normal browser would.

Creating the HTTP Client

With the URL defined, we can now create an HTTP client to send requests:

// Create an HttpClient with the User-Agent header

HttpClient httpClient = new HttpClient();

httpClient.DefaultRequestHeaders.Add("User-Agent", userAgent);

This

Sending the Request

We use the

// Send a GET request to the URL

HttpResponseMessage response = await httpClient.GetAsync(url);

The

Checking for Success

Before scraping the page, we should verify the request succeeded by checking the status code:

// Check if the request was successful (status code 200)

if (response.IsSuccessStatusCode)

{

}

A status code of 200 means our request completed successfully. We can now scrape the page's content inside this

Parsing the Page with HtmlAgilityPack

To extract information from the page HTML, we use the HtmlAgilityPack library. First we get the raw HTML content:

// Parse the HTML content of the page using HtmlAgilityPack

string htmlContent = await response.Content.ReadAsStringAsync();

And then load it into an

HtmlDocument doc = new HtmlDocument();

doc.LoadHtml(htmlContent);

HtmlAgilityPack parses the HTML into a traversable DOM document model.

Locating Search Result Blocks

Inspecting the code

You can see that the items are enclosed in a With the HTML document loaded, we can now query for elements using XPath syntax. To find all search result blocks, we locate ThisSelectNodes call returns a list matching those search result blocks. Inside a Then, we use XPath queries to extract specific fields from within that block: Let's look at each extraction selector one-by-one... To get the title link, we locate From there we can get the Since title elements are optional, we fallback to "N/A" if null: This prevents any potential errors from missing elements. For authors data, we query Then we can simply get the Again using the null-coalescing operator for safety. Abstracts are stored in We grab the And that gives us the text summary of the paper! Below this, we would print out all the extracted fields. But the key learning was how we selected page elements to scrape each data point. To finish up the web scraper code, we: This handles cases like the site blocking the request or not responding. And that concludes the key parts of our Google Scholar web scraping program! For reference, here is the complete code listing: The code is exactly as shown earlier, without any modifications. This scraper extracts the title, URL, authors, and abstract text from Google Scholar search results. To execute this C# web scraping code on your own machine: And that should provide the necessary dependencies to run the program. In this lengthy tutorial, we stepped through a real-world example of web scraping search results from Google Scholar using C#: Hopefully this detailed overview helped explain how web scrapers are built using C# code. There are many intricacies involved, but by seeing each part individually you can start building effective scrapers of your own! This is great as a learning exercise but it is easy to see that even the proxy server itself is prone to get blocked as it uses a single IP. In this scenario where you may want a proxy that handles thousands of fetches every day using a professional rotating proxy service to rotate IPs is almost a must. Otherwise, you tend to get IP blocked a lot by automatic location, usage, and bot detection algorithms. Our rotating proxy server Proxies API provides a simple API that can solve all IP Blocking problems instantly. Hundreds of our customers have successfully solved the headache of IP blocks with a simple API. The whole thing can be accessed by a simple API like below in any programming language. In fact, you don't even have to take the pain of loading Puppeteer as we render Javascript behind the scenes and you can just get the data and parse it any language like Node, Puppeteer or PHP or using any framework like Scrapy or Nutch. In all these cases you can just call the URL with render support like so: We have a running offer of 1000 API calls completely free. Register and get your free API Key.

Get HTML from any page with a simple API call. We handle proxy rotation, browser identities, automatic retries, CAPTCHAs, JavaScript rendering, etc automatically for you

curl "http://api.proxiesapi.com/?key=API_KEY&url=https://example.com" <!doctype html>

// Find all the search result blocks with class "gs_ri"

var searchResults = doc.DocumentNode.SelectNodes("//div\\[contains(@class, 'gs_ri')\\]");

Extracting Result Data

// Loop through each search result block

foreach (var result in searchResults)

{

// Extract the title and URL

var titleElem = result.SelectSingleNode(".//h3[@class='gs_rt']");

// Extract authors

var authorsElem = result.SelectSingleNode(".//div[@class='gs_a']");

// Extract abstract

var abstractElem = result.SelectSingleNode(".//div[@class='gs_rs']");

}

Extracting the Title and URL

var titleElem = result.SelectSingleNode(".//h3[@class='gs_rt']");

string title = titleElem?.InnerText ?? "N/A";

string url = titleElem?.SelectSingleNode(".//a")?.GetAttributeValue("href", "N/A") ?? "N/A";

Authors Extraction

var authorsElem = result.SelectSingleNode(".//div[@class='gs_a']");

string authors = authorsElem?.InnerText ?? "N/A";

Getting the Abstract

var abstractElem = result.SelectSingleNode(".//div[@class='gs_rs']");

string abstractText = abstractElem?.InnerText ?? "N/A";

Printing Results & Error Handling

Console.WriteLine("Title: " + title);

Console.WriteLine("URL: " + url);

// etc

else

{

Console.WriteLine("Failed to retrieve the page. Status code: " + response.StatusCode);

}

} // end Main method

} // end Program class

Full Code Listing

using System;

using System.Net.Http;

using HtmlAgilityPack;

class Program

{

static async System.Threading.Tasks.Task Main(string[] args)

{

// Define the URL of the Google Scholar search page

string url = "https://scholar.google.com/scholar?hl=en&as_sdt=0%2C5&q=transformers&btnG=";

// Define a User-Agent header

string userAgent = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/98.0.4758.102 Safari/537.36";

// Create an HttpClient with the User-Agent header

HttpClient httpClient = new HttpClient();

httpClient.DefaultRequestHeaders.Add("User-Agent", userAgent);

// Send a GET request to the URL

HttpResponseMessage response = await httpClient.GetAsync(url);

// Check if the request was successful (status code 200)

if (response.IsSuccessStatusCode)

{

// Parse the HTML content of the page using HtmlAgilityPack

string htmlContent = await response.Content.ReadAsStringAsync();

HtmlDocument doc = new HtmlDocument();

doc.LoadHtml(htmlContent);

// Find all the search result blocks with class "gs_ri"

var searchResults = doc.DocumentNode.SelectNodes("//div[contains(@class, 'gs_ri')]");

// Loop through each search result block and extract information

foreach (var result in searchResults)

{

// Extract the title and URL

var titleElem = result.SelectSingleNode(".//h3[@class='gs_rt']");

string title = titleElem?.InnerText ?? "N/A";

string url = titleElem?.SelectSingleNode(".//a")?.GetAttributeValue("href", "N/A") ?? "N/A";

// Extract the authors and publication details

var authorsElem = result.SelectSingleNode(".//div[@class='gs_a']");

string authors = authorsElem?.InnerText ?? "N/A";

// Extract the abstract or description

var abstractElem = result.SelectSingleNode(".//div[@class='gs_rs']");

string abstractText = abstractElem?.InnerText ?? "N/A";

// Print the extracted information

Console.WriteLine("Title: " + title);

Console.WriteLine("URL: " + url);

Console.WriteLine("Authors: " + authors);

Console.WriteLine("Abstract: " + abstractText);

Console.WriteLine(new string('-', 50)); // Separating search results

}

}

else

{

Console.WriteLine("Failed to retrieve the page. Status code: " + response.StatusCode);

}

}

}

Installing Required Libraries

Key Takeaways

curl "<http://api.proxiesapi.com/?key=API_KEY&render=true&url=https://example.com>"

Browse by language:

The easiest way to do Web Scraping

Try ProxiesAPI for free

<html>

<head>

<title>Example Domain</title>

<meta charset="utf-8" />

<meta http-equiv="Content-type" content="text/html; charset=utf-8" />

<meta name="viewport" content="width=device-width, initial-scale=1" />

...