Google Scholar is an invaluable resource for researching academic papers and articles across disciplines. The search engine provides detailed information on citations, related works, excerpts, and more for each result. However, there is no official API for programmatically accessing this data.

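In this article, we'll use Rust to scrape and extract key fields from Google Scholar search result pages. Specifically, we'll cover sending the request, parsing the page, and extracting the title, URL, authors, and abstract of each result.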

We assume some prior experience with Rust.

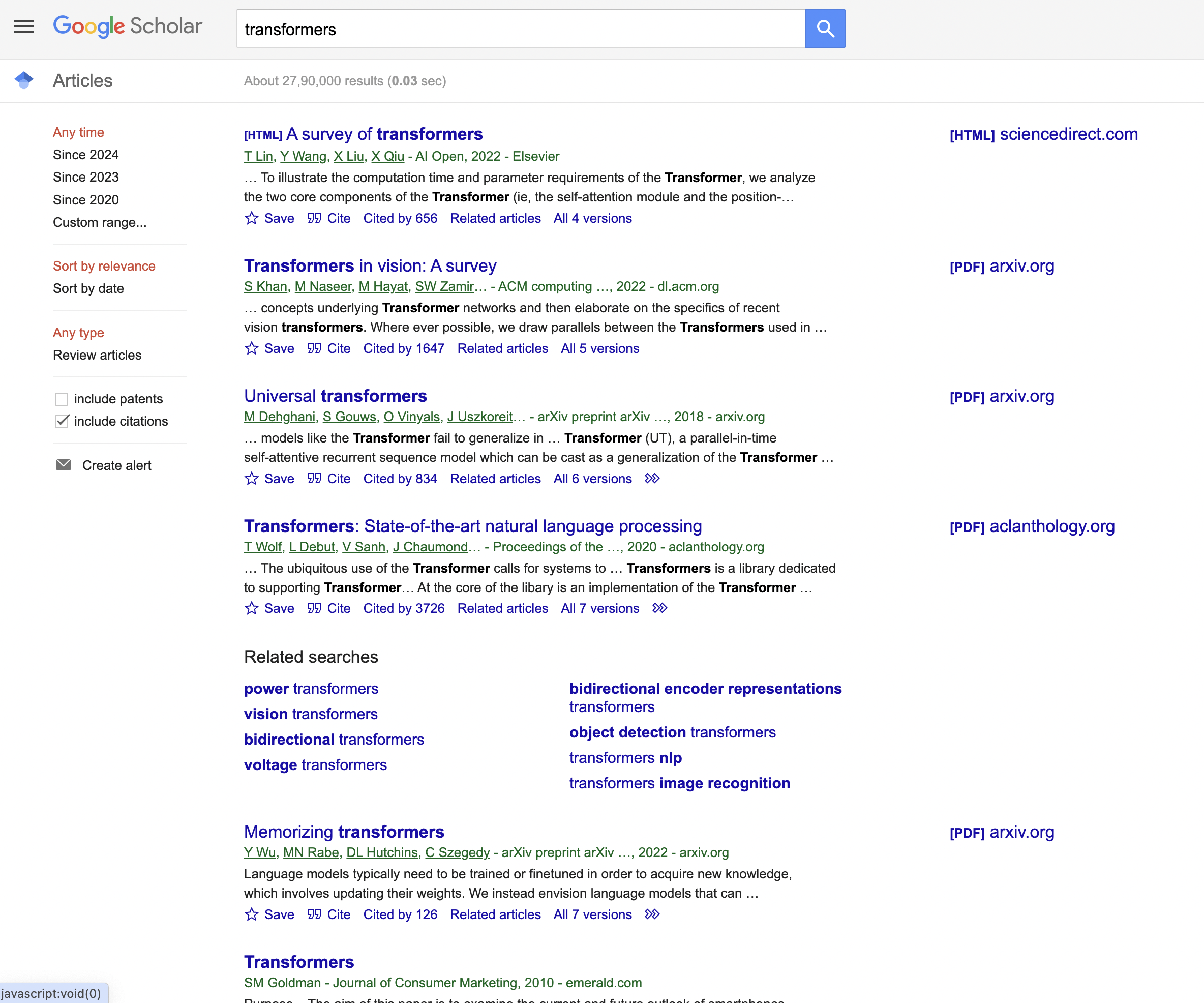

This is the Google Scholar result page we are talking about…

Let's get started!

Installation

First, make sure Rust is installed on your system:

rustup update

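Next, we need the reqwest crate (with its blocking feature enabled, since the code below uses reqwest::blocking) and the select crate:

cargo add reqwest --features blocking
cargo add select

Bring these crates into scope in your Rust code. On the 2018 edition and later, the extern crate lines are optional:

extern crate reqwest;
extern crate select;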

Now we're ready to scrape!

Sending the Request

We first define the URL of a Google Scholar search results page to scrape:

let url = "https://scholar.google.com/scholar?hl=en&as_sdt=0%2C5&q=transformers&btnG=";

Note that this URL string includes the search parameters (hl, as_sdt, q, and btnG). Keep the literal exactly as shown.
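To see what the search parameters in that URL contain, here is a minimal sketch that splits the query string into decoded key/value pairs using only the standard library. The helper names `percent_decode` and `query_params` are hypothetical and not part of reqwest or select; a dedicated URL-parsing crate would be more robust for real projects.

```rust
/// Decode %XX escapes and '+' (space) in one query-string component.
/// Malformed escapes are kept literally.
fn percent_decode(input: &str) -> String {
    let bytes = input.as_bytes();
    let mut out = Vec::with_capacity(bytes.len());
    let mut i = 0;
    while i < bytes.len() {
        if bytes[i] == b'%' && i + 2 < bytes.len() {
            let hi = (bytes[i + 1] as char).to_digit(16);
            let lo = (bytes[i + 2] as char).to_digit(16);
            if let (Some(hi), Some(lo)) = (hi, lo) {
                out.push((hi * 16 + lo) as u8);
                i += 3;
                continue;
            }
        }
        out.push(if bytes[i] == b'+' { b' ' } else { bytes[i] });
        i += 1;
    }
    String::from_utf8_lossy(&out).into_owned()
}

/// Split everything after '?' into decoded (key, value) pairs.
fn query_params(url: &str) -> Vec<(String, String)> {
    let query = match url.split_once('?') {
        Some((_, query)) => query,
        None => return Vec::new(),
    };
    query
        .split('&')
        .filter(|pair| !pair.is_empty())
        .map(|pair| {
            let (key, value) = pair.split_once('=').unwrap_or((pair, ""));
            (percent_decode(key), percent_decode(value))
        })
        .collect()
}

fn main() {
    let url = "https://scholar.google.com/scholar?hl=en&as_sdt=0%2C5&q=transformers&btnG=";
    for (key, value) in query_params(url) {
        println!("{} = {:?}", key, value);
    }
}
```

The q parameter carries the search term, so that is the part to change if you want to search for something other than transformers.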

Next we create a reqwest client and send a GET request:

let client = reqwest::blocking::Client::new();
let res = client
    .get(url)
    .header("User-Agent", "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/98.0.4758.102 Safari/537.36")
    .send();

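We send a Chrome User-Agent string so the request looks like it comes from a regular browser, which lowers (but does not eliminate) the chance of bot detection. Now we can check the response status: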

match res {
    Ok(response) => {
        if response.status().is_success() {
            // Parse page
        } else {
            // Handle error
        }
    }
    Err(err) => {
        // Network error
    }
}

Any status in the 2xx range, such as 200, counts as success; that is what is_success() checks. Anything else means the page content was not retrieved and needs handling.
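As a rough illustration of what "needs handling" could mean, the sketch below sorts status codes into three buckets. The `FetchOutcome` enum and `classify_status` function are hypothetical names, and the policy (retry on 429 and 5xx, give up on everything else) is an assumption rather than something Google Scholar documents.

```rust
/// What a scraper might do next after seeing a status code (illustrative only).
#[derive(Debug, PartialEq)]
enum FetchOutcome {
    /// 2xx: the body can be parsed.
    Success,
    /// 429 or 5xx: possibly temporary, so wait and try again.
    RetryLater,
    /// Anything else: retrying the same request is unlikely to help.
    GiveUp,
}

fn classify_status(code: u16) -> FetchOutcome {
    match code {
        200..=299 => FetchOutcome::Success,
        429 | 500..=599 => FetchOutcome::RetryLater,
        _ => FetchOutcome::GiveUp,
    }
}

fn main() {
    for &code in &[200u16, 429, 503, 404] {
        println!("{} -> {:?}", code, classify_status(code));
    }
}
```

With reqwest, the numeric code is available as response.status().as_u16(), so a function like this could slot into the else branch of the status check.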

Parsing the Page

With a successful response, we access the HTML body:

let body = response.text().unwrap();

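We pass this to the select crate's Document type, which parses the HTML into a tree we can query. Document comes from select::document, and the Class and Name predicates used later come from select::predicate:

let document = Document::from(body.as_str());

Now we can search the document for the elements that hold each field.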

Extracting Title and URL

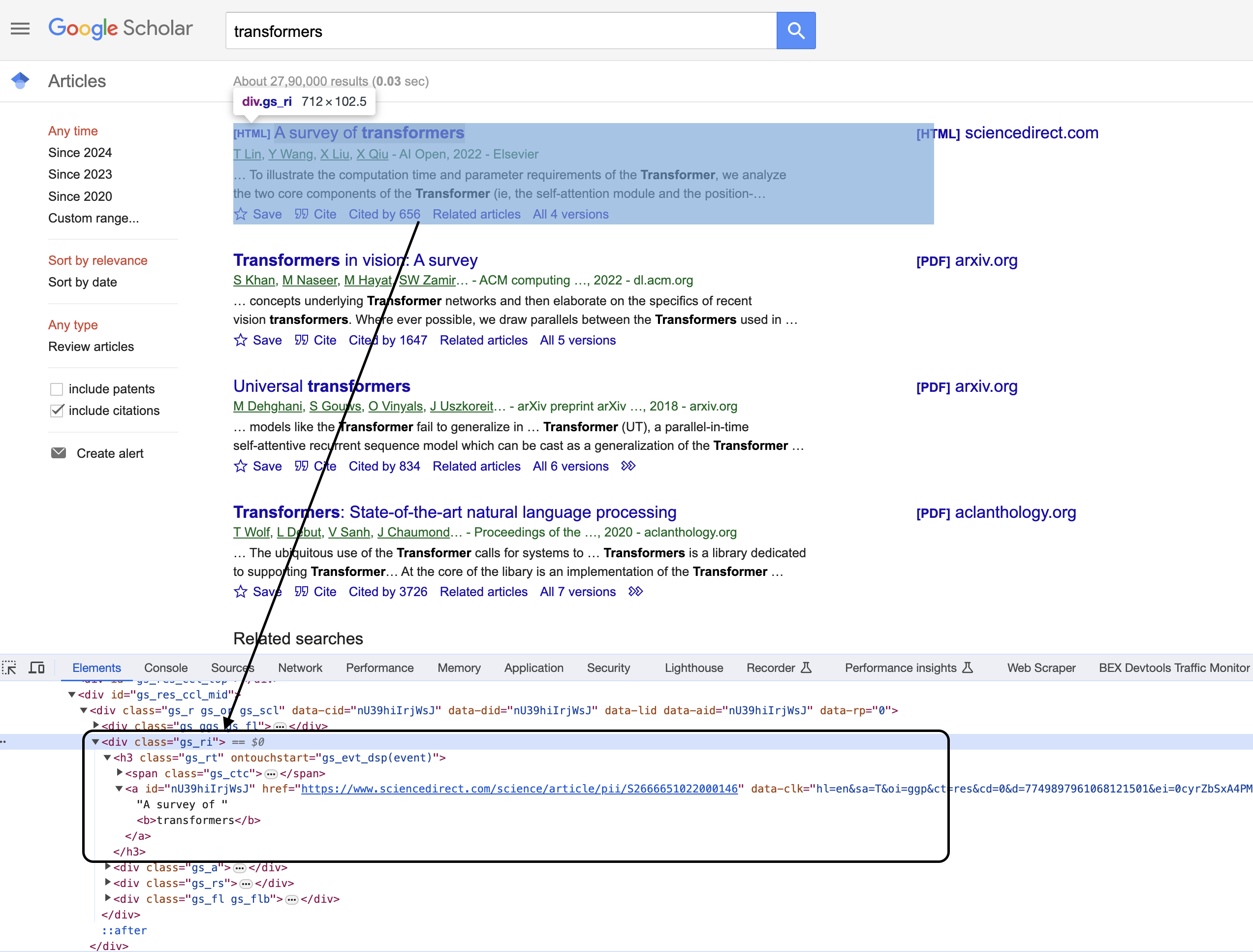

Inspecting the code

You can see that the items are enclosed in an element with the class gs_ri. Let's start with the title and URL using this selector:

for node in document.find(Class("gs_ri")) {
    // Extraction logic for each result goes here
}

This finds all elements with CSS class gs_ri. Inside the loop, we extract the first h3 tag, which holds the title:

let title_elem = node.find(Name("h3")).next();

We map this to the text content:

let title = title_elem
    .map(|elem| elem.text())
    .unwrap_or("N/A".to_string());

If no h3 tag matched, default to "N/A". Finally, extract the href attribute for the URL. The href sits on the link inside the h3 rather than on the h3 itself, so we look up the a tag first:

let url = title_elem
    .and_then(|elem| elem.find(Name("a")).next())
    .and_then(|link| link.attr("href"))
    .unwrap_or("N/A");

And we have title and URL! We would print these fields out.

Extracting Authors

For authors, we select the element with class gs_a:

let authors_elem = node.find(Class("gs_a")).next();

Then map to text as before:

let authors = authors_elem
    .map(|elem| elem.text())
    .unwrap_or("N/A".to_string());

If no author, again default to "N/A".

Getting the Abstract

Finally, for the excerpt/abstract, we target the element with class gs_rs. Because abstract is a reserved keyword in Rust, the variable is named abstract_text:

let abstract_elem = node.find(Class("gs_rs")).next();

let abstract_text = abstract_elem
    .map(|elem| elem.text())
    .unwrap_or("N/A".to_string());

And that covers scraping all the key fields from a Google Scholar search result!

Bringing the Data Together

Below the extraction logic, we print out each field value for convenience:

println!("Title: {}", title);
println!("URL: {}", url);
println!("Authors: {}", authors);
println!("Abstract: {}", abstract_text);

The full sequence extracts, processes, and prints the title, URL, authors list, and abstract snippet for each of the top 10 search result blocks from Google Scholar with Rust!

While basic, this demonstrates core techniques for practical web scraping, such as sending a request with custom headers, checking the response status, parsing HTML, selecting elements by class and tag, and handling missing fields.

There is certainly more to cover, but these foundations should enable you to get started with scraping projects of your own!

Full Code

For easy reference, here is the complete Rust code covered in this article:

extern crate reqwest;
extern crate select;

use select::document::Document;
use select::predicate::{Class, Name};

fn main() {
    // Define the URL of the Google Scholar search page
    let url = "https://scholar.google.com/scholar?hl=en&as_sdt=0%2C5&q=transformers&btnG=";

    // Send a GET request to the URL
    let client = reqwest::blocking::Client::new();
    let res = client
        .get(url)
        .header("User-Agent", "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/98.0.4758.102 Safari/537.36")
        .send();

    // Check if the request was successful
    match res {
        Ok(response) => {
            if response.status().is_success() {
                // Parse the HTML content of the page
                let body = response.text().unwrap();
                let document = Document::from(body.as_str());

                // Find all the search result blocks with class "gs_ri"
                for node in document.find(Class("gs_ri")) {
                    // Extract the title, then the URL from the link inside the h3
                    let title_elem = node.find(Name("h3")).next();
                    let title = title_elem.map(|elem| elem.text()).unwrap_or("N/A".to_string());
                    let url = title_elem
                        .and_then(|elem| elem.find(Name("a")).next())
                        .and_then(|link| link.attr("href"))
                        .unwrap_or("N/A");

                    // Extract the authors and publication details
                    let authors_elem = node.find(Class("gs_a")).next();
                    let authors = authors_elem.map(|elem| elem.text()).unwrap_or("N/A".to_string());

                    // Extract the abstract or description ("abstract" is a reserved keyword)
                    let abstract_elem = node.find(Class("gs_rs")).next();
                    let abstract_text = abstract_elem.map(|elem| elem.text()).unwrap_or("N/A".to_string());

                    // Print the extracted information
                    println!("Title: {}", title);
                    println!("URL: {}", url);
                    println!("Authors: {}", authors);
                    println!("Abstract: {}", abstract_text);
                    println!("{}", "-".repeat(50)); // Separating search results
                }
            } else {
                println!("Failed to retrieve the page. Status code: {}", response.status());
            }
        }
        Err(err) => {
            println!("Error: {}", err);
        }
    }
}

This is great as a learning exercise, but it is easy to see that a scraper like this is prone to getting blocked because it uses a single IP. If you need to handle thousands of fetches every day, using a professional rotating proxy service to rotate IPs is almost a must. Otherwise, you tend to get IP blocked a lot by automatic location, usage, and bot detection algorithms.

Our rotating proxy server Proxies API provides a simple API designed to solve IP blocking problems. Hundreds of our customers have successfully solved the headache of IP blocks with a simple API.

The whole thing can be accessed by a simple API like below in any programming language.

curl "http://api.proxiesapi.com/?key=API_KEY&url=https://example.com"

In fact, you don't even have to take the pain of loading Puppeteer, as we render JavaScript behind the scenes. You can just get the data and parse it in any language, such as Node or PHP, or with any framework, such as Scrapy or Nutch. In all these cases you can just call the URL with render support like so:

curl "http://api.proxiesapi.com/?key=API_KEY&render=true&url=https://example.com"

We have a running offer of 1000 API calls completely free. Register and get your free API Key.
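One detail worth noting about the Proxies API calls in this article: the example target, https://example.com, has no query string of its own. A Google Scholar search URL does, and its & characters would otherwise be read as separate parameters of the API call. The sketch below percent-encodes the target before appending it. The function names `percent_encode` and `proxies_api_url` are hypothetical, and the assumption that the service accepts a percent-encoded url value follows standard query-string conventions rather than anything stated in the article.

```rust
/// Percent-encode everything except RFC 3986 unreserved characters.
fn percent_encode(input: &str) -> String {
    let mut out = String::with_capacity(input.len());
    for byte in input.bytes() {
        match byte {
            b'A'..=b'Z' | b'a'..=b'z' | b'0'..=b'9' | b'-' | b'_' | b'.' | b'~' => {
                out.push(byte as char)
            }
            _ => out.push_str(&format!("%{:02X}", byte)),
        }
    }
    out
}

/// Build the request URL from the key, render, and url parameters
/// that appear in the article's curl examples.
fn proxies_api_url(api_key: &str, target_url: &str, render: bool) -> String {
    let mut url = format!("http://api.proxiesapi.com/?key={}", percent_encode(api_key));
    if render {
        url.push_str("&render=true");
    }
    url.push_str("&url=");
    url.push_str(&percent_encode(target_url));
    url
}

fn main() {
    let target = "https://scholar.google.com/scholar?hl=en&as_sdt=0%2C5&q=transformers&btnG=";
    // API_KEY is a placeholder, as in the curl examples.
    println!("{}", proxies_api_url("API_KEY", target, false));
}
```

The resulting string could then be passed to client.get(...) in place of the direct Scholar URL.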
