Introduction

In this article, we will take you through the process of scraping data from Wikipedia using C# and the HtmlAgilityPack library. Web scraping is a powerful technique for extracting information from websites, and it can be a valuable skill for data collection, analysis, and automation.

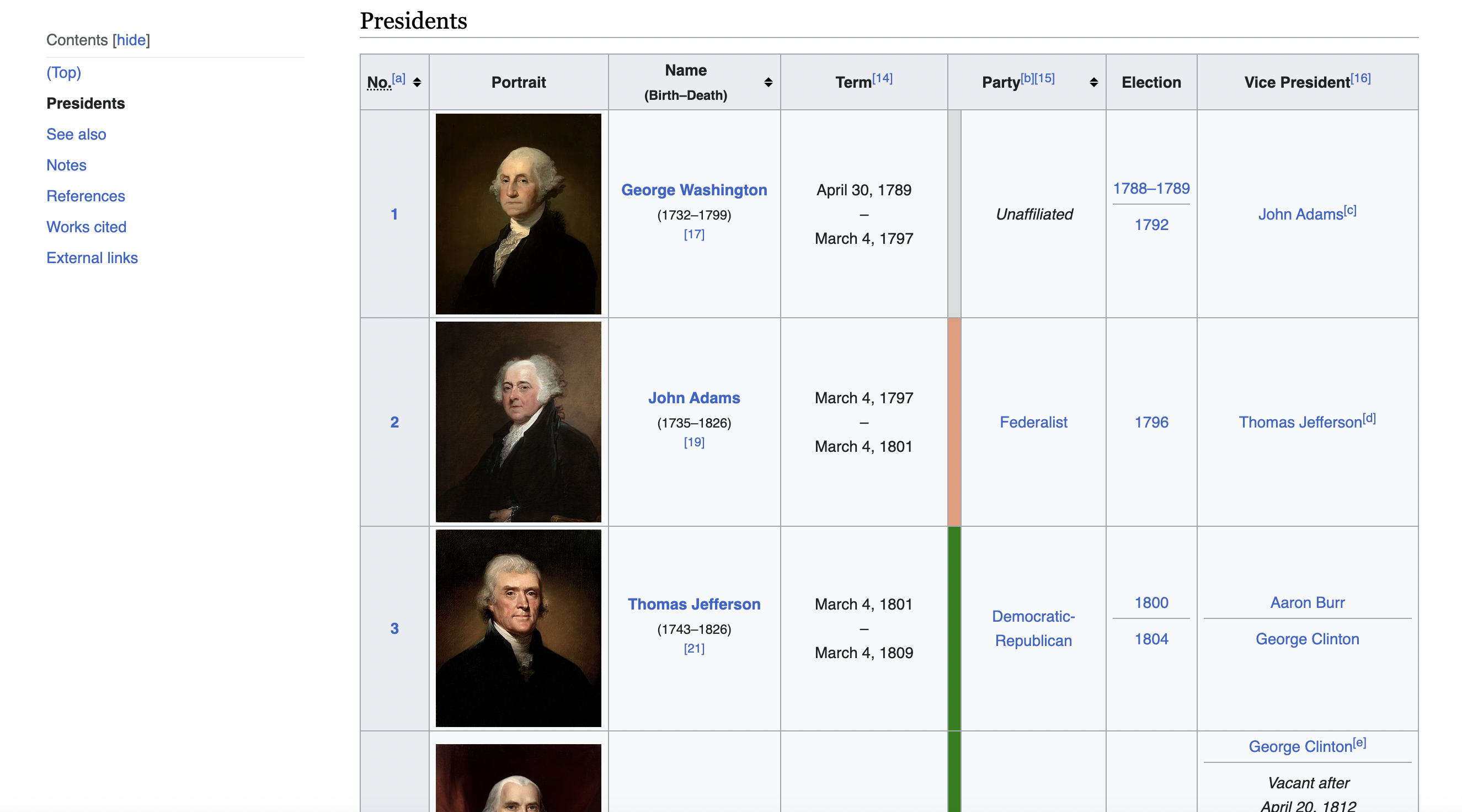

Scenario: Imagine you're working on a research project or just curious about the list of U.S. Presidents, and you want to collect data from Wikipedia to create your own dataset. We'll guide you through each step, providing explanations, tips, and tricks along the way.

This is the table we are talking about

Plan of Action

Step 1: Define the Goal

Step 2: Set Up Your Environment

Step 3: Choose the Website to Scrape

Step 4: Simulate a Browser Request

Step 5: Load the HTML Content

Step 6: Find the Data

Step 7: Extract and Store the Data

Step 8: Display the Scraped Data

Detailed Instructions

Now, let's dive into the details of each step.

Step 2: Set Up Your Environment

Before we start coding, make sure you have the following in place:

Step 4: Simulate a Browser Request

string userAgent = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36";

In our code, we define a user-agent header. This header tells the web server that our request is coming from a web browser, making it less likely to be blocked.

Step 5: Load the HTML Content

var web = new HtmlWeb

{

UserAgent = userAgent

};

var doc = web.Load(url);

We use HtmlAgilityPack's

Step 6: Find the Data

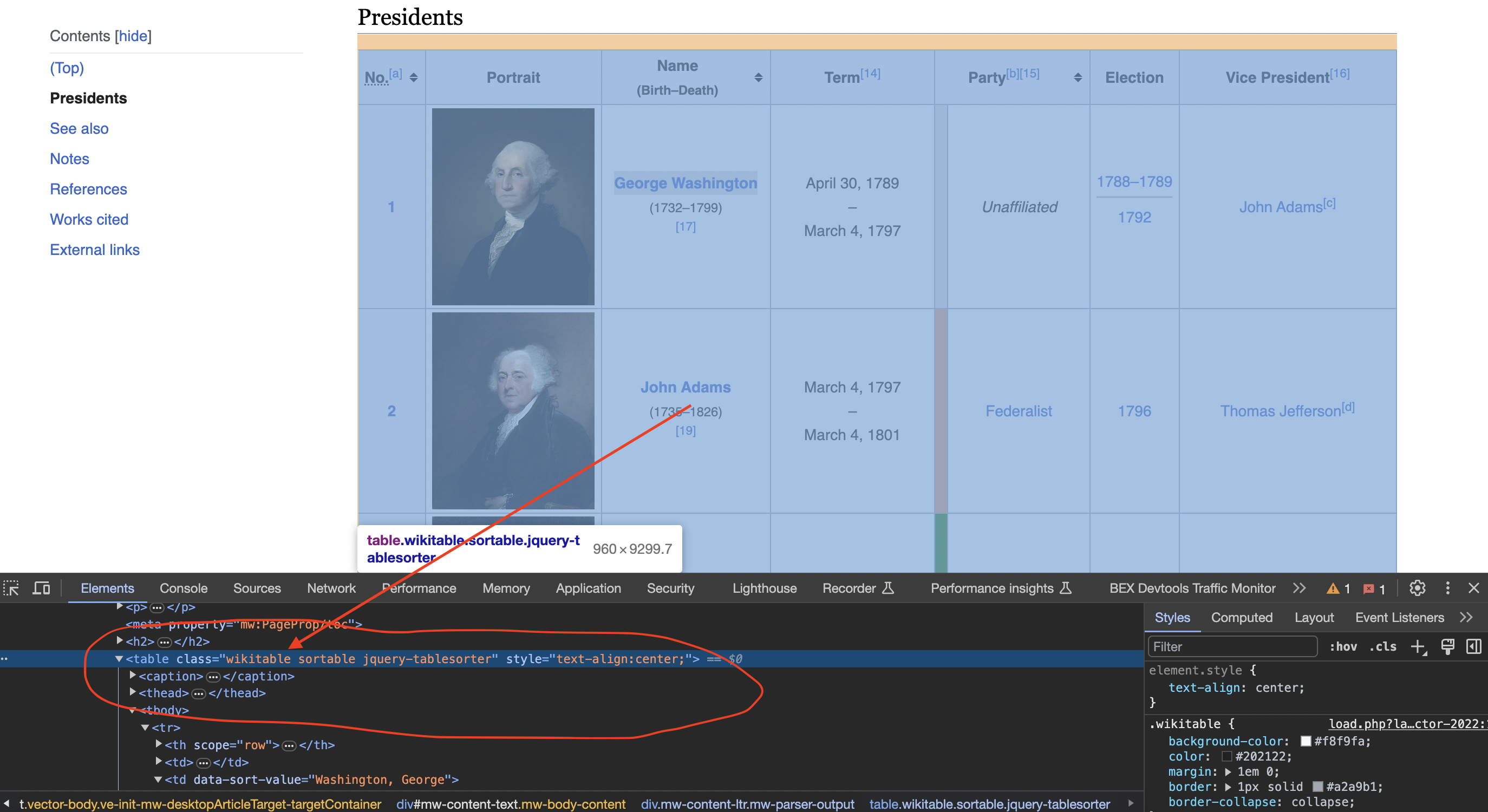

Inspecting the page

When we inspect the page we can see that the table has a class called wikitable and sortable

var table = doc.DocumentNode.SelectSingleNode("//table[@class='wikitable sortable']");

Here, we use XPath to locate the table with the class name 'wikitable sortable' on the page.

Step 7: Extract and Store the Data

var data = new List<List<string>>();

foreach (var row in table.SelectNodes("tr").Skip(1))

{

var columns = row.SelectNodes("td | th");

if (columns != null)

{

var row_data = new List<string>();

foreach (var col in columns)

{

row_data.Add(col.InnerText.Trim());

}

data.Add(row_data);

}

}

We iterate through the table rows and columns, extracting the data and storing it in a structured format (a list of lists).

Step 8: Display the Scraped Data

foreach (var president_data in data)

{

Console.WriteLine("President Data:");

Console.WriteLine("Number: " + president_data[0]);

Console.WriteLine("Name: " + president_data[2]);

Console.WriteLine("Term: " + president_data[3]);

Console.WriteLine("Party: " + president_data[5]);

Console.WriteLine("Election: " + president_data[6]);

Console.WriteLine("Vice President: " + president_data[7]);

Console.WriteLine();

}

We print the scraped data for all presidents to the console.

Practical Considerations and Challenges

Next Steps

Conclusion

Web scraping with C# and HtmlAgilityPack is a valuable skill for data enthusiasts and researchers. By following this step-by-step guide, you've learned how to extract data from Wikipedia. Remember to always scrape responsibly and ethically, respecting website policies and legal regulations.

Full Code: Here's the complete code for your reference:

using System;

using HtmlAgilityPack;

namespace WikipediaScraper

{

class Program

{

static void Main(string[] args)

{

// Define the URL of the Wikipedia page

string url = "https://en.wikipedia.org/wiki/List_of_presidents_of_the_United_States";

// Define a user-agent header to simulate a browser request

string userAgent = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36";

// Create an HtmlWeb instance with the user-agent header

var web = new HtmlWeb

{

UserAgent = userAgent

};

// Load the HTML content of the page

var doc = web.Load(url);

// Check if the request was successful

if (doc != null)

{

// Find the table with the specified class name

var table = doc.DocumentNode.SelectSingleNode("//table[@class='wikitable sortable']");

if (table != null)

{

// Initialize empty lists to store the table data

var data = new System.Collections.Generic.List<System.Collections.Generic.List<string>>();

// Iterate through the rows of the table

foreach (var row in table.SelectNodes("tr").Skip(1)) // Skip the header row

{

var columns = row.SelectNodes("td | th");

if (columns != null)

{

// Extract data from each column and append it to the data list

var row_data = new System.Collections.Generic.List<string>();

foreach (var col in columns)

{

row_data.Add(col.InnerText.Trim());

}

data.Add(row_data);

}

}

// Print the scraped data for all presidents

foreach (var president_data in data)

{

Console.WriteLine("President Data:");

Console.WriteLine("Number: " + president_data[0]);

Console.WriteLine("Name: " + president_data[2]);

Console.WriteLine("Term: " + president_data[3]);

Console.WriteLine("Party: " + president_data[5]);

Console.WriteLine("Election: " + president_data[6]);

Console.WriteLine("Vice President: " + president_data[7]);

Console.WriteLine();

}

}

else

{

Console.WriteLine("Failed to find the table with the specified class name.");

}

}

else

{

Console.WriteLine("Failed to retrieve the web page.");

}

}

}

}

In more advanced implementations you will need to even rotate the User-Agent string so the website cant tell its the same browser!

If we get a little bit more advanced, you will realize that the server can simply block your IP ignoring all your other tricks. This is a bummer and this is where most web crawling projects fail.

Overcoming IP Blocks

Investing in a private rotating proxy service like Proxies API can most of the time make the difference between a successful and headache-free web scraping project which gets the job done consistently and one that never really works.

Plus with the 1000 free API calls running an offer, you have almost nothing to lose by using our rotating proxy and comparing notes. It only takes one line of integration to its hardly disruptive.

Our rotating proxy server Proxies API provides a simple API that can solve all IP Blocking problems instantly.

Hundreds of our customers have successfully solved the headache of IP blocks with a simple API.

The whole thing can be accessed by a simple API like below in any programming language.

curl "http://api.proxiesapi.com/?key=API_KEY&url=https://example.com"We have a running offer of 1000 API calls completely free. Register and get your free API Key here.