In this article, we will learn how to use JavaScript and the cheerio library to download all the images from a Wikipedia page.

---

Overview

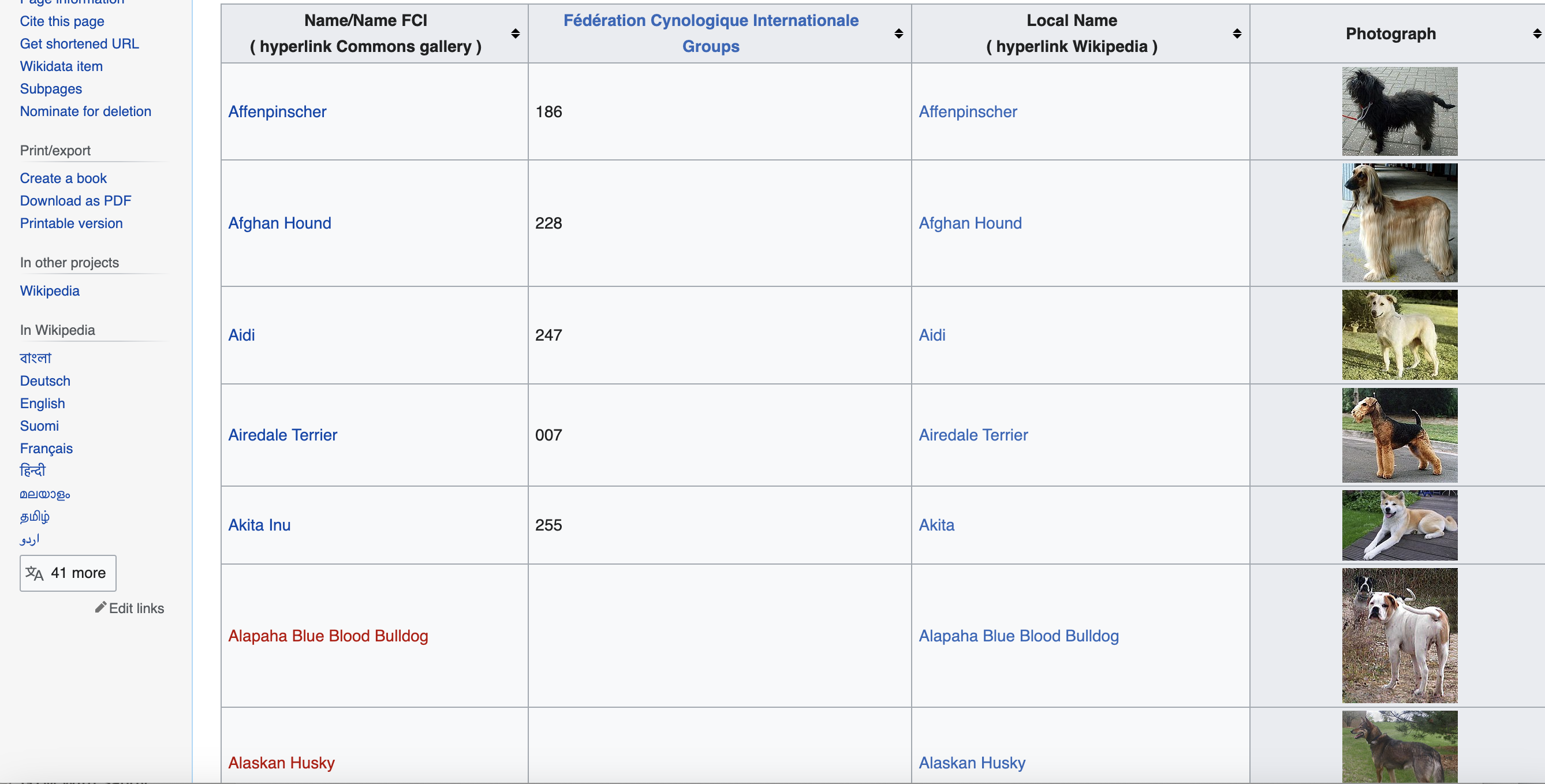

The goal is to extract the names, breed groups, local names, and image URLs for all dog breeds listed on this Wikipedia page. We will store the image URLs, download the images and save them to a local folder.

Here are the key steps we will cover:

- Import required modules

- Send HTTP request to fetch the Wikipedia page

- Parse the page HTML using cheerio

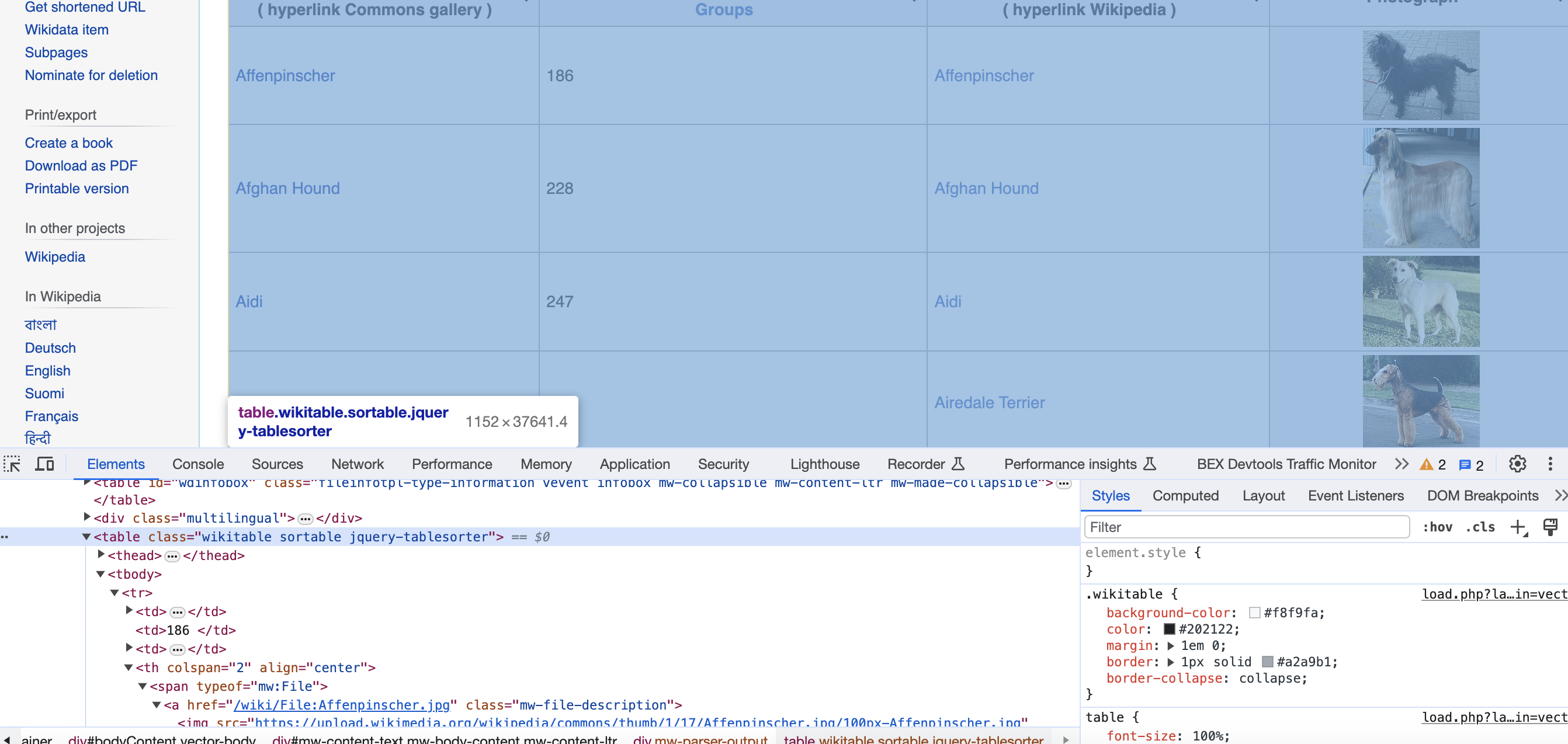

- Find the table with dog breed data using a CSS selector

- Iterate through the table rows

- Extract data from each column

- Download images and save locally

- Print/process extracted data

Let's go through each of these steps in detail.

Imports

We need these modules:

const request = require('request');

const cheerio = require('cheerio');

const fs = require('fs');

Send HTTP Request

To download the web page:

const url = 'https://commons.wikimedia.org/wiki/List_of_dog_breeds';

request({

url: url,

headers: {

'User-Agent': 'Mozilla/5.0'

}

}, (error, response, html) => {

// Parse HTML

});

We make a GET request and provide a user-agent header.
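The callback receives `error`, `response`, and `html`, but nothing in the snippet checks whether the request actually succeeded. A minimal sketch of such a check follows. `describeFailure` is a hypothetical helper, not part of `request`; it assumes only that `response.statusCode` holds the numeric HTTP status.

```javascript
// Hypothetical helper: returns a message describing why a response is
// unusable, or null when it looks safe to parse.
function describeFailure(error, response) {
  if (error) {
    return `Request failed: ${error.message}`;
  }
  if (!response || response.statusCode !== 200) {
    const status = response ? response.statusCode : 'no response';
    return `Unexpected status: ${status}`;
  }
  return null;
}

// Stand-in objects shaped like the callback arguments.
console.log(describeFailure(null, { statusCode: 200 })); // null
console.log(describeFailure(null, { statusCode: 404 })); // Unexpected status: 404
console.log(describeFailure(new Error('ECONNRESET'), undefined)); // Request failed: ECONNRESET
```

Inside the callback, a non-null result would be a reason to log the message and return before parsing.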

Parse HTML

To parse the HTML:

const $ = cheerio.load(html);

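The `$` function returned by `cheerio.load` lets us query the parsed document with jQuery-style selectors.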

Find Breed Table

We use a CSS selector to find the table element:

const table = $('table.wikitable.sortable');

This selects the `<table>` tag with the classes `wikitable` and `sortable`.

The remaining steps each get a short snippet in the sections that follow:

- We loop through the rows, slicing to skip the header row.

- Inside the loop, we extract the column data.

- To download and save images, we pipe the image stream directly to a file.

- We store the extracted data in arrays, which can then be processed as needed.

The full code, listed after these snippets, is a complete JavaScript solution using cheerio to scrape data and images from HTML tables. The same approach can apply to many websites.

While these examples are great for learning, scraping production-level sites can pose challenges like CAPTCHAs, IP blocks, and bot detection. Rotating proxies and automated CAPTCHA solving can help. Proxies API offers a simple API for rendering pages with built-in proxy rotation, CAPTCHA solving, and evasion of IP blocks, and it has a free tier to get started.

Iterate Through Rows

table.find('tr').slice(1).each((i, elem) => {

// Extract data

});

Extract Column Data

const cells = $(elem).find('td, th');

const name = $(cells[0]).find('a').text().trim();

const group = $(cells[1]).text().trim();

const localName = $(cells[2]).find('span').text().trim() || '';

const img = $(cells[3]).find('img');

const photograph = img.attr('src') || '';
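The `src` attribute is not always a full URL. Pages may use protocol-relative (`//host/path`) or root-relative (`/path`) values, which an HTTP client generally cannot fetch as-is. Here is a minimal sketch of normalizing them with Node's built-in `URL` class. `toAbsoluteUrl` and `PAGE_URL` are hypothetical names, and the sample paths are made up for illustration.

```javascript
// Base used to resolve relative image paths (the page being scraped).
const PAGE_URL = 'https://commons.wikimedia.org/wiki/List_of_dog_breeds';

// Hypothetical helper: resolves src against the page URL.
// Returns '' for empty or unparseable input.
function toAbsoluteUrl(src, base = PAGE_URL) {
  if (!src) return '';
  try {
    return new URL(src, base).href;
  } catch (err) {
    return '';
  }
}

console.log(toAbsoluteUrl('//upload.wikimedia.org/a/b/Dog.jpg'));
// https://upload.wikimedia.org/a/b/Dog.jpg
console.log(toAbsoluteUrl('/static/logo.png'));
// https://commons.wikimedia.org/static/logo.png
```

Already-absolute URLs pass through unchanged, so the helper can be applied to every extracted `src`.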

Download Images

if (photograph) {

request(photograph).pipe(fs.createWriteStream(`dog_images/${name}.jpg`));

}
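Breed names can contain spaces or slashes, and the image is not always a JPEG, so `dog_images/${name}.jpg` may produce an invalid or misleading path. The sketch below shows one way to build a safer file path. `imagePathFor` is a hypothetical helper, and the sample name and URL are made up for illustration.

```javascript
const path = require('path');

// Hypothetical helper: builds "<dir>/<sanitized name><extension>".
function imagePathFor(dir, name, imageUrl) {
  // Keep letters, digits, "_" and "-"; collapse any other run of characters to "_".
  const safeName = name.trim().replace(/[^\p{L}\p{N}_-]+/gu, '_') || 'unnamed';

  // Take the extension from the URL path, falling back to .jpg.
  let ext = '.jpg';
  try {
    ext = path.extname(new URL(imageUrl).pathname) || '.jpg';
  } catch (err) {
    // imageUrl was not an absolute URL; keep the default extension.
  }
  return path.join(dir, safeName + ext.toLowerCase());
}

console.log(imagePathFor('dog_images', 'Poodle / Pudel', 'https://upload.wikimedia.org/a/Poodle.PNG'));
// e.g. dog_images/Poodle_Pudel.png on POSIX systems
```

Note that `fs.createWriteStream` does not create missing folders, so the target directory has to exist before the first write.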

Store Extracted Data

names.push(name);

groups.push(group);

localNames.push(localName);

photographs.push(photograph);
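Four parallel arrays stay aligned only as long as every row pushes to all of them. As one way to process them afterwards, the sketch below zips the arrays into one object per breed and serializes the result as JSON. `toRecords` is a hypothetical helper, and the sample values are placeholders, not real scraped data.

```javascript
// Hypothetical helper: combines the parallel arrays into one record per row.
// Missing entries become empty strings so every record has the same shape.
function toRecords(names, groups, localNames, photographs) {
  return names.map((name, i) => ({
    name,
    group: groups[i] || '',
    localName: localNames[i] || '',
    photograph: photographs[i] || '',
  }));
}

// Placeholder data standing in for the scraped arrays.
const records = toRecords(
  ['Breed A', 'Breed B'],
  ['Group 1', 'Group 2'],
  ['Local A', ''],
  ['https://example.org/a.jpg', '']
);
console.log(JSON.stringify(records, null, 2));
```

The resulting array can be passed to `fs.writeFileSync` as JSON or filtered and sorted like any other list of objects.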

Full Code

Here is the full code:

// Imports

const request = require('request');

const cheerio = require('cheerio');

const fs = require('fs');

// Arrays to store data

let names = [];

let groups = [];

let localNames = [];

let photographs = [];

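// Make sure the output folder exists before any image is written

fs.mkdirSync('dog_images', { recursive: true });

// Fetch HTML

const url = 'https://commons.wikimedia.org/wiki/List_of_dog_breeds';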

request({

url: url,

headers: {

'User-Agent': 'Mozilla/5.0'

}

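}, (error, response, html) => {

// Stop if the request failed or returned a non-200 status

if (error || response.statusCode !== 200) {

console.error('Failed to fetch page:', error || response.statusCode);

return;

}

// Load HTML

const $ = cheerio.load(html);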

// Find table

const table = $('table.wikitable.sortable');

// Iterate rows

table.find('tr').slice(1).each((i, elem) => {

// Get cells

const cells = $(elem).find('td, th');

// Extract data

const name = $(cells[0]).find('a').text().trim();

const group = $(cells[1]).text().trim();

const localName = $(cells[2]).find('span').text().trim() || '';

const img = $(cells[3]).find('img');

const photograph = img.attr('src') || '';

// Download image

if (photograph) {

request(photograph).pipe(fs.createWriteStream(`dog_images/${name}.jpg`));

}

// Store data

names.push(name);

groups.push(group);

localNames.push(localName);

photographs.push(photograph);

});

});
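The full code starts every download with `.pipe()` and never learns whether a file finished writing or failed. One alternative, sketched here under the assumption that each download is available as a Node readable stream, is the built-in `stream.pipeline`, which reports an error from either stream through a single callback. `saveStream` is a hypothetical helper. The demo writes an in-memory stream to a temporary file instead of a real HTTP response, so it runs offline.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');
const { pipeline, Readable } = require('stream');

// Hypothetical helper: resolves with the file path once all data is written,
// rejects if either the source stream or the file stream fails.
function saveStream(source, filePath) {
  return new Promise((resolve, reject) => {
    pipeline(source, fs.createWriteStream(filePath), (err) => {
      if (err) reject(err);
      else resolve(filePath);
    });
  });
}

// Offline demo: an in-memory stream stands in for an image download.
const demoPath = path.join(os.tmpdir(), 'saveStream-demo.bin');
saveStream(Readable.from([Buffer.from('fake image bytes')]), demoPath)
  .then((p) => console.log(`saved ${fs.statSync(p).size} bytes to ${p}`))
  .catch((err) => console.error(`download failed: ${err.message}`));
```

Collecting the returned promises and awaiting them with `Promise.all` would make it possible to print a summary only after every image has been saved.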
