Web scraping is the process of extracting data from websites. This can be useful for gathering large amounts of data for analysis. Python is a popular language for web scraping due to its many scraping libraries and simple syntax. ChatGPT is an AI assistant that can be helpful for generating code and explanations for web scraping tasks. This article will provide an overview of web scraping in Python and how ChatGPT can assist.

Introduction to Web Scraping

Web scraping involves programmatically fetching data from websites. This is done by sending HTTP requests to the target site and parsing the HTML, XML or JSON response. Popular Python libraries for web scraping include:

The general workflow for a basic web scraper is:

- Send HTTP request to fetch page

- Parse text response and extract relevant data

- Store scraped data

- Repeat for other pages

This can be extended to scrape various data types, handle pagination, scrape JavaScript generated content, avoid detection etc.

ChatGPT for Web Scraping Help

ChatGPT is an AI assistant created by OpenAI to be helpful, harmless, and honest. It can generate natural language explanations and code for a variety of topics. For web scraping, some ways ChatGPT can help are:

Generating Explanations

If you are stuck on a web scraping task, ChatGPT can provide explanations of web scraping concepts or specifics for your use case. Some examples:

Writing Code Snippets

You can provide a description of what you want your code to do and have ChatGPT generate starter code snippets for you. For example:

Be sure to validate any code ChatGPT provides before using it.

Improving Your Prompts

If ChatGPT is not providing helpful responses, you can ask it to suggest ways to improve your prompt to get better results.

Asking Follow-up Questions

Engage in a back and forth conversation with ChatGPT to get explanations for any follow-up questions you have.

Explaining Errors

Share any errors you are getting and ask ChatGPT to explain the issue and how to fix it.

Web Scraping Example Using ChatGPT

Let's go through an example of web scraping a Wikipedia page with some help from ChatGPT along the way.

Goal

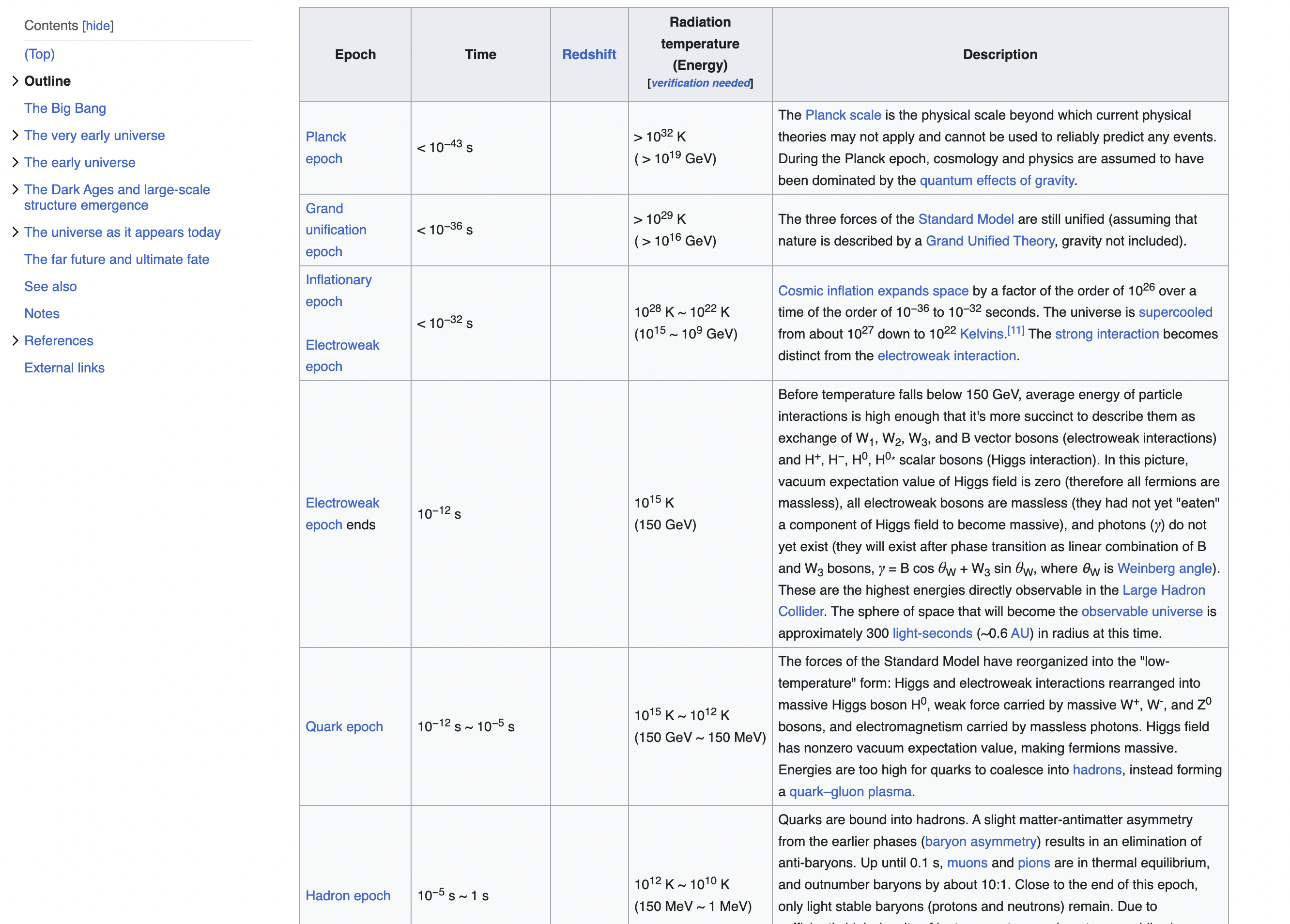

The goal is to get the chronology of the universe located in this Wikipedia page https://en.wikipedia.org/wiki/Chronology_of_the_universe

There is a nice table in the page that gives you the timeline of events and various epochs…

Step 1: The first task is to download the page. Lets ask ChatGPT for help here with this prompt…

give me a script to download this page in python... https://en.wikipedia.org/wiki/Chronology_of_the_universe

import beautiful soupChatGPT should give you this code…

import requests

from bs4 import BeautifulSoup

url = "https://en.wikipedia.org/wiki/Chronology_of_the_universe"

headers = {

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36"

}

response = requests.get(url, headers=headers)

if response.status_code == 200:

# Parse the page content

soup = BeautifulSoup(response.text, 'html.parser')

# Save the parsed HTML content to a local file

with open("chronology_of_the_universe.html", "w", encoding='utf-8') as file:

file.write(str(soup))

print("Page has been downloaded and saved successfully!")

else:

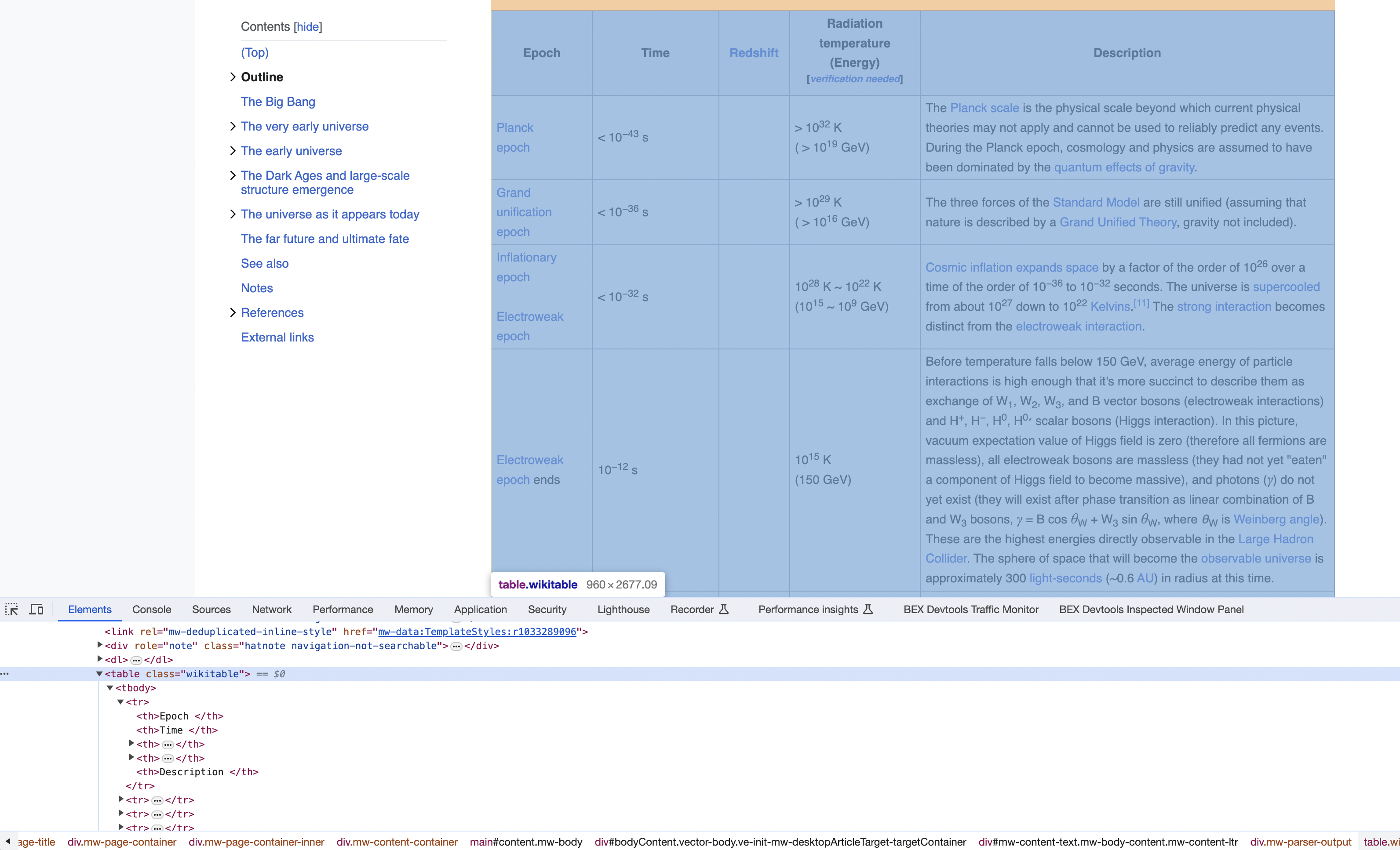

print("Failed to retrieve the webpage. Status Code:", response.status_code)Step 2: Inspect the HTML and look for hints that we can pass to chatGPT. In this case, you can see that the whole table has a class named wikitable

Step 3: Help out ChatGPT with this info. Here is the prompt

I want the script to extract a table with the class wikitable - The <th> contains the headings. Extract each row and columns into a csv fileChatGPT should return the following code…

import requests

import csv

from bs4 import BeautifulSoup

url = "https://en.wikipedia.org/wiki/Chronology_of_the_universe"

headers = {

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36"

}

response = requests.get(url, headers=headers)

if response.status_code == 200:

soup = BeautifulSoup(response.text, 'html.parser')

# Find the first table with class 'wikitable'

table = soup.find('table', class_='wikitable')

if table:

# Extract column headers

headers = [header.get_text(strip=True) for header in table.find_all('th')]

# Open a CSV file for writing

with open('wikitable.csv', 'w', newline='', encoding='utf-8') as file:

writer = csv.writer(file)

# Write the headers to the CSV file

writer.writerow(headers)

# Loop over the rows and write to the CSV file

for row in table.find_all('tr'):

columns = row.find_all('td')

if columns:

writer.writerow([column.get_text(strip=True) for column in columns])

print('Table has been extracted and saved to wikitable.csv successfully!')

else:

print('No table with class "wikitable" found.')

else:

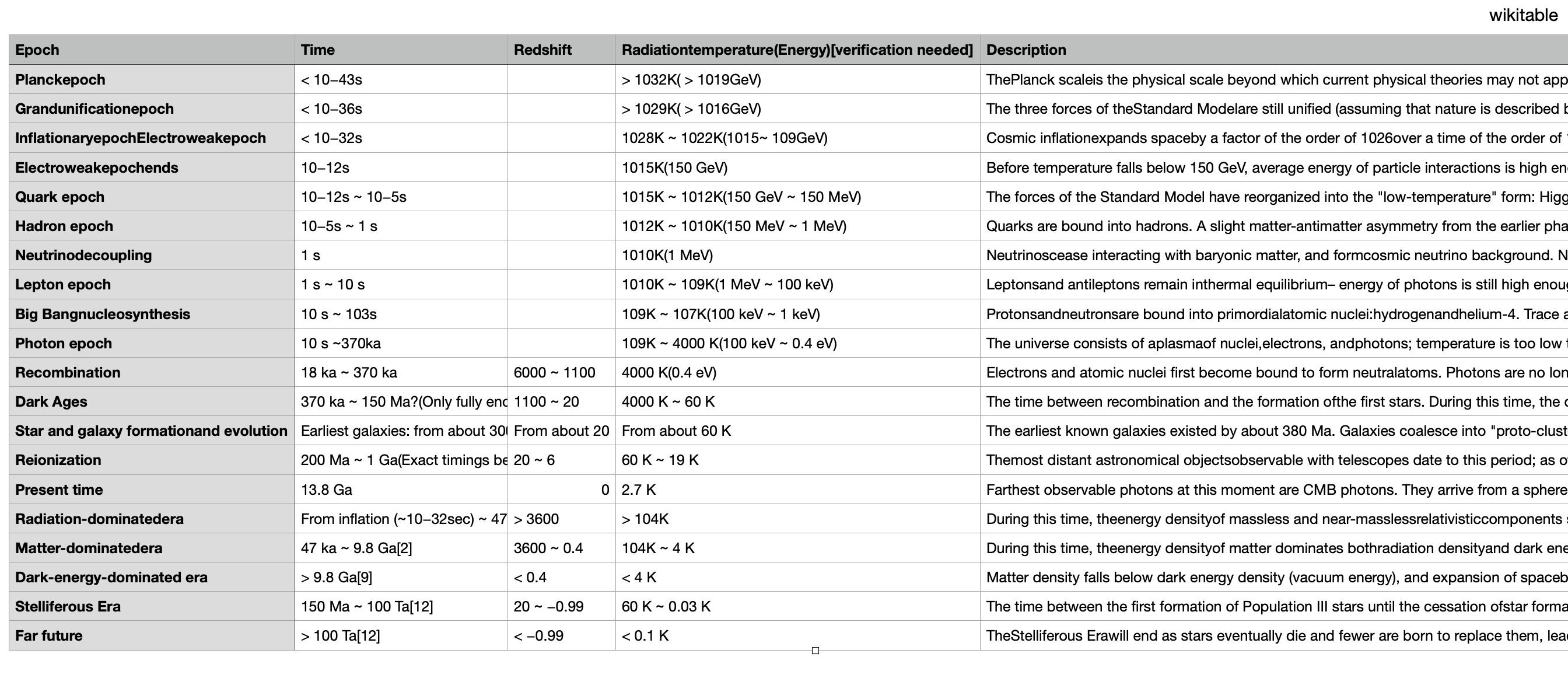

print("Failed to retrieve the webpage. Status Code:", response.status_code)and when you run it, it creates a CSV file which contains the following data…

This is neat. right?

notice the code. It has modified it to include this line…

table = soup.find('table', class_='wikitable')the find function of beautiful soup library is used a lot in web scraping as you can simply tell it to get any element with an ID or class name.

Best Practices for Web Scraping

When scraping websites, keep in mind:

Conclusion

Some key points:

Web scraping allows gathering valuable data from websites at scale. With Python and a bit of help from ChatGPT, you can build scrapers to extract the information you need.

ChatGPT heralds an exciting new era in intelligent automation!

However, this approach also has some limitations:

A more robust solution is using a dedicated web scraping API like Proxies API

With Proxies API, you get:

With features like automatic IP rotation, user-agent rotation and CAPTCHA solving, Proxies API makes robust web scraping easy via a simple API:

curl "https://api.proxiesapi.com/?key=API_KEY&url=targetsite.com"

Get started now with 1000 free API calls to supercharge your web scraping!