pip3 install beautifulsoup4

We will also need the libraries requests, lxml and soupsieve to fetch data, break it down to XML, and to use CSS selectors. Install them using.

pip3 install requests soupsieve lxml

Once installed open an editor and type in.

# -*- coding: utf-8 -*-

from bs4 import BeautifulSoup

import requestsNow let's go to the Yelp San Fransisco restaurants listing page and inspect the data we can get.

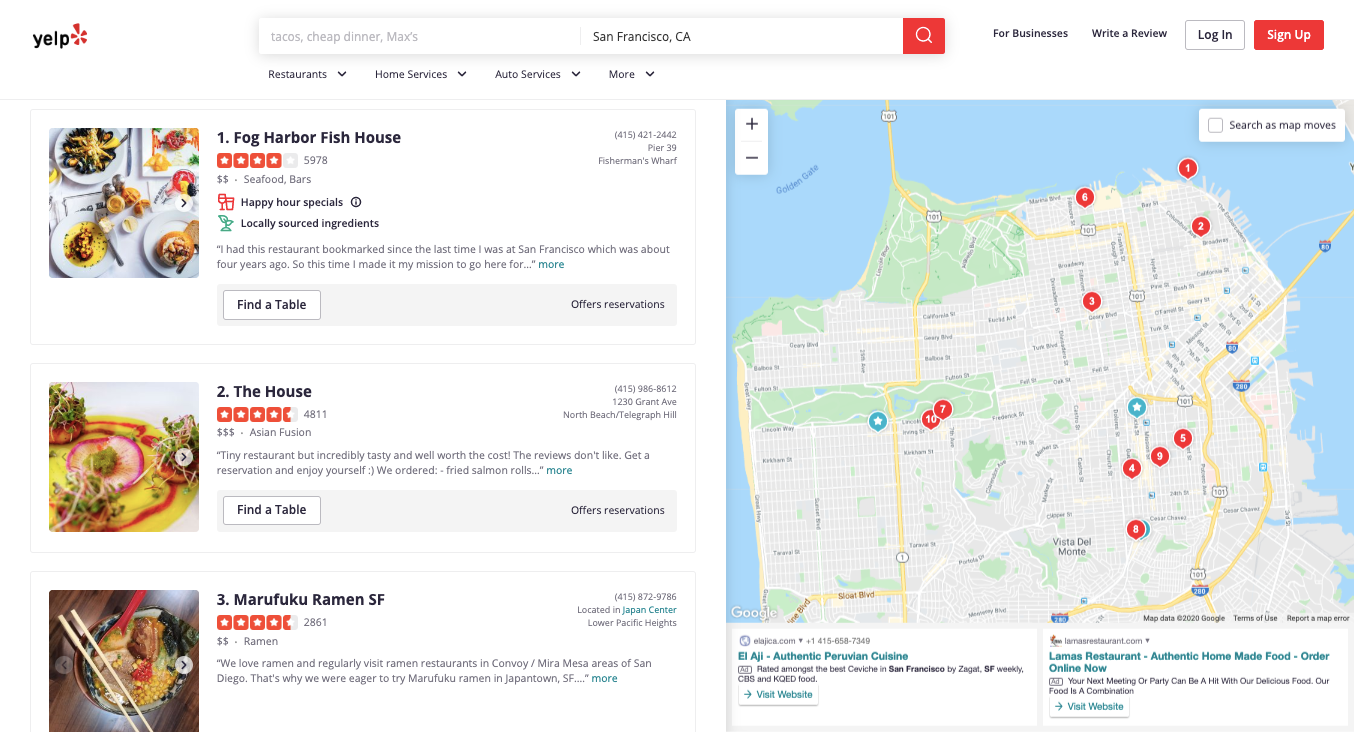

This is how it looks:

Back to our code now. Let's try and get this data by pretending we are a browser like this.

# -*- coding: utf-8 -*-

from bs4 import BeautifulSoup

import requests

headers = {'User-Agent':'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_2) AppleWebKit/601.3.9 (KHTML, like Gecko) Version/9.0.2 Safari/601.3.9'}

url='https://www.yelp.com/search?cflt=restaurants&find_loc=San Francisco, CA'

response=requests.get(url,headers=headers)

print(response)Save this as yelp_bs.py.

If you run it.

python3 yelp_bs.pyYou will see the whole HTML page

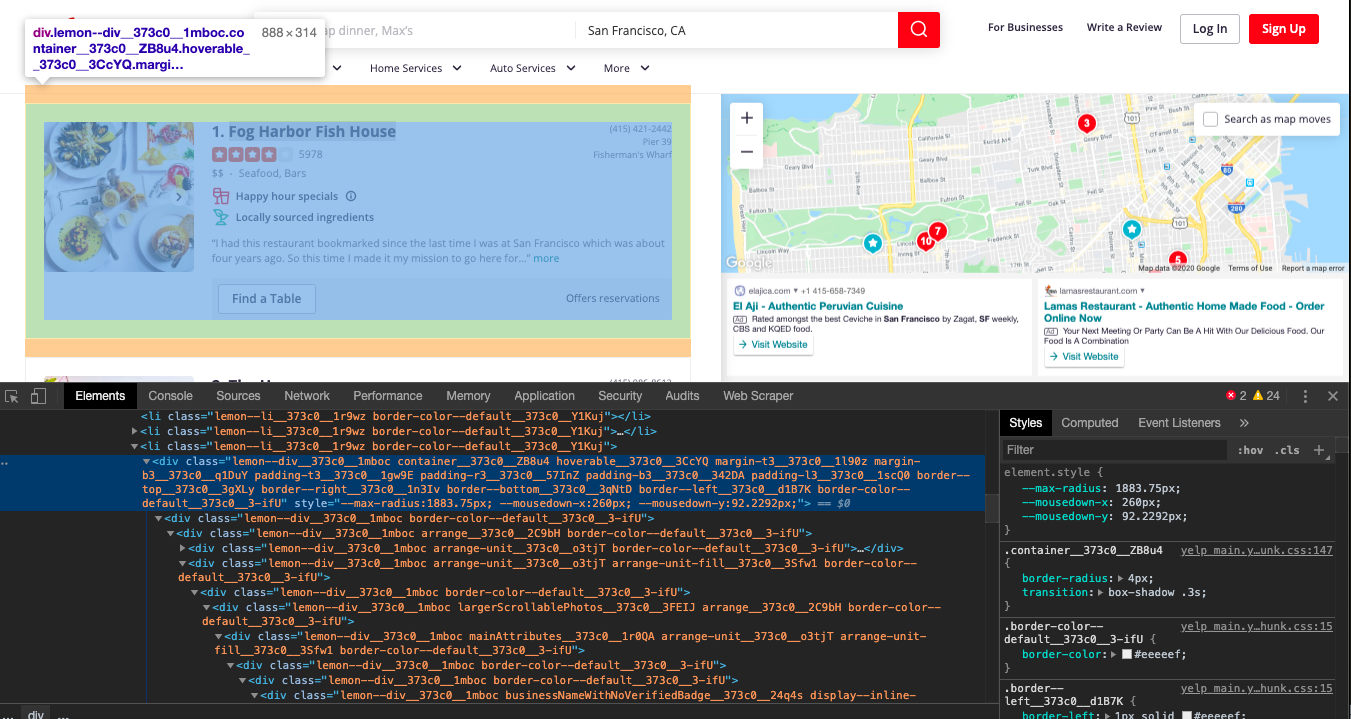

Now, let's use CSS selectors to get to the data we want. To do that let's go back to Chrome and open the inspect tool.

We notice that all the individual rows of data are contained in a

# -*- coding: utf-8 -*-

from bs4 import BeautifulSoup

import requests

headers = {'User-Agent':'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_2) AppleWebKit/601.3.9 (KHTML, like Gecko) Version/9.0.2 Safari/601.3.9'}

url='https://www.yelp.com/search?cflt=restaurants&find_loc=San Francisco, CA'

response=requests.get(url,headers=headers)

soup=BeautifulSoup(response.content,'lxml')

for item in soup.select('[class*=container]'):

try:

print(item)

except Exception as e:

raise e

print('')This prints all the content in each of the containers that hold the restaurant data.

We now can pick out classes inside these rows that contain the data we want. We notice that the title is inside a tag. We select this but also do all other selections under this protective umbrella. This is because in the selection above the class container might be used to contain other things other than the data we want. So to be sure, we make sure that there is a tag in there before we scrape other pieces of data.

# -*- coding: utf-8 -*-

from bs4 import BeautifulSoup

import requests

headers = {'User-Agent':'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_2) AppleWebKit/601.3.9 (KHTML, like Gecko) Version/9.0.2 Safari/601.3.9'}

url='https://www.yelp.com/search?cflt=restaurants&find_loc=San Francisco, CA'

response=requests.get(url,headers=headers)

soup=BeautifulSoup(response.content,'lxml')

for item in soup.select('[class*=container]'):

try:

#print(item)

if item.find('h4'):

name = item.find('h4').get_text()

print(name)

print('------------------')

except Exception as e:

raise e

print('')

# -*- coding: utf-8 -*-

from bs4 import BeautifulSoup

import requests

headers = {'User-Agent':'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_2) AppleWebKit/601.3.9 (KHTML, like Gecko) Version/9.0.2 Safari/601.3.9'}

url='https://www.yelp.com/search?cflt=restaurants&find_loc=San Francisco, CA'

response=requests.get(url,headers=headers)

soup=BeautifulSoup(response.content,'lxml')

for item in soup.select('[class*=container]'):

try:

#print(item)

if item.find('h4'):

name = item.find('h4').get_text()

print(name)

print('------------------')

except Exception as e:

raise e

print('')

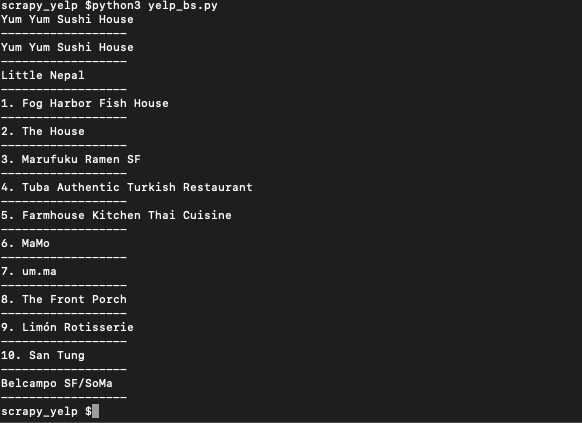

If you run it it will print out all the names.

Bingo!! we got the names...

Now with the same process, we get the other data like phone, address, review count, ratings, price range etc...

# -*- coding: utf-8 -*-

from bs4 import BeautifulSoup

import requests

headers = {'User-Agent':'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_2) AppleWebKit/601.3.9 (KHTML, like Gecko) Version/9.0.2 Safari/601.3.9'}

url='https://www.yelp.com/search?cflt=restaurants&find_loc=San Francisco, CA'

response=requests.get(url,headers=headers)

soup=BeautifulSoup(response.content,'lxml')

for item in soup.select('[class*=container]'):

try:

#print(item)

if item.find('h4'):

name = item.find('h4').get_text()

print(name)

print(soup.select('[class*=reviewCount]')[0].get_text())

print(soup.select('[aria-label*=rating]')[0]['aria-label'])

print(soup.select('[class*=secondaryAttributes]')[0].get_text())

print(soup.select('[class*=priceRange]')[0].get_text())

print(soup.select('[class*=priceCategory]')[0].get_text())

print('------------------')

except Exception as e:

raise e

print('')

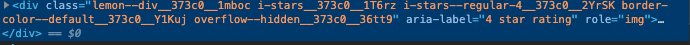

Notice the trickery we employ when we want to get the ratings... We know that the ratings are hidden as a label in this div

so we get it with this line.. it selects the element with the attribute aria-label but only ones that have the word label in them and then goes onto to ask for its aria-label attribute value... loved writing this one...

print(soup.select('[aria-label*=rating]')[0]['aria-label'])

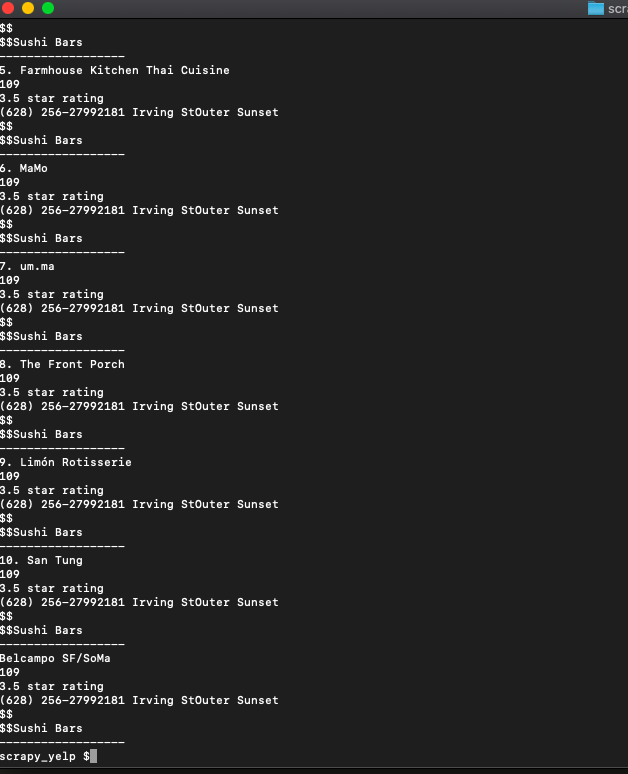

When we run it it will print out every detail we want like this...

We even added a separator to show where each restaurant detail ends. You can now pass this data into an array or save it to CSV and do whatever you want.

Otherwise, you tend to get IP blocked a lot by automatic location, usage, and bot detection algorithms.

Our rotating proxy server Proxies API provides a simple API that can solve all IP Blocking problems instantly.

- With millions of high speed rotating proxies located all over the world,

- With our automatic IP rotation

- With our automatic User-Agent-String rotation (which simulates requests from different, valid web browsers and web browser versions)

- With our automatic CAPTCHA solving technology,

Hundreds of our customers have successfully solved the headache of IP blocks with a simple API.

The whole thing can be accessed by a simple API like below in any programming language.

curl "http://api.proxiesapi.com/?key=API_KEY&url=https://example.com"

We have a running offer of 1000 API calls completely free. Register and get your free API Key here.