Web scraping is a useful technique to programmatically extract data from websites. Often you need to scrape multiple pages from a site to gather complete information. In this article, we will see how to scrape multiple pages in PHP using the Simple HTML DOM library.

Prerequisites

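To follow along, you'll need PHP installed and the Simple HTML DOM library. Download simple_html_dom.php, place it in the same directory as your script, and include it: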

include('simple_html_dom.php');

Define Base URL

We'll scrape a blog whose listing pages follow a predictable URL pattern:

<https://copyblogger.com/blog/>

<https://copyblogger.com/blog/page/2/>

<https://copyblogger.com/blog/page/3/>

Let's define the base URL pattern:

$base_url = 'https://copyblogger.com/blog/page/%d/';

The %d placeholder marks where the page number goes. sprintf() fills it in when we build each page URL.

Specify Number of Pages

Next, we'll specify how many pages to scrape. Let's scrape the first 5 pages:

$num_pages = 5;

Loop Through Pages

We can now loop from 1 to $num_pages and build the URL for each page:

for ($page_num = 1; $page_num <= $num_pages; $page_num++) {

// Construct page URL

$url = sprintf($base_url, $page_num);

// Code to scrape each page

}
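To see what the loop produces before any request is sent, the URL construction can be pulled out on its own. This is a minimal sketch: build_page_urls() is a hypothetical helper, not part of Simple HTML DOM, and it assumes the pattern contains exactly one printf-style %d placeholder.

```php
<?php
// Hypothetical helper: expands a sprintf-style pattern into page URLs.
// Assumes $pattern contains exactly one %d placeholder for the page number.
function build_page_urls(string $pattern, int $numPages): array
{
    $urls = [];
    for ($page = 1; $page <= $numPages; $page++) {
        // sprintf() substitutes the page number for %d
        $urls[$page] = sprintf($pattern, $page);
    }
    return $urls;
}

$urls = build_page_urls('https://copyblogger.com/blog/page/%d/', 3);

foreach ($urls as $page => $url) {
    echo "Page $page: $url\n";
}
```

Note that the first listing page above is the bare /blog/ URL. Whether /blog/page/1/ also resolves depends on the site, so it is worth checking in a browser before relying on it.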

Send Request and Check Response

Inside the loop, we'll use file_get_contents() to send a GET request to the page URL.

We'll check that the response is not false to ensure the request succeeded:

$response = file_get_contents($url);

if ($response !== false) {

// Scrape page

} else {

echo "Failed to retrieve page $page_num\n";

}
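A bare file_get_contents() call uses PHP's default timeout and emits a warning when it fails. One way to tighten this up is to pass a stream context, as sketched below. fetch_page() is a hypothetical wrapper, and the timeout and user agent values are assumptions chosen for illustration.

```php
<?php
// Hypothetical wrapper: returns the response body, or null if the
// request failed. Timeout and user agent are illustrative values.
function fetch_page(string $url, int $timeoutSeconds = 10): ?string
{
    $context = stream_context_create([
        'http' => [
            'timeout'    => $timeoutSeconds,      // give up after this many seconds
            'user_agent' => 'ExampleScraper/1.0', // assumed value, identify your script
        ],
    ]);

    // @ hides the warning file_get_contents() emits on failure;
    // the false return value is still checked explicitly below.
    $body = @file_get_contents($url, false, $context);

    return $body === false ? null : $body;
}
```

Inside the loop, the call becomes $response = fetch_page($url); and the check becomes $response !== null. With the default HTTP wrapper settings, a 4xx or 5xx status also makes file_get_contents() return false, so error pages are treated as failures here.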

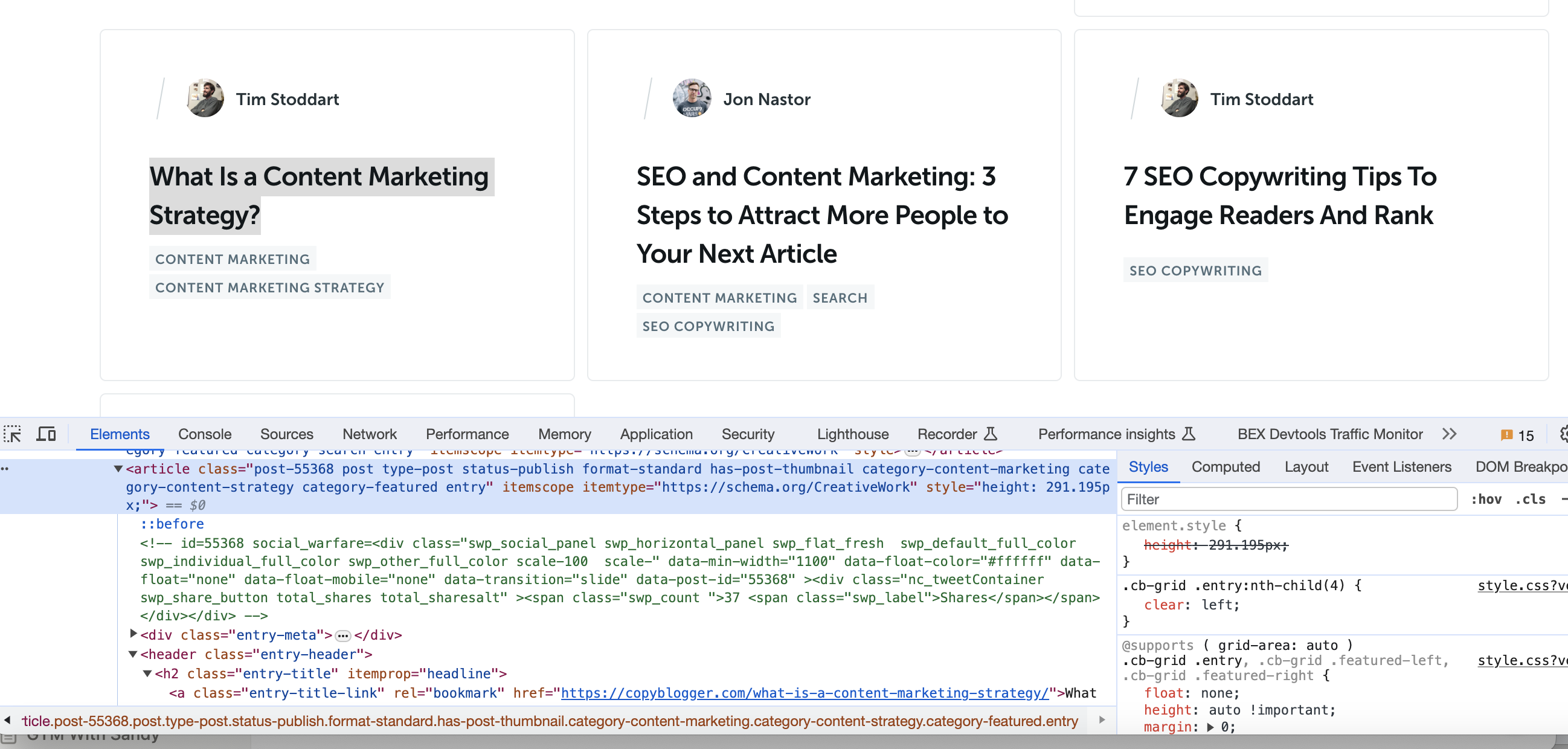

Parse HTML Using DOM Parser

If the request succeeds, we can parse the HTML using the DOM parser:

$html = str_get_html($response);

This gives us a DOM object to extract data from.

Extract Data

Now within the loop we can use the find() method to select elements with CSS-style selectors.

For example, to get all article elements:

$articles = $html->find('article');

We can loop through $articles and pull the title, link, author, and categories out of each one.
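When find() is called with an index and nothing matches, it returns null, so chaining ->plaintext directly onto it raises an error on any article that lacks the element. A small guard avoids that. The sketch below uses a hypothetical node_text() helper and a stand-in object in place of a real Simple HTML DOM node, so it runs without the library.

```php
<?php
// Hypothetical helper: returns the trimmed text of a node, or a fallback
// when the selector matched nothing (find(..., 0) returned null).
function node_text(?object $node, string $fallback = ''): string
{
    if ($node === null) {
        return $fallback;
    }
    return trim((string) $node->plaintext);
}

// Stand-in for a Simple HTML DOM node so the sketch runs on its own.
$fakeTitle = new stdClass();
$fakeTitle->plaintext = "  Example Post Title \n";

echo node_text($fakeTitle), "\n";         // prints "Example Post Title"
echo node_text(null, '(no title)'), "\n"; // prints "(no title)"
```

In the scraper, this would be called as node_text($article->find('h2.entry-title', 0), '(no title)').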

Full Code

The full code to scrape 5 pages is:

include('simple_html_dom.php');

$base_url = 'https://copyblogger.com/blog/page/%d/';

$num_pages = 5;

for ($page_num = 1; $page_num <= $num_pages; $page_num++) {

$url = sprintf($base_url, $page_num);

$response = file_get_contents($url);

if ($response !== false) {

$html = str_get_html($response);

$articles = $html->find('article');

foreach ($articles as $article) {

// Get title

$title = $article->find('h2.entry-title', 0)->plaintext;

// Get the article link (stored in $link so the page $url is not overwritten)

$link = $article->find('a.entry-title-link', 0)->href;

// Get author

$author = $article->find('div.post-author a', 0)->plaintext;

// Get categories

$categories = array();

foreach ($article->find('div.entry-categories a') as $cat) {

$categories[] = $cat->plaintext;

}

// Print data, one field per line

echo "Title: $title\n";

echo "URL: $link\n";

echo "Author: $author\n";

echo "Categories: " . implode(", ", $categories) . "\n";

echo "\n";

}

} else {

echo "Failed to retrieve page $page_num\n";

}

}

This allows us to scrape and extract data from multiple pages sequentially. The full code can be extended to scrape any number of pages.
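When the page count grows, it often helps to collect the rows into an array, pause between requests, and record which pages failed. The sketch below shows one way to structure that. scrape_pages() and its parameters are hypothetical, the one-second default delay is an assumption, and the fetch and parse steps are passed in as callables so the demo runs with stand-ins instead of real requests.

```php
<?php
// Hypothetical driver: visits pages 1..$numPages, pausing between requests.
// $fetch takes a URL and returns the body or null on failure.
// $parse takes a body and returns an array of rows.
function scrape_pages(
    string $pattern,
    int $numPages,
    callable $fetch,
    callable $parse,
    int $delaySeconds = 1
): array {
    $rows = [];
    $failed = [];

    for ($page = 1; $page <= $numPages; $page++) {
        $body = $fetch(sprintf($pattern, $page));

        if ($body === null) {
            $failed[] = $page; // remember the page and move on
        } else {
            foreach ($parse($body) as $row) {
                $rows[] = $row;
            }
        }

        // Pause between requests, but not after the last one
        if ($page < $numPages && $delaySeconds > 0) {
            sleep($delaySeconds);
        }
    }

    return ['rows' => $rows, 'failed' => $failed];
}

// Demo with stand-in callables: page 2 "fails", the others return one row.
$fakeFetch = function (string $url): ?string {
    return substr($url, -3) === '/2/' ? null : "body of $url";
};
$fakeParse = function (string $body): array {
    return [['source' => $body]];
};

$result = scrape_pages('https://example.com/blog/page/%d/', 3, $fakeFetch, $fakeParse, 0);

echo count($result['rows']), " rows, failed pages: ", implode(', ', $result['failed']), "\n";
// prints "2 rows, failed pages: 2"
```

In a real run, $fetch would wrap file_get_contents() and $parse would wrap the str_get_html() and find() calls from the full code above.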

Summary

Web scraping enables collecting large datasets programmatically. With the techniques here, you can scrape and extract information from multiple pages of a site in PHP.

While these examples are great for learning, scraping production-level sites can pose challenges like CAPTCHAs, IP blocks, and bot detection. Rotating proxies and automated CAPTCHA solving can help.

Proxies API offers a simple API for rendering pages with built-in proxy rotation, CAPTCHA solving, and evasion of IP blocks. You can fetch rendered pages in any language without configuring browsers or proxies yourself.

This allows scraping at scale without the headaches of IP blocks. Proxies API has a free tier to get started. Check out the API and sign up for an API key to supercharge your web scraping.

With the power of Proxies API combined with PHP libraries like Simple HTML DOM, you can scrape data at scale without getting blocked.